Forecasting (Prediction) Limits: Example Linear Deterministic Trend Estimated by Least-Squares

Uploaded by zahid_reza

Forecasting (prediction) limits

Example Linear deterministic trend estimated by least-squares

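Model and predictor:

$$Y_t = \beta_0 + \beta_1 t + e_t$$

$$\hat{Y}_{t+l} = \hat{E}(Y_{t+l} \mid Y_1, \ldots, Y_t) = b_0 + b_1 (t + l), \qquad b_0 = b_0(Y_1, \ldots, Y_t),\ b_1 = b_1(Y_1, \ldots, Y_t)$$

Prediction error:

$$e_t(l) = Y_{t+l} - \hat{Y}_{t+l}$$

From regression analysis theory, with $Y_{t+l}$ and $\hat{Y}_{t+l}$ independent:

$$\operatorname{Var}(e_t(l)) = \operatorname{Var}(Y_{t+l} - \hat{Y}_{t+l}) = \operatorname{Var}(Y_{t+l}) + \operatorname{Var}(\hat{Y}_{t+l})$$

$$= \sigma_e^2 + \sigma_e^2 \left[ \frac{1}{t} + \frac{\left(t + l - \frac{t+1}{2}\right)^2}{\sum_{s=1}^{t} \left(s - \frac{t+1}{2}\right)^2} \right] = \sigma_e^2 \left[ 1 + \frac{1}{t} + \frac{\left(t + l - \frac{t+1}{2}\right)^2}{\sum_{s=1}^{t} \left(s - \frac{t+1}{2}\right)^2} \right]$$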

Note! The average of the numbers 1, 2, …, t is

$$\frac{1}{t} \sum_{s=1}^{t} s = \frac{1}{t} \cdot \frac{t(t+1)}{2} = \frac{t+1}{2}$$
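As a supplementary step that the slides do not spell out, the sum of squared deviations of 1, 2, …, t around this average has a closed form, obtained from the standard formula for the sum of the first t squares. It may help when evaluating the variance expression by hand:

```latex
\sum_{s=1}^{t}\left(s-\frac{t+1}{2}\right)^{2}
  = \sum_{s=1}^{t}s^{2}-t\left(\frac{t+1}{2}\right)^{2}
  = \frac{t(t+1)(2t+1)}{6}-\frac{t(t+1)^{2}}{4}
  = \frac{t(t+1)(t-1)}{12}
  = \frac{t\,(t^{2}-1)}{12}
```

For example, with t = 3 the deviations are -1, 0, 1, whose squares sum to 2, in agreement with 3(9 - 1)/12 = 2.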

Hence, calculated prediction limits for $Y_{t+l}$ become

$$\hat{Y}_{t+l} \pm c \cdot \hat{\sigma}_e \sqrt{1 + \frac{1}{t} + \frac{\left(t + l - \frac{t+1}{2}\right)^2}{\sum_{s=1}^{t} \left(s - \frac{t+1}{2}\right)^2}}$$

where c is a quantile of a proper sampling distribution emerging from the use of $\hat{\sigma}_e^2$ as an estimator of $\sigma_e^2$ and the requested coverage of the limits.
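The prediction limits for a least-squares linear trend can be sketched in base R as follows. The series is simulated, all numerical values are illustrative assumptions, and c is taken to be a t quantile with t - 2 degrees of freedom, which is the usual choice in simple linear regression rather than something the slides state explicitly.

```r
# Simulated series with a linear deterministic trend (illustrative values)
set.seed(1)
n  <- 40                          # number of observations (t in the formula)
tt <- 1:n
y  <- 5 + 0.8 * tt + rnorm(n, sd = 2)

fit  <- lm(y ~ tt)
l    <- 1:6                       # lead times
yhat <- coef(fit)[1] + coef(fit)[2] * (n + l)

# Estimate of sigma_e and the factor under the square root
sigma_hat <- summary(fit)$sigma
tbar      <- (n + 1) / 2          # average of 1, 2, ..., n
root_term <- sqrt(1 + 1 / n + (n + l - tbar)^2 / sum((tt - tbar)^2))

# Assumption: c is the t quantile with n - 2 degrees of freedom (95% coverage)
c_quant <- qt(0.975, df = n - 2)
manual  <- cbind(lwr = yhat - c_quant * sigma_hat * root_term,
                 upr = yhat + c_quant * sigma_hat * root_term)

# Cross-check against R's built-in prediction interval
builtin <- predict(fit, newdata = data.frame(tt = n + l),
                   interval = "prediction", level = 0.95)
print(cbind(manual, builtin[, c("lwr", "upr")]))
```

The two pairs of columns should agree, since `predict()` with `interval = "prediction"` evaluates the same expression.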

For t large it suffices to use the standard normal distribution, and a good approximation is also obtained even if the term

$$\frac{1}{t} + \frac{\left(t + l - \frac{t+1}{2}\right)^2}{\sum_{s=1}^{t} \left(s - \frac{t+1}{2}\right)^2}$$

is omitted under the square root:

$$\hat{Y}_{t+l} \pm z_{\alpha/2} \cdot \hat{\sigma}_e, \qquad \text{where } \Pr\left(N(0,1) > z_{\alpha/2}\right) = \alpha/2$$

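ARIMA-models

$$\hat{Y}_{t+l} \pm z_{\alpha/2} \cdot \hat{\sigma}_e \sqrt{\sum_{j=0}^{l-1} \psi_j^2}$$

where $\psi_0, \ldots, \psi_{l-1}$ are functions of the parameter estimates $\hat{\phi}_1, \ldots, \hat{\phi}_p$ and $\hat{\theta}_1, \ldots, \hat{\theta}_q$.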
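A minimal sketch of the ARIMA prediction limits in base R, assuming a simulated stationary AR(2) series (the coefficients, sample size and seed are hypothetical). The ψ-weights are obtained with `ARMAtoMA()` from the estimated coefficients, and the resulting standard errors are compared with those returned by `predict()`.

```r
# Simulated stationary AR(2) series with mean 10 (illustrative values)
set.seed(42)
y   <- arima.sim(model = list(ar = c(0.6, -0.3)), n = 300) + 10
fit <- arima(y, order = c(2, 0, 0))

l_max <- 8
pred  <- predict(fit, n.ahead = l_max)

# psi_0 = 1, psi_1, ..., psi_{l_max - 1} computed from the estimated AR part
phi_hat <- coef(fit)[c("ar1", "ar2")]
psi     <- c(1, ARMAtoMA(ar = phi_hat, lag.max = l_max - 1))

# sigma_e * sqrt(sum of psi_j^2 for j = 0, ..., l - 1), for l = 1, ..., l_max
se_manual <- sqrt(fit$sigma2 * cumsum(psi^2))

# 95% prediction limits using the standard normal quantile
z     <- qnorm(0.975)
lower <- pred$pred - z * se_manual
upper <- pred$pred + z * se_manual
print(cbind(forecast = pred$pred, lower, upper))
```

Note that these standard errors treat the estimated parameters as known, which is why the normal quantile is used.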

Using R

ts=arima(x,...) for fitting models

plot.Arima(ts,...) for plotting fitted models with 95% prediction limits. See documentation for plot.Arima. However, the generic command plot can be used.

forecast.Arima: install and load package forecast. Gives more flexibility with respect to prediction limits.

Seasonal ARIMA models

Example beersales data

A clear seasonal pattern and also a trend, possibly a quadratic trend

Residuals from detrended data

beerq<-lm(beersales~time(beersales)+I(time(beersales)^2))

plot(y=rstudent(beerq),x=as.vector(time(beersales)),type="b",

pch=as.vector(season(beersales)),xlab="Time")

Seasonal pattern, but possibly no long-term trend left

SAC and SPAC of the residuals:

[Figures: SAC and SPAC of the residuals]

Spikes at or close to seasonal lags (or half-seasonal lags)

Modelling the autocorrelation at seasonal lags

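Pure seasonal variation:

Seasonal AR(1)$_{12}$-model

$$Y_t = \delta + \Phi_1 Y_{t-12} + e_t$$

Stationary if $|\Phi_1| < 1$ (roots to the characteristic equation $1 - \Phi_1 x^{12} = 0$ outside the unit circle)

$$\rho_k = \begin{cases} \Phi_1^{k/12} & k = 0, 12, 24, 36, \ldots \\ 0 & \text{otherwise} \end{cases}$$

Seasonal MA(1)$_{12}$-model

$$Y_t = \delta + e_t - \Theta_1 e_{t-12}$$

Invertible if $|\Theta_1| < 1$ (roots to the characteristic equation $1 - \Theta_1 x^{12} = 0$ outside the unit circle)

$$\rho_k = \begin{cases} 1 & k = 0 \\ \dfrac{-\Theta_1}{1 + \Theta_1^2} & k = 12 \\ 0 & \text{otherwise} \end{cases}$$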
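The theoretical autocorrelations of the two pure seasonal models can be checked numerically with `ARMAacf()` from base R. This is only a sketch: the coefficient values 0.7 and 0.5 are hypothetical. R writes MA polynomials with plus signs, so an MA model with a minus sign in front of the seasonal coefficient is entered with the sign flipped.

```r
Phi   <- 0.7   # hypothetical seasonal AR coefficient
Theta <- 0.5   # hypothetical seasonal MA coefficient

# Seasonal AR(1)_12: only the lag-12 AR coefficient is non-zero
acf_sar <- ARMAacf(ar = c(rep(0, 11), Phi), lag.max = 36)

# Seasonal MA(1)_12: Y_t = e_t - Theta * e_{t-12}  ->  ma[12] = -Theta in R
acf_sma <- ARMAacf(ma = c(rep(0, 11), -Theta), lag.max = 36)

# Element k + 1 of each vector holds the autocorrelation at lag k
seasonal_lags <- c(0, 12, 24, 36)
print(round(acf_sar[seasonal_lags + 1], 4))   # Phi^(k/12) at k = 0, 12, 24, 36
print(round(acf_sma[c(1, 13, 25)], 4))        # 1, -Theta/(1 + Theta^2), 0
```

All autocorrelations at non-seasonal lags come out as zero, matching the "otherwise" branch of both formulas.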

Non-seasonal and seasonal variation:

AR(p, P)$_s$ or ARMA(p,0)(P,0)$_s$

$$Y_t = \delta + \phi_1 Y_{t-1} + \cdots + \phi_p Y_{t-p} + \Phi_1 Y_{t-s} + \cdots + \Phi_P Y_{t-Ps} + e_t$$

However, we cannot rule out that the non-seasonal and seasonal variation interact. It is better to use multiplicative seasonal AR models:

$$\left(1 - \phi_1 B - \cdots - \phi_p B^p\right)\left(1 - \Phi_1 B^s - \cdots - \Phi_P B^{Ps}\right) Y_t = \delta + e_t$$

Example:

$$(1 - 0.3B)(1 - 0.2B^{12})\, Y_t = e_t$$

$$(1 - 0.3B - 0.2B^{12} + 0.3 \cdot 0.2\, B^{13})\, Y_t = e_t$$

$$Y_t = 0.3\, Y_{t-1} + 0.2\, Y_{t-12} - 0.06\, Y_{t-13} + e_t$$
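The expansion of a multiplicative seasonal AR polynomial can be verified by multiplying the two coefficient vectors. The helper `polymul()` below is a hypothetical name introduced for this sketch, not a function from any package.

```r
# Multiply two polynomials in B given as coefficient vectors (B^0, B^1, ...)
polymul <- function(a, b) {
  out <- numeric(length(a) + length(b) - 1)
  for (i in seq_along(a)) {
    idx <- i + seq_along(b) - 1
    out[idx] <- out[idx] + a[i] * b
  }
  out
}

ar_regular  <- c(1, -0.3)               # 1 - 0.3 B
ar_seasonal <- c(1, rep(0, 11), -0.2)   # 1 - 0.2 B^12

full <- polymul(ar_regular, ar_seasonal)
names(full) <- paste0("B^", 0:13)
print(full[full != 0])

# Coefficients on Y_{t-1}, Y_{t-12}, Y_{t-13} when Y_t is solved for:
# the sign of every non-constant term is reversed
print(-full[c("B^1", "B^12", "B^13")])
```

The product has non-zero terms at B, B^12 and B^13 only; the B^13 term is the interaction between the non-seasonal and seasonal parts.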

Multiplicative MA(q, Q)$_s$ or ARMA(0,q)(0,Q)$_s$

$$Y_t = \delta + \left(1 - \theta_1 B - \cdots - \theta_q B^q\right)\left(1 - \Theta_1 B^s - \cdots - \Theta_Q B^{Qs}\right) e_t$$

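Mixed models:

$$\left(1 - \phi_1 B - \cdots - \phi_p B^p\right)\left(1 - \Phi_1 B^s - \cdots - \Phi_P B^{Ps}\right) Y_t = \delta + \left(1 - \theta_1 B - \cdots - \theta_q B^q\right)\left(1 - \Theta_1 B^s - \cdots - \Theta_Q B^{Qs}\right) e_t$$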

Many terms! Condensed expression:

$$\phi(B)\, \Phi(B^s)\, Y_t = \delta + \theta(B)\, \Theta(B^s)\, e_t$$

$$\phi(B) = 1 - \sum_{i=1}^{p} \phi_i B^i \ ; \qquad \Phi(B^s) = 1 - \sum_{i=1}^{P} \Phi_i B^{is}$$

$$\theta(B) = 1 - \sum_{j=1}^{q} \theta_j B^j \ ; \qquad \Theta(B^s) = 1 - \sum_{j=1}^{Q} \Theta_j B^{js}$$

ARMA$(p, q)(P, Q)_s$

Non-stationary Seasonal ARIMA models

Non-stationary at non-seasonal level:

Model dth order regular differences:

$$\nabla^d Y_t = \nabla(\nabla(\cdots \nabla Y_t)) = (1 - B)^d\, Y_t$$

Non-stationary at seasonal level:

Seasonal non-stationarity is harder to detect from a plotted time series. The seasonal variation is not stable.

Model Dth order seasonal differences:

$$\nabla_s^D Y_t = \nabla_s(\nabla_s(\cdots \nabla_s Y_t)) = (1 - B^s)^D\, Y_t$$

Example: first-order monthly differences

$$\nabla_{12} Y_t = (1 - B^{12})\, Y_t = Y_t - Y_{t-12}$$

can follow a stable seasonal pattern.

The general Seasonal ARIMA model

$$\phi(B)\, \Phi(B^s)\, (1 - B)^d\, (1 - B^s)^D\, Y_t = \delta + \theta(B)\, \Theta(B^s)\, e_t$$

It does not matter whether regular or seasonal differences are taken first.

ARIMA$(p, d, q)(P, D, Q)_s$
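That the order of regular and seasonal differencing is irrelevant can be checked directly in base R. The sketch below uses the built-in `AirPassengers` series purely as a convenient monthly example; it is not the data analysed in these slides.

```r
y <- log(AirPassengers)   # built-in monthly series, used only for illustration

seasonal_then_regular <- diff(diff(y, lag = 12), lag = 1)
regular_then_seasonal <- diff(diff(y, lag = 1), lag = 12)

# Direct expansion of (1 - B)(1 - B^12) Y_t
n        <- length(y)
idx      <- 14:n
expanded <- y[idx] - y[idx - 1] - y[idx - 12] + y[idx - 13]

print(all.equal(as.numeric(seasonal_then_regular),
                as.numeric(regular_then_seasonal)))
print(all.equal(as.numeric(seasonal_then_regular), as.numeric(expanded)))
```

Both comparisons hold because the operators (1 - B) and (1 - B^12) are polynomials in B and therefore commute. Thirteen observations are lost at the start of the series.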

Model specification, fitting and diagnostic checking

Example beersales data

Clearly non-stationary at non-seasonal level, i.e. there is a long-term trend

Investigate SAC and SPAC of original data

Many substantial spikes both at non-seasonal and at seasonal level. Calls for differencing at both levels.

Try first-order seasonal differences first. Here: monthly data

$$W_t = (1 - B^{12})\, Y_t = Y_t - Y_{t-12}$$

beer_sdiff1 <- diff(beersales,lag=12)

Look at SAC and SPAC again

Better, but now we need to try regular differences

Take first order differences in seasonally differenced data

$$U_t = (1 - B)(1 - B^{12})\, Y_t = (1 - B)\, W_t = W_t - W_{t-1} = Y_t - Y_{t-12} - Y_{t-1} + Y_{t-13}$$

beer_sdiff1rdiff1 <- diff(beer_sdiff1,lag=1)

Look at SAC and SPAC again

SAC starts to look good, but SPAC not

Take second order differences in seasonally differenced data, since we suspected a non-linear long-term trend

$$V_t = (1 - B)^2 (1 - B^{12})\, Y_t = (1 - B)\, U_t = U_t - U_{t-1}$$

$$= W_t - W_{t-1} - (W_{t-1} - W_{t-2}) = W_t - 2W_{t-1} + W_{t-2}$$

$$= Y_t - Y_{t-12} - 2\,(Y_{t-1} - Y_{t-13}) + Y_{t-2} - Y_{t-14}$$

beer_sdiff1rdiff2 <- diff(diff(beer_sdiff1,lag=1),lag=1)
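Why second-order differences suit a non-linear (quadratic) trend can be seen on a noise-free example. The trend coefficients below are hypothetical; the sketch also confirms that nesting `diff()` twice is the same as `diff(x, differences = 2)`.

```r
tt   <- 1:30
quad <- 3 + 0.5 * tt + 0.2 * tt^2      # hypothetical noise-free quadratic trend

d1 <- diff(quad)                       # first differences: still linear in t
d2 <- diff(quad, differences = 2)      # second differences

# Same result by nesting, and by the expansion x_t - 2 x_{t-1} + x_{t-2}
nested   <- diff(diff(quad, lag = 1), lag = 1)
idx      <- 3:length(quad)
expanded <- quad[idx] - 2 * quad[idx - 1] + quad[idx - 2]

print(range(d1))   # not constant: a trend remains after one difference
print(range(d2))   # constant: two differences remove a quadratic trend
```

For a quadratic trend with coefficient 0.2 on t^2, the second differences equal the constant 2 × 0.2 = 0.4.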

Could be an ARMA(2,0)(0,1)$_{12}$ or an ARMA(1,1)(0,1)$_{12}$ (the first pair of orders is the non-seasonal part, the second pair the seasonal part)

For the original data these models become ARIMA(2,2,0)(0,1,1)$_{12}$ and ARIMA(1,2,1)(0,1,1)$_{12}$

model1 <-arima(beersales,order=c(2,2,0),

seasonal=list(order=c(0,1,1),period=12))

Series: beersales

ARIMA(2,2,0)(0,1,1)[12]

Coefficients:

ar1 ar2 sma1

-1.0257 -0.6200 -0.7092

s.e. 0.0596 0.0599 0.0755

sigma^2 estimated as 0.6095: log likelihood=-216.34

AIC=438.69 AICc=438.92 BIC=451.42

Diagnostic checking can be carried out in a condensed way with the function tsdiag. The Ljung-Box test can specifically be obtained from the function Box.test

tsdiag(model1)

[Figure: tsdiag output with panels "standardized residuals", "SPAC(standardized residuals)" and "P-values of Ljung-Box test with K = 24"]

Box.test(residuals(model1), lag = 12, type = "Ljung-Box",

fitdf = 3)

Box-Ljung test

data: residuals(model1)

X-squared = 30.1752, df = 9, p-value = 0.0004096

In the call, lag is K (how many lags are included) and fitdf is p + q + P + Q (how many degrees of freedom are withdrawn from K).

For seasonal data with season length s the L-B test is usually calculated for

K = s, 2s, 3s and 4s

Box.test(residuals(model1), lag = 24, type = "Ljung-Box",

fitdf = 3)

Box-Ljung test

data: residuals(model1)

X-squared = 57.9673, df = 21, p-value = 2.581e-05

Box.test(residuals(model1), lag = 36, type = "Ljung-Box",

fitdf = 3)

Box-Ljung test

data: residuals(model1)

X-squared = 76.7444, df = 33, p-value = 2.431e-05

Box.test(residuals(model1), lag = 48, type = "Ljung-Box",

fitdf = 3)

Box-Ljung test

data: residuals(model1)

X-squared = 92.9916, df = 45, p-value = 3.436e-05

Hence, the residuals from the first model are not satisfactory
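To make explicit what Box.test computes, the Ljung-Box statistic can be reproduced by hand. This sketch uses the built-in `USAccDeaths` series with an illustrative ARIMA(0,1,1)(0,1,1)[12] model (so p + q + P + Q = 2), not the beersales models above.

```r
# Illustrative seasonal model on a built-in monthly series
fit <- arima(USAccDeaths, order = c(0, 1, 1),
             seasonal = list(order = c(0, 1, 1), period = 12))
res <- residuals(fit)

n     <- length(res)
K     <- 24     # number of lags included (here 2s with s = 12)
fitdf <- 2      # p + q + P + Q for this illustrative model

# Sample autocorrelations r_1, ..., r_K of the residuals (lag 0 dropped)
r_k <- acf(res, lag.max = K, plot = FALSE)$acf[-1]

# Ljung-Box statistic and p-value from chi-square with K - fitdf df
Q       <- n * (n + 2) * sum(r_k^2 / (n - seq_len(K)))
p_value <- pchisq(Q, df = K - fitdf, lower.tail = FALSE)

bt <- Box.test(res, lag = K, type = "Ljung-Box", fitdf = fitdf)
print(c(Q_manual = Q, Q_Box.test = unname(bt$statistic)))
print(c(p_manual = p_value, p_Box.test = bt$p.value))
```

A small p-value indicates remaining autocorrelation in the residuals, which is how the outputs above are read.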

model2 <-arima(beersales,order=c(1,2,1),

seasonal=list(order=c(0,1,1),period=12))

print(model2)

Series: beersales

ARIMA(1,2,1)(0,1,1)[12]

Coefficients:

ar1 ma1 sma1

-0.4470 -0.9998 -0.6352

s.e. 0.0678 0.0176 0.0930

sigma^2 estimated as 0.4575: log likelihood=-192.86

AIC=391.72 AICc=391.96 BIC=404.45

Better fit! But is it good?

tsdiag(model2)

Not good! We should maybe try second-order seasonal differencing too.

Time series regression models

The classical set-up uses deterministic trend functions and seasonal indices

$$Y_t = m(t) + S(t) + e_t$$

Examples:

Linear trend in monthly data:

$$Y_t = \beta_0 + \beta_1 t + \sum_{j=2}^{12} \beta_{s,j}\, x_j(t) + e_t, \qquad \text{where } x_j(t) = \begin{cases} 1 & \text{if } t \text{ is in month } j \\ 0 & \text{otherwise} \end{cases}$$

Quadratic trend, no seasonal variation:

$$Y_t = \beta_0 + \beta_1 t + \beta_2 t^2 + e_t$$
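A classical regression with a linear trend and monthly indicator variables can be fitted with `lm()`. The sketch below uses the built-in `AirPassengers` series (log scale) only as an example; the column names `trend` and `month` are chosen for this illustration.

```r
y <- log(AirPassengers)   # built-in monthly series, used only for illustration

dat <- data.frame(
  y     = as.numeric(y),
  trend = seq_along(y),                       # t = 1, 2, ..., n
  month = factor(as.integer(cycle(y)))        # month index 1, ..., 12
)

# Month 1 is the reference level, so indicators x_2(t), ..., x_12(t) enter
fit <- lm(y ~ trend + month, data = dat)
print(round(coef(fit), 4))
```

Treating `month` as a factor makes R create the eleven indicator variables automatically, with the first month absorbed into the intercept.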

The classical set-up can be extended by allowing for autocorrelated error terms (instead of white noise). Usually an AR(1) or AR(2) is sufficient. However, the trend and seasonal terms are still assumed deterministic.
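One way to fit a deterministic trend with autocorrelated errors in base R is to pass the trend as an external regressor to `arima()`. The sketch below simulates a linear trend with AR(1) errors; the true values (intercept 2, slope 0.5, AR coefficient 0.6) and the seed are assumptions made for the illustration.

```r
set.seed(7)
n     <- 200
trend <- 1:n
err   <- arima.sim(model = list(ar = 0.6), n = n)   # AR(1) error term
y     <- 2 + 0.5 * trend + err

# Regression on the trend with AR(1) errors, estimated jointly
fit <- arima(y, order = c(1, 0, 0), xreg = cbind(trend = trend))
print(fit)
```

The coefficient labelled `intercept` corresponds to the constant of the trend line and `trend` to its slope, while `ar1` describes the autocorrelation of the error term.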

Dynamic time series regression models

To extend the classical set-up with explanatory variables comprising other time

series we need another way of modelling.

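Note that a stationary ARMA-model

$$Y_t = \beta_0 + \phi_1 Y_{t-1} + \cdots + \phi_p Y_{t-p} + e_t - \theta_1 e_{t-1} - \cdots - \theta_q e_{t-q}$$

$$\left(1 - \phi_1 B - \cdots - \phi_p B^p\right) Y_t = \beta_0 + \left(1 - \theta_1 B - \cdots - \theta_q B^q\right) e_t$$

$$\phi(B)\, Y_t = \beta_0 + \theta(B)\, e_t$$

can also be written

$$Y_t = \beta_0' + \frac{\theta(B)}{\phi(B)}\, e_t \qquad \left( \beta_0' = \frac{\beta_0}{\phi(B)} \right)$$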

The general dynamic regression model for a response time series $Y_t$ with one covariate time series $X_t$ can be written

$$Y_t = \beta_0 + C \cdot \frac{\omega(B)}{\delta(B)}\, B^b X_t + \frac{\theta(B)}{\phi(B)}\, e_t$$

Special case 1:

$X_t$ relates to some event that has occurred at a certain time point (e.g. 9/11)

It can then either be a step function

$$X_t = S_t^{(T)} = \begin{cases} 1 & t \ge T \\ 0 & t < T \end{cases}$$

or a pulse function

$$X_t = P_t^{(T)} = \begin{cases} 1 & t = T \\ 0 & t \ne T \end{cases}$$

Step functions would imply a permanent change in the level of $Y_t$. Such a change can further be constant or gradually increasing (depending on $\omega(B)$ and $\delta(B)$). It can also be delayed (depending on b).

Pulse functions would imply a temporary change in the level of $Y_t$. Such a change may be just at the specific time point or gradually decreasing (depending on $\omega(B)$ and $\delta(B)$).

Step and pulse functions are used to model the effects of a particular event, a so-called intervention.

Intervention models
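The difference between the two kinds of intervention effect can be sketched in base R. The values T = 5, ω(B) = 2 and δ(B) = 1 - 0.5B are hypothetical, and C = 1 and b = 0 are assumed for simplicity; `response()` is a helper name introduced for this illustration.

```r
n     <- 20
T0    <- 5      # hypothetical intervention time T
omega <- 2      # hypothetical omega(B) = omega_0
delta <- 0.5    # hypothetical delta(B) = 1 - delta_1 * B with delta_1 = 0.5

time_index <- 1:n
step  <- as.numeric(time_index >= T0)   # 1 from time T onwards
pulse <- as.numeric(time_index == T0)   # 1 at time T only

# Effect m_t = omega / (1 - delta_1 B) X_t, i.e. m_t = delta_1 m_{t-1} + omega X_t
response <- function(x) {
  as.numeric(stats::filter(omega * x, filter = delta, method = "recursive"))
}
step_effect  <- response(step)    # rises gradually to a permanent new level
pulse_effect <- response(pulse)   # jumps at T and then decays towards zero

print(round(cbind(time_index, step_effect, pulse_effect), 3))
```

With these values the step effect approaches the permanent level ω/(1 - δ₁) = 4, whereas the pulse effect starts at 2 and is halved at each following time point.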

For $X_t$ being a regular time series (i.e. varying with time) the models are called transfer function models

Potrebbero piacerti anche

- Ch17 Curve FittingDocumento44 pagineCh17 Curve FittingSandip GaikwadNessuna valutazione finora

- A-level Maths Revision: Cheeky Revision ShortcutsDa EverandA-level Maths Revision: Cheeky Revision ShortcutsValutazione: 3.5 su 5 stelle3.5/5 (8)

- Cs421 Cheat SheetDocumento2 pagineCs421 Cheat SheetJoe McGuckinNessuna valutazione finora

- Time Series Analysis: Box-Jenkins MethodDocumento26 pagineTime Series Analysis: Box-Jenkins Methodपशुपति नाथNessuna valutazione finora

- Statistical InferenceDocumento148 pagineStatistical InferenceSara ZeynalzadeNessuna valutazione finora

- Notes Chapter 6 7Documento20 pagineNotes Chapter 6 7awa_caemNessuna valutazione finora

- Q3 Module 18 CLMD4ASTAT&PROB 225-237Documento13 pagineQ3 Module 18 CLMD4ASTAT&PROB 225-237KyotieNessuna valutazione finora

- ST104a Commentary 2022Documento29 pagineST104a Commentary 2022Nghia Tuan NghiaNessuna valutazione finora

- Taking 20the 20fear 20out 20of 20data 20 YsisDocumento347 pagineTaking 20the 20fear 20out 20of 20data 20 Ysisws7ycw6hy2Nessuna valutazione finora

- Final Exam SolutionsDocumento7 pagineFinal Exam SolutionsMeliha PalošNessuna valutazione finora

- Box Jenkins MethodologyDocumento29 pagineBox Jenkins MethodologySylvia CheungNessuna valutazione finora

- 2.2 Vector Autoregression (VAR) : M y T 1, - . - , TDocumento24 pagine2.2 Vector Autoregression (VAR) : M y T 1, - . - , TrunawayyyNessuna valutazione finora

- Ec2 4Documento40 pagineEc2 4masudul9islamNessuna valutazione finora

- Case On ARIMADocumento4 pagineCase On ARIMAShreyash MantriNessuna valutazione finora

- Stat 501 Homework 1 Solutions Spring 2005Documento9 pagineStat 501 Homework 1 Solutions Spring 2005NawarajPokhrelNessuna valutazione finora

- ARIMA (P, D, Q) ModelDocumento4 pagineARIMA (P, D, Q) ModelselamitspNessuna valutazione finora

- Formula SheetDocumento7 pagineFormula SheetMohit RakyanNessuna valutazione finora

- Imputation of Complete No-Stationary Seasonal Time SeriesDocumento13 pagineImputation of Complete No-Stationary Seasonal Time SeriesMuflihah Musa SNessuna valutazione finora

- Time Series Notes9Documento32 pagineTime Series Notes9Sam SkywalkerNessuna valutazione finora

- EE 627: Term 2/2016 Homework 4 Due April 25, 2017: Solution: The Autoregressive Polynomial Can Be Factorized AsDocumento4 pagineEE 627: Term 2/2016 Homework 4 Due April 25, 2017: Solution: The Autoregressive Polynomial Can Be Factorized AsluscNessuna valutazione finora

- Stability Analysis - by Kenil JaganiDocumento10 pagineStability Analysis - by Kenil Jaganikeniljagani513Nessuna valutazione finora

- 36-225 - Introduction To Probability Theory - Fall 2014: Solutions For Homework 1Documento6 pagine36-225 - Introduction To Probability Theory - Fall 2014: Solutions For Homework 1Nick LeeNessuna valutazione finora

- Edgar Recursive EstimationDocumento18 pagineEdgar Recursive EstimationSaurabh K AgarwalNessuna valutazione finora

- List of Formulae in StatisticsDocumento3 pagineList of Formulae in Statisticssawantdt100% (1)

- MJC JC 2 H2 Maths 2011 Mid Year Exam Solutions Paper 2Documento11 pagineMJC JC 2 H2 Maths 2011 Mid Year Exam Solutions Paper 2jimmytanlimlongNessuna valutazione finora

- Narayana Grand Test - 8Documento12 pagineNarayana Grand Test - 8Meet ShahNessuna valutazione finora

- Hilbert Pachpatte InequalitiesDocumento28 pagineHilbert Pachpatte InequalitiesAlvaro CorvalanNessuna valutazione finora

- Basic Econometrics HealthDocumento183 pagineBasic Econometrics HealthAmin HaleebNessuna valutazione finora

- Chapter 3 Laplace TransformDocumento20 pagineChapter 3 Laplace TransformKathryn Jing LinNessuna valutazione finora

- Liu2017 Article AFastAndStableAlgorithmForLineDocumento31 pagineLiu2017 Article AFastAndStableAlgorithmForLineAman JalanNessuna valutazione finora

- Tssol 11Documento5 pagineTssol 11aset999Nessuna valutazione finora

- Frequency ResponseDocumento30 pagineFrequency ResponseGovind KumarNessuna valutazione finora

- Stat 372 Midterm W14 SolutionDocumento4 pagineStat 372 Midterm W14 SolutionAdil AliNessuna valutazione finora

- Simple Nonlinear Time Series Models For Returns: José Maria GasparDocumento10 pagineSimple Nonlinear Time Series Models For Returns: José Maria GasparQueen RaniaNessuna valutazione finora

- Ch17 Curve FittingDocumento44 pagineCh17 Curve Fittingvarunsingh214761Nessuna valutazione finora

- Efficient Observation of Random PhenomenaDocumento34 pagineEfficient Observation of Random PhenomenaponjoveNessuna valutazione finora

- ARIMA Forecasting: Graeme Walsh May 14, 2013Documento5 pagineARIMA Forecasting: Graeme Walsh May 14, 2013Kevin BunyanNessuna valutazione finora

- Chapter 4XDocumento96 pagineChapter 4XAndrei FirteNessuna valutazione finora

- Problem Set 3Documento9 pagineProblem Set 3Biren PatelNessuna valutazione finora

- MEM1833 Topic 3 State Space Realizations HANDOUTDocumento27 pagineMEM1833 Topic 3 State Space Realizations HANDOUTHasrulnizam HashimNessuna valutazione finora

- 2009 2 Art 04Documento8 pagine2009 2 Art 04Raja RamNessuna valutazione finora

- Polynomial Curve FittingDocumento44 paginePolynomial Curve FittingHector Ledesma IIINessuna valutazione finora

- W Z Is The Adaptive: CSP401 Test #10Documento3 pagineW Z Is The Adaptive: CSP401 Test #10Jason GonzalezNessuna valutazione finora

- Time Series Practice P5Documento4 pagineTime Series Practice P5Aa Hinda no AriaNessuna valutazione finora

- (A) Modeling: 2.3 Models For Binary ResponsesDocumento6 pagine(A) Modeling: 2.3 Models For Binary ResponsesjuntujuntuNessuna valutazione finora

- CEM3005W Formula Sheet For Physical Chemistry of LiquidsDocumento2 pagineCEM3005W Formula Sheet For Physical Chemistry of LiquidsZama MakhathiniNessuna valutazione finora

- Solutions Chapter 9Documento34 pagineSolutions Chapter 9reloadedmemoryNessuna valutazione finora

- Technical Note - Autoregressive ModelDocumento12 pagineTechnical Note - Autoregressive ModelNumXL ProNessuna valutazione finora

- AIEEE MathsDocumento3 pagineAIEEE MathsSk SharukhNessuna valutazione finora

- Error Analysis And: Graph DrawingDocumento26 pagineError Analysis And: Graph DrawingNischay KaushikNessuna valutazione finora

- Non-Linear Methods 4.1. Asymptotic Analysis: 4.1.2. Stochastic RegressorsDocumento73 pagineNon-Linear Methods 4.1. Asymptotic Analysis: 4.1.2. Stochastic RegressorsDNessuna valutazione finora

- Agresti Ordinal TutorialDocumento75 pagineAgresti Ordinal TutorialKen MatsudaNessuna valutazione finora

- MCMC BriefDocumento69 pagineMCMC Briefjakub_gramolNessuna valutazione finora

- R R A S: Quantitative MethodsDocumento9 pagineR R A S: Quantitative MethodsMehmoodulHassanAfzalNessuna valutazione finora

- Chapter 13Documento27 pagineChapter 13vishiwizardNessuna valutazione finora

- Stationary Time SeriesDocumento21 pagineStationary Time SeriesJay AydinNessuna valutazione finora

- Recursive Least Squares Algorithm For Nonlinear Dual-Rate Systems Using Missing-Output Estimation ModelDocumento20 pagineRecursive Least Squares Algorithm For Nonlinear Dual-Rate Systems Using Missing-Output Estimation ModelTín Trần TrungNessuna valutazione finora

- Estabilidad Interna y Entrada-Salida de Sistemas Continuos: Udec - DieDocumento10 pagineEstabilidad Interna y Entrada-Salida de Sistemas Continuos: Udec - DieagustinpinochetNessuna valutazione finora

- Logit Marginal EffectsDocumento12 pagineLogit Marginal Effectsjive_gumelaNessuna valutazione finora

- Least Square FitDocumento16 pagineLeast Square FitMuhammad Awais100% (1)

- MATH 211 - Winter 2013 Lecture Notes: (Adapted by Permission of K. Seyffarth)Documento28 pagineMATH 211 - Winter 2013 Lecture Notes: (Adapted by Permission of K. Seyffarth)cmculhamNessuna valutazione finora

- MAM2085F 2013 Exam SolutionsDocumento8 pagineMAM2085F 2013 Exam Solutionsmoro1992Nessuna valutazione finora

- Quantreg NLRQDocumento7 pagineQuantreg NLRQYuri SantosNessuna valutazione finora

- Bayesian Structural Time Series ModelsDocumento100 pagineBayesian Structural Time Series Modelsquantanglement100% (1)

- Murrar Brauer 2018 MM ANOVADocumento7 pagineMurrar Brauer 2018 MM ANOVAhaleyNessuna valutazione finora

- ADA FormulaDocumento11 pagineADA FormulaDinesh RaghavendraNessuna valutazione finora

- STA 114 SPECIAL TEST 2 - Questions Solution 11 May 2021Documento10 pagineSTA 114 SPECIAL TEST 2 - Questions Solution 11 May 2021Monowalehippie MangaNessuna valutazione finora

- 1 PB PDFDocumento15 pagine1 PB PDFKhotimNessuna valutazione finora

- Driving Pressure and Survival in ARDS-Amato-ESM-NEJM 2015Documento57 pagineDriving Pressure and Survival in ARDS-Amato-ESM-NEJM 2015SumroachNessuna valutazione finora

- Pengaruh Media Iklan Terhadap Pengambilan Keputusan Konsumen Membeli Pasta Gigi PepsodentDocumento11 paginePengaruh Media Iklan Terhadap Pengambilan Keputusan Konsumen Membeli Pasta Gigi PepsodentTRI HARYANINessuna valutazione finora

- ProbStat Course Calendar 4Q1819Documento2 pagineProbStat Course Calendar 4Q1819Raymond LuberiaNessuna valutazione finora

- Exam Research 2 StudentsDocumento7 pagineExam Research 2 StudentsVILLA 2 ANACITA LOCIONNessuna valutazione finora

- Advanced EconometricsDocumento9 pagineAdvanced Econometricsvikrant vardhanNessuna valutazione finora

- Quantiles: A Score Distribution Where The Scores Are Divided IntoDocumento7 pagineQuantiles: A Score Distribution Where The Scores Are Divided IntoCarlo MagnunNessuna valutazione finora

- Lesson 3.5: Post Hoc AnalysisDocumento24 pagineLesson 3.5: Post Hoc AnalysisMar Joseph MarañonNessuna valutazione finora

- MATH 322: Probability and Statistical MethodsDocumento27 pagineMATH 322: Probability and Statistical MethodsAwab AbdelhadiNessuna valutazione finora

- Chapter 9 Between Subjects Design Notes 1Documento3 pagineChapter 9 Between Subjects Design Notes 1Norhaine GadinNessuna valutazione finora

- Analisis Regresi BergandaDocumento6 pagineAnalisis Regresi Bergandarama daniNessuna valutazione finora

- 1 Mean Mode Median PDFDocumento13 pagine1 Mean Mode Median PDFct asiyah100% (2)

- Correlation and RegressionDocumento3 pagineCorrelation and Regressionapi-197545606Nessuna valutazione finora

- CH 13Documento21 pagineCH 13Eriko Timothy Ginting100% (1)

- Study Schedule Topic Learning Outcomes Activities Week 4Documento38 pagineStudy Schedule Topic Learning Outcomes Activities Week 4Sunny EggheadNessuna valutazione finora

- Applied Bayesian Econometrics For Central Bankers Updated 2017 PDFDocumento222 pagineApplied Bayesian Econometrics For Central Bankers Updated 2017 PDFimad el badisyNessuna valutazione finora

- Package Sdaa': R Topics DocumentedDocumento16 paginePackage Sdaa': R Topics DocumentedLuis Antonio RuizNessuna valutazione finora

- Assessment Test in PR 2Documento15 pagineAssessment Test in PR 2Den Gesiefer LazaroNessuna valutazione finora

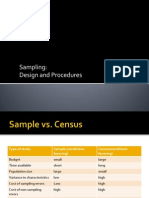

- Sampling: Design and ProceduresDocumento18 pagineSampling: Design and ProceduresKamil BandayNessuna valutazione finora

- Linear Regression Questions AnswersDocumento6 pagineLinear Regression Questions AnswersAndile MhlungwaneNessuna valutazione finora