Applications: 1. Engineers at IBM's Almaden Research Center in San Jose, CA, Report That a Number of Large

Uploaded by Deepak Attri

BlueEyes and Its Applications BlueEyes is the name of a human recognition venture initiated by IBM to allow people to interact

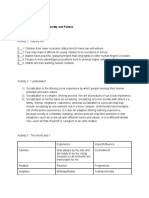

with computers in a more natural manner. The technology aims to enable devices to recognize and use natural input, such as facial expressions. The initial developments of this project include scroll mice and other input devices that sense the user's pulse, monitor his or her facial expressions, and the movement of his or her eyelids. I have prepared a 4-page handout on BlueEyes and Its Business Applications from several cited sources. BlueEyes and Its Applications Animal survival depends on highly developed sensory abilities. Likewise, human cognition depends on highly developed abilities to perceive, integrate, and interpret visual, auditory, and touch information. Without a doubt, computers would be much more powerful if they had even a small fraction of the perceptual ability of animals or humans. Adding such perceptual abilities to computers would enable computers and humans to work together more as partners. Toward this end, the BlueEyes project aims at creating computational devices with the sort of perceptual abilities that people take for granted. How can we make computers "see" and "feel"? BlueEyes uses sensing technology to identify a user's actions and to extract key information. This information is then analyzed to determine the user's physical, emotional, or informational state, which in turn can be used to help make the user more productive by performing expected actions or by providing expected information. For example, a BlueEyes-enabled television could become active when the user makes eye contact, at which point the user could then tell the television to "turn on". Most of us hardly notice the surveillance cameras watching over the grocery store or the bank. But lately those lenses have been looking for far more than shoplifters. 
Applications:

1. Engineers at IBM's Almaden Research Center in San Jose, CA, report that a number of large retailers have implemented surveillance systems that record and interpret customer movements, using software from Almaden's BlueEyes research project. BlueEyes is developing ways for computers to anticipate users' wants by gathering video data on eye movement and facial expression. Your gaze might rest on a Web site heading, for example, and that would prompt your computer to find similar links and to call them up in a new window. But the first practical use for the research turns out to be snooping on shoppers. BlueEyes software makes sense of what the cameras see to answer key questions for retailers, including: How many shoppers ignored a promotion? How many stopped? How long did they stay? Did their faces register boredom or delight? How many reached for the item and put it in their shopping carts? BlueEyes works by tracking pupil, eyebrow, and mouth movement. When monitoring pupils, the system uses a camera and two infrared light sources placed inside the product display. One light source is aligned with the camera's focus; the other is slightly off axis. When the eye looks into the camera-aligned light, the pupil appears bright to the sensor, and the software registers the customer's attention. This is the way it captures the person's income and buying preferences. BlueEyes is actively being incorporated in some of the leading retail outlets.

2. Another application would be in the automobile industry. The system is designed to determine a person's emotional state when he or she simply touches a computer input device such as a mouse. For cars, it could help with critical decisions, for example: "I know you want to get into the fast lane, but I'm afraid I can't do that. You're too upset right now." It could thereby assist in driving safely.

3. Current interfaces between computers and humans can present information vividly, but have no sense of whether that information is ever viewed or understood. In contrast, new real-time computer vision techniques for perceiving people allow us to create "Face-responsive Displays" and "Perceptive Environments", which can sense and respond to the users who are viewing them. Using stereo-vision techniques, we are able to detect, track, and identify users robustly and in real time. This information can make spoken language interfaces more robust, by selecting the acoustic information from a visually localized source. Environments can become aware of how many people are present and what activity is occurring, and therefore what display or messaging modalities are most appropriate to use in the current situation. The results of our research will allow the interface between computers and human users to become more natural and intuitive.

4. We could also see its use in video games, where it could give individual challenges to the customers playing them, typically targeting commercial businesses. The integration of children's toys, technologies, and computers is enabling new play experiences that were not commercially feasible until recently. The Intel Play QX3 Computer Microscope, the Me2Cam with Fun Fair, and the Computer Sound Morpher are commercially available smart toy products developed by the Intel Smart Toy Lab. One theme that is common across these PC-connected toys is that users interact with them using a combination of visual, audible, and tactile input and output modalities. The presentation will provide an overview of the interaction design of these products and pose some unique challenges faced by designers and engineers of such experiences targeted at novice computer users, namely young children.

5. The familiar and useful come from things we recognize. The appearance of many of our favorite things communicates their use; they show the change in their value through patina. As technologists we are now poised to imagine a world where computing objects communicate with us in situ, where we are. We use our looks, feelings, and actions to give the computer the experience it needs to work with us. Keyboards and mice will not continue to dominate computer user interfaces. Keyboard input will be replaced in large measure by systems that know what we want and require less explicit communication. Sensors are gaining fidelity and ubiquity to record presence and actions; sensors will notice when we enter a space, sit down, lie down, pump iron, etc. Pervasive infrastructure is recording it. This talk will cover projects from the Context Aware Computing Group at MIT Media Lab.
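The pupil-monitoring setup described in application 1 (one infrared source aligned with the camera, one slightly off axis) can be illustrated with a small sketch. The handout does not say how the software compares the two lighting conditions, so the frame subtraction below is an assumption, as are the function names, the grayscale frame representation, and the threshold value. It is a minimal illustration of the idea, not the BlueEyes implementation.

```python
from typing import List, Optional, Tuple

# Hypothetical frame format: a grayscale image as rows of 0-255 intensities.
Frame = List[List[int]]


def find_bright_pupil(on_axis: Frame, off_axis: Frame,
                      threshold: int = 60) -> Optional[Tuple[float, float]]:
    """Locate a bright pupil by comparing two frames of the same scene.

    on_axis  -- frame lit by the camera-aligned infrared source
    off_axis -- frame lit by the off-axis infrared source
    Returns the (row, col) centroid of the pixels that are more than
    `threshold` brighter in the on-axis frame, or None if there are none.
    The subtraction step and the threshold are assumptions for illustration.
    """
    if len(on_axis) != len(off_axis):
        raise ValueError("frames must have the same height")
    row_sum = col_sum = count = 0
    for r, (row_on, row_off) in enumerate(zip(on_axis, off_axis)):
        if len(row_on) != len(row_off):
            raise ValueError("frames must have the same width")
        for c, (lit, ref) in enumerate(zip(row_on, row_off)):
            if lit - ref > threshold:
                row_sum += r
                col_sum += c
                count += 1
    if count == 0:
        return None
    return (row_sum / count, col_sum / count)


def attention_registered(on_axis: Frame, off_axis: Frame,
                         threshold: int = 60) -> bool:
    """Treat any detected bright pupil as the shopper looking at the display."""
    return find_bright_pupil(on_axis, off_axis, threshold) is not None


if __name__ == "__main__":
    # Synthetic 4x4 frames: uniform background, with a 2x2 "pupil" that is
    # bright only under the camera-aligned light.
    off = [[40] * 4 for _ in range(4)]
    on = [row[:] for row in off]
    for r in (1, 2):
        for c in (1, 2):
            on[r][c] = 200
    print(find_bright_pupil(on, off))     # (1.5, 1.5)
    print(attention_registered(off, off))  # False
```

Subtracting the two frames cancels everything that looks the same under both lights, so only the region that brightens under the camera-aligned source remains. A real system would also have to cope with noise, head motion between frames, and more than one face.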
