
Using Intel VTune Performance Analyzer to Optimize Software on Intel Core i7 Processors


Contents

Introduction
Intel Core i7 Processor Features
Intel Core i7 Processor Performance Monitoring Unit
Complexities of Performance Measurement
The Software Optimization Process
Step 1: Identify the Hotspots
Step 2: Determine Efficiency
  Efficiency Method 1: % Execution Stalled
  Efficiency Method 2: Changes in Cycles per Instruction (CPI)
  Efficiency Method 3: Code Examination
  Code Examination Issue 1: Failure to Use Intel SSE Instructions for Floating Point
  Code Examination Issue 2: Failure to Use New Intel SSE4.2 Instructions
Step 3: Identify Architectural Reason for Inefficiency
  Cache Misses
  Branch Mispredicts
  Front-End Stalls
  Address Aliasing
    4K False Store Forwarding
    Set Associative Cache Issue
    Memory Bank Issues
  Long Latency Instructions and Exceptions
  DTLB Misses
For More Information


Introduction

This article is designed for developers working on an Intel Core i7 platform and using Intel VTune Performance Analyzer. Software optimization should begin after you have:
- Used any applicable compiler optimization options (/O2, /QxSSE4.2, etc.)
- Chosen an appropriate workload
- Measured baseline performance

If you are new to Intel VTune analyzer, see the companion article, Intel VTune Performance Analyzer How-To Guide. The performance information here also applies to other tools, such as the Intel Performance Tuning Utility (PTU), but it focuses on Intel VTune analyzer.

Intel Core i7 Processor Features

The Intel Core i7 processor provides the following features:
- Integrated Memory Controller
- Intel Hyper-Threading Technology
- Intel Turbo Boost Technology
- Intel Smart Memory Access
- Intel QuickPath Interconnect
- 8 MB Intel Smart Cache
- Intel Wide Dynamic Execution
- Intel HD Boost
- Quad-core processing
- Digital thermal sensor
- New performance monitoring unit

Notes: The following features greatly affect performance monitoring:
- The new performance monitoring unit (new counters, new meanings)
- Intel Wide Dynamic Execution (including the out-of-order (OOO) capabilities of the processor): adds complexity to performance analysis
- Intel Hyper-Threading Technology: adds complexity to performance analysis. If you can, it is easier to turn it off during performance analysis.
- Intel Turbo Boost Technology: adds complexity to performance analysis. If you can, it is easier to turn it off during performance analysis.
- The Integrated Memory Controller, Intel Smart Memory Access, Intel QuickPath Interconnect (QPI), and 8 MB Intel Smart Cache: add more things that can, or need to, be measured on Nehalem (the microarchitecture of the Intel Core i7 processor)

Intel Core i7 Processor Performance Monitoring Unit

The event names change for each processor, but the performance analysis concepts stay the same.
- Measure 7 performance events at a time:
  - 4 general purpose performance counters
  - 3 fixed function performance counters
- Measure more events than ever before:
  - Hundreds of different things can now be measured
  - Only a few are commonly required for performance insight
- Measure core and uncore events
- Gather more information for certain events, e.g., load latency

Notes: The 3 fixed function performance counters are INST_RETIRED.ANY, CPU_CLK_UNHALTED.THREAD, and CPU_CLK_UNHALTED.REF. Each of these counters has a corresponding programmable version (which uses a general purpose counter): INST_RETIRED.ANY_P, CPU_CLK_UNHALTED.THREAD_P, and CPU_CLK_UNHALTED.REF_P. Intel VTune analyzer does not support the new capability to measure uncore events. It also does not support the additional information gathered when measuring certain events (e.g., load latency in the PEBS record).


Complexities of Performance Measurement

Two features of the Intel Core i7 processor have a significant effect on performance measurement:
- Intel Hyper-Threading Technology
- Intel Turbo Boost Technology

With these features enabled, it is more complex to measure and interpret performance data:
- Most events are counted per thread, some events per core
- See Intel VTune Help for specific events

Some experts prefer to analyze performance with these features disabled, then re-enable them once optimizations are complete.

Notes: Both Intel Hyper-Threading Technology and Intel Turbo Boost Technology can be enabled or disabled through the BIOS on most platforms. Contact the system vendor or manufacturer for the specifics of your platform before attempting this. Incorrectly modifying BIOS settings from those supplied by the manufacturer can render the system completely unusable and may void the warranty. Don't forget to re-enable these features once you are through with the software optimization process.

Step 1: Identify the Hotspots

What: Hotspots are where your application spends the most time.
Why: You should aim your optimization efforts there.
- Why improve a function that only takes 2% of your application's runtime?
How: Intel VTune analyzer Basic Sampling Wizard
- Usually hotspots are defined in terms of the CPU_CLK_UNHALTED.THREAD event (aka clockticks)

Notes: For Intel Core i7 processors, the CPU_CLK_UNHALTED.THREAD counter measures unhalted clockticks on a per thread basis. So for each tick of the CPU's clock, the counter will count 2 ticks (one per logical thread) if Hyper-Threading is enabled, and 1 tick if Hyper-Threading is disabled. There is no per-core clocktick counter. There is also a CPU_CLK_UNHALTED.REF counter, which counts unhalted clockticks per thread at the reference frequency of the CPU. In other words, the CPU_CLK_UNHALTED.REF count should not increase or decrease as a result of frequency changes due to Turbo Mode or Intel SpeedStep Technology.
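The following is a minimal C sketch of Step 1. It assumes you have already exported per-function CPU_CLK_UNHALTED.THREAD sample counts from a sampling run. The FunctionSamples struct and the function names are hypothetical and are not a VTune API. The sketch ranks functions by clockticks and reports how many of the top functions cover a chosen share of total time. It also compares the THREAD and REF clocktick counts described in the notes above, to show whether the core ran above or below its reference frequency.

```c
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical record: one function and its CPU_CLK_UNHALTED.THREAD samples. */
typedef struct {
    const char *name;
    unsigned long long clockticks;
} FunctionSamples;

/* qsort comparator: sort by clockticks, largest first. */
static int by_clockticks_desc(const void *lhs, const void *rhs) {
    const FunctionSamples *a = lhs;
    const FunctionSamples *b = rhs;
    if (a->clockticks < b->clockticks) return 1;
    if (a->clockticks > b->clockticks) return -1;
    return 0;
}

/* Sorts funcs in place (hottest first) and returns how many of the top
   functions are needed to reach coverage_pct percent of all clockticks.
   Those functions are the hotspots worth optimizing. */
size_t find_hotspots(FunctionSamples *funcs, size_t n, double coverage_pct) {
    unsigned long long total = 0;
    for (size_t i = 0; i < n; i++) total += funcs[i].clockticks;
    if (total == 0) return 0;

    qsort(funcs, n, sizeof funcs[0], by_clockticks_desc);

    unsigned long long running = 0;
    for (size_t i = 0; i < n; i++) {
        running += funcs[i].clockticks;
        if (100.0 * (double)running / (double)total >= coverage_pct) return i + 1;
    }
    return n;
}

/* Ratio of CPU_CLK_UNHALTED.THREAD to CPU_CLK_UNHALTED.REF for the same run.
   Assuming REF ticks at the nominal frequency, a ratio above 1.0 suggests
   Turbo Mode raised the frequency; below 1.0 suggests SpeedStep lowered it. */
double unhalted_frequency_ratio(unsigned long long thread_clk,
                                unsigned long long ref_clk) {
    return ref_clk ? (double)thread_clk / (double)ref_clk : 0.0;
}
```

Take three functions with 700, 200, and 100 samples and an 85% target: find_hotspots returns 2, because the top two functions account for 90% of the clockticks. Optimization effort then goes into those two.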

Step 2: Determine Efficiency

Determine the efficiency of the hotspot using one of three methods:
- % execution stalled
- Changes in CPI
- Code examination

Notes: As you'll see throughout this article, some of these methods are more appropriate for certain code than others.

The Software Optimization Process

1. Identify hotspots
   - Determine the efficiency of the hotspots
   - If inefficient, identify the architectural reason for the inefficiency
2. Optimize the issue
3. Repeat from step 1

The % execution stalled and changes in CPI methods rely on Intel VTune analyzer's sampling. The code examination method relies on Intel VTune analyzer's capability as a source/disassembly viewer.

Notes: Although Intel VTune analyzer can also help with threading an application, this discussion does not cover the process of introducing threads. The process described here could be used either before or after threading. Intel Thread Profiler is another performance analysis tool included with Intel VTune Performance Analyzer for Windows* that can help determine the efficiency of a parallel algorithm. Remember to focus only on hotspots: only try to determine efficiency, identify causes, and optimize within hotspots.

Efficiency Method 1: % Execution Stalled

Why: Helps you understand how efficiently your application is using the processor
How: (UOPS_EXECUTED.CORE_STALL_CYCLES / (UOPS_EXECUTED.CORE_ACTIVE_CYCLES + UOPS_EXECUTED.CORE_STALL_CYCLES)) * 100
Next:
- < 10% stall cycles is good: focus on code reduction
- 10% to 50% for client applications: worth investigating stall reduction
- 50% to 80% for server applications: worth investigating stall reduction

Notes: The UOPS_EXECUTED.CORE_STALL_CYCLES counter measures when the EXECUTION stage of the pipeline is stalled. This counter counts per core, not per thread. This matters when you do performance analysis with Intel Hyper-Threading Technology enabled. For example, with Intel Hyper-Threading Technology enabled, you won't be able to tell whether the stall cycles are evenly distributed across the threads or occur on just one thread. For this reason, it can be easier to interpret performance data taken while Intel Hyper-Threading Technology is disabled. (Be sure to re-enable it after optimizations are completed.)

CPI appears doubled when Intel Hyper-Threading Technology is in use. With it enabled, there are actually two different definitions of CPI, which we call Per Thread CPI and Per Core CPI. The Per Thread CPI will be twice the Per Core CPI. Only convert between Per Thread and Per Core CPI when viewing aggregate CPIs (summed over all logical threads).

Optimized code (e.g., code using SSE instructions) may actually raise the CPI and increase the stall percentage, yet still improve performance. CPI and stalls provide general guidance on efficiency. The real measure of efficiency is work taking less time, and work is not something the CPU can measure.
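The Method 1 formula and the Per Thread versus Per Core CPI relationship from the notes above can be sketched in C as follows. Several assumptions apply. The inputs are raw event counts you have already collected, and the helper names are hypothetical. The per-core conversion assumes both logical threads of each core were unhalted, so each core cycle was counted once per thread.

```c
/* % execution stalled (Method 1). Both events count per core. */
double pct_execution_stalled(unsigned long long stall_cycles,
                             unsigned long long active_cycles) {
    unsigned long long total = stall_cycles + active_cycles;
    return total ? 100.0 * (double)stall_cycles / (double)total : 0.0;
}

/* Rule of thumb from the text: below 10%, focus on code reduction.
   From 10% up (10-50% is typical for client applications, 50-80% for
   server applications), stall reduction is worth investigating. */
int stall_reduction_worth_investigating(double pct_stalled) {
    return pct_stalled >= 10.0;
}

/* Aggregate Per Thread CPI: CPU_CLK_UNHALTED.THREAD and INST_RETIRED.ANY
   summed over all logical threads. */
double per_thread_cpi(unsigned long long sum_thread_clk,
                      unsigned long long sum_instructions) {
    return sum_instructions ? (double)sum_thread_clk / (double)sum_instructions : 0.0;
}

/* Aggregate Per Core CPI: each core cycle was counted once per logical
   thread, so divide by threads_per_core (2 with Hyper-Threading, 1 without). */
double per_core_cpi(unsigned long long sum_thread_clk,
                    unsigned long long sum_instructions,
                    unsigned threads_per_core) {
    if (threads_per_core == 0) return 0.0;
    return per_thread_cpi(sum_thread_clk, sum_instructions) / (double)threads_per_core;
}
```

With Hyper-Threading enabled, 4000 summed thread clockticks over 2000 instructions give a Per Thread CPI of 2.0 and a Per Core CPI of 1.0. This matches the factor of two described above.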

Efficiency Method 2: Changes in Cycles per Instruction (CPI)

Why: Another measure of efficiency that can be useful when comparing two runs
- Shows the average time it takes one of your workload's instructions to execute
How: CPU_CLK_UNHALTED.THREAD / INST_RETIRED.ANY (also calculated automatically in the hotspots view)
Next:
- CPI can vary widely depending on the application and platform
- If code size stays constant, optimizations should focus on reducing CPI

Notes: CPI is a ratio of cycles per instruction, so if the code size (the number of instructions executed) changes for a binary, CPI will change. In general, if CPI decreases as a result of optimizations, that is good, and if it increases, that is bad. However, there are exceptions. Some code can have a very low CPI but still be inefficient because it executes more instructions than needed. The Code Examination method for determining efficiency addresses this problem.

Efficiency Method 3: Code Examination

Why: Methods 1 and 2 measure how long it takes instructions to execute. The other type of inefficiency is executing too many instructions.
How: Use Intel VTune analyzer's capability as a source and disassembly viewer
Next:
- Failure to use modern instructions results in larger code size
- See the code examination issues below

Notes: This method involves looking at the disassembly to make sure the compiler generates the most efficient instruction streams. This can be complex and can require expert knowledge of the Intel instruction set and compiler technology. Next, we'll look at how to find the two easiest issues to detect and fix.


Code Examination Issue 1: Failure to Use Intel SSE Instructions for Floating Point

Why: Using p(acked) Intel Streaming SIMD Extensions (SSE, SSE2, SSE3, SSSE3, SSE4.1, and SSE4.2) instructions has the potential to greatly increase floating point performance. Compilers and libraries may not use the latest instructions by default.
How: Drill down to Source View on floating point loops and examine the assembly.
Next: Look for floating point math in the assembly that does not use p(acked) instructions with xmm# or rmm# registers, for example scalar addss where packed addps could be used.
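Here is a minimal C sketch of the same floating point loop written two ways. Without auto-vectorization, a compiler typically turns add_scalar into one scalar addss per element (or an x87 fadd on older 32-bit targets). add_packed uses the standard SSE intrinsics _mm_loadu_ps, _mm_add_ps, and _mm_storeu_ps to add four floats per packed addps. The function names are hypothetical. The preprocessor guard assumes SSE is available on x86 targets and falls back to the scalar loop elsewhere.

```c
#include <stddef.h>

/* SSE is baseline on x86-64: GCC/Clang define __SSE__, MSVC defines _M_X64. */
#if defined(__SSE__) || defined(_M_X64)
#include <xmmintrin.h>
#define HAVE_SSE 1
#endif

/* Scalar loop: one addition per iteration. */
void add_scalar(float *dst, const float *a, const float *b, size_t n) {
    for (size_t i = 0; i < n; i++) dst[i] = a[i] + b[i];
}

/* Packed loop: four additions per addps. The unaligned load/store
   intrinsics (movups) work whether or not the arrays are 16-byte aligned. */
void add_packed(float *dst, const float *a, const float *b, size_t n) {
    size_t i = 0;
#ifdef HAVE_SSE
    for (; i + 4 <= n; i += 4) {
        __m128 va = _mm_loadu_ps(a + i);
        __m128 vb = _mm_loadu_ps(b + i);
        _mm_storeu_ps(dst + i, _mm_add_ps(va, vb));
    }
#endif
    /* Leftover elements (and the non-SSE fallback) are added one at a time. */
    for (; i < n; i++) dst[i] = a[i] + b[i];
}
```

Viewing both functions in the disassembly should typically show addss in the first loop and addps in the second, unless the compiler auto-vectorizes the scalar version. That difference is exactly the pattern to look for in Source View.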

Notes: addss is a s(calar) Intel SSE instruction. It is better than x87 instructions, but not as good as packed SSE instructions. Intel SSE included movups (and SSE2 added movupd). On previous IA-32 and Intel 64 processors, these unaligned loads were not the best way to load data from memory. On Intel Core i7 processors, movups and movupd are as fast as movaps and movapd on aligned data. So, when the Intel Compiler uses /QxSSE4.2, it removes the if condition that checks for aligned data. This speeds up loops and reduces the number of instructions needed.

The solutions for Code Examination Issues 1 and 2 are the same. They are listed under Issue 2.

Code Examination Issue 2: Failure to Use New Intel SSE4.2 Instructions

Why: New Intel Streaming SIMD Extensions (SSE4.2) instructions can optimize XML parsing, string parsing/searching, encryption/decryption, CRC checksums, iSCSI, RDMA, and bit-pattern algorithms.
How: If the functionality is in the domain of Intel SSE4.2, drill down to Source View and examine the assembly for Intel SSE4.2 code. Such code uses xmm# or rmm# registers and one of the following instructions:
- PCMPISTRI
- PCMPESTRI
- PCMPISTRM
- PCMPESTRM
- CRC32
- POPCNT
- PCMPGTQ
Next: If Intel SSE4.2 is not used, try any of the following:
- Libraries, e.g., Intel Integrated Performance Primitives (Intel IPP) and/or Intel Math Kernel Library (Intel MKL)
- Intel Compiler 11.0: use /QxSSE4.2 (Linux: -xSSE4.2). Note: some LIBM and CRT library functions have been enhanced in the 11.0 compiler to use Intel SSE4.2 (strlen, for example).
- Switches for GCC (-msse4.2) or the Microsoft compiler (/arch:SSE2)
- Rewrite in assembly/intrinsics

Step 3: Identify Architectural Reason for Inefficiency

If Method 1 or 2 shows that the code is inefficient, investigate potential issues in order of likelihood:
- Cache Misses
- Branch Mispredicts
- Front-End Stalls
- Address Aliasing
- Long Latency Instructions and Exceptions
- DTLB Misses

Notes: These are issues that result in stall cycles and high CPI. It is important to go through them in order, because the most likely problem areas are presented first.


Cache Misses

Why: Cache misses raise the CPI of an application
- Focus on long latency data accesses coming from second and third level misses
How: MEM_LOAD_RETIRED.LLC_MISS, MEM_LOAD_RETIRED.LLC_UNSHARED_HIT, MEM_LOAD_RETIRED.OTHER_CORE_L2_HIT_HITM
Next:
- Within a hotspot, estimate the % of cycles due to long latency data access:
  For third level misses: ((MEM_LOAD_RETIRED.LLC_MISS * 180) / CPU_CLK_UNHALTED.THREAD) * 100
  For second level misses: (((MEM_LOAD_RETIRED.LLC_UNSHARED_HIT * 35) + (MEM_LOAD_RETIRED.OTHER_CORE_L2_HIT_HITM * 74)) / CPU_CLK_UNHALTED.THREAD) * 100
- If the percentage is significant (> 20%), consider reducing misses. Options include software prefetch instructions, data blocking, local variables for threads, padding data structures to cache line boundaries, or changing your algorithm to reduce data storage.
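The two estimates above translate directly into code. The sketch below applies the text's approximate latency weights to event counts taken within a hotspot: about 180 cycles per LLC miss, 35 per unshared LLC hit, and 74 per hit-modified in another core's L2. The function names are hypothetical. The weights are rough estimates, not measured latencies for your system.

```c
/* Approximate cycle costs used by the estimates in the text. */
enum {
    LLC_MISS_CYCLES = 180,
    LLC_UNSHARED_HIT_CYCLES = 35,
    OTHER_CORE_L2_HITM_CYCLES = 74
};

/* Estimated % of cycles spent on third level (LLC) misses. */
double pct_cycles_llc_miss(unsigned long long llc_miss,
                           unsigned long long clk) {
    if (clk == 0) return 0.0;
    return 100.0 * (double)(llc_miss * LLC_MISS_CYCLES) / (double)clk;
}

/* Estimated % of cycles spent on second level misses that were served
   by the LLC or by another core's L2. */
double pct_cycles_l2_miss(unsigned long long llc_unshared_hit,
                          unsigned long long other_core_l2_hitm,
                          unsigned long long clk) {
    if (clk == 0) return 0.0;
    unsigned long long cycles = llc_unshared_hit * LLC_UNSHARED_HIT_CYCLES
                              + other_core_l2_hitm * OTHER_CORE_L2_HITM_CYCLES;
    return 100.0 * (double)cycles / (double)clk;
}

/* The text treats more than 20% as significant. */
int cache_misses_significant(double pct_cycles) {
    return pct_cycles > 20.0;
}
```

For example, 2000 LLC misses in a hotspot of 900,000 clockticks give an estimate of 40% of cycles. That is well past the 20% threshold, so reducing misses in that hotspot is worth the effort.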

Branch Mispredicts

Why: Mispredicted branches cause pipeline inefficiencies due to wasted work or instruction starvation (while waiting for new instructions to be fetched)
How: UOPS_ISSUED.ANY, UOPS_RETIRED.ANY, UOPS_ISSUED.CORE_STALL_CYCLES, RESOURCE_STALLS.ANY, BR_INST_EXEC.ANY, BR_MISP_EXEC.ANY
Next:
- Wasted work = (UOPS_ISSUED.ANY / UOPS_RETIRED.ANY) - 1: the fraction of execution that was wasted (due to mispredictions)
- Instruction starvation = (UOPS_ISSUED.CORE_STALL_CYCLES - RESOURCE_STALLS.ANY) / CPU_CLK_UNHALTED.THREAD
- If you have significant wasted work (> 0.1) or instruction starvation (> 0.1), examine the branch misprediction percentage: (BR_MISP_EXEC.ANY / BR_INST_EXEC.ANY) * 100
- Try to reduce the misprediction impact with better code generation (compiler, profile-guided optimizations, or hand tuning)

Notes: All applications will have some branch mispredicts. Up to 10% of branches being mispredicted could be considered normal, depending on the application, but anything above 10% should be investigated. With branch mispredicts, the problem is not the number of mispredicts but their impact. The equations above show how to determine the impact of the mispredicts on the front end (instruction starvation). They also show the impact on the back end (wasted execution cycles given to wrongly speculated code).

Front-End Stalls

Why: Front-end stalls (at the issue stage of the pipeline) may cause instruction starvation. This may lead to stalls at the execute stage of the pipeline.
How: RESOURCE_STALLS.ANY, UOPS_ISSUED.CORE_STALL_CYCLES
Next:
- If (UOPS_ISSUED.CORE_STALL_CYCLES - RESOURCE_STALLS.ANY) / CPU_CLK_UNHALTED.THREAD > 0.1, you have significant instruction starvation
- This category of stalls can be fixed with better code generation and layout techniques:
  - Try using the /QxSSE4.2 switch on the Intel Compiler
  - Try using profile-guided optimizations with your compiler
  - Use linker ordering techniques (/ORDER on Microsoft's linker or a linker script with gcc)
  - Use switches that reduce code size, such as /O1 or /Os

Notes: The pipeline of the Intel Core i7 processor consists of many stages. Some stages are collectively referred to as the front end: these are responsible for fetching, decoding, and issuing instructions and micro-operations. The back end contains the stages responsible for dispatching, executing, and retiring instructions and micro-operations. These two parts of the pipeline are decoupled and separated by a buffer (in fact, buffering is used throughout the pipeline). So when the front end is stalled, the back end is NOT necessarily stalled. Front-end stalls are a problem when they cause instruction starvation, meaning no micro-operations are being issued to the back end. If instruction starvation lasts long enough, the back end may stall.

Address Aliasing

Why: Multiple accesses to data that is a large power of two (2^N, for example 4K) apart result in inefficiencies that show up in several ways, such as:
- Set associative cache conflicts
- 4K false store forwarding
How: PARTIAL_ADDRESS_ALIAS
Next:
- ((PARTIAL_ADDRESS_ALIAS * 3) / CPU_CLK_UNHALTED.THREAD) * 100 tells you the percentage of execution that was wasted (due to 4K false store forwarding)
- If the impact is significant (> 10%), change the layout of the data, as the aliasing is also likely to cause significant extra data cache misses
- Change the data layout or data access patterns so that your software isn't skipping through data in large 2^N strides

Notes: This counter only measures 4K false store forwarding. If 4K false store forwarding is detected, though, your code is probably also affected by set associative cache issues, and potentially even memory bank issues. All of these result in more cache misses and slower data access.


4K False Store Forwarding: To speed up processing of stores and loads, the CPU uses a subset of the address bits to determine whether the store forwarding optimization can occur. If those bits are the same, it starts to process the load. Later, it fully resolves the addresses and determines that the load is not from the same address as the store. The processor then needs to fetch the appropriate data.

Set Associative Cache Issue: Set associative caches are organized into sets, each holding a fixed number of entries (ways). If data elements in memory are 2^N apart such that they fall in the same set, each one takes an entry in that set. On a 4-way set associative cache, the fifth access that maps to the same set will force one of the other entries out of the cache. This turns a large multi-KB/MB cache into a cache with only as many entries as its associativity. Level one, two, and three caches of different processor SKUs have different cache sizes, line sizes, and associativity. Skipping through memory at exactly 2^N boundaries (2K, 4K, 16K, 256K) will cause the cache to evict entries more quickly. The exact size depends on the processor and the cache.

Memory Bank Issues: Typically (and for best performance), computers are installed with multiple DIMMs for RAM, because no single DIMM can keep up with requests from the CPU. The system determines which data goes to which DIMM via some bits in the address. Skipping through memory in large 2^N strides may cause all or most of the accesses to hit the same DIMM.
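The threshold checks from the Branch Mispredicts, Front-End Stalls, and Address Aliasing sections above can be collected into a few C helpers, sketched below. The names are hypothetical. The wasted-work helper assumes the reading (UOPS_ISSUED.ANY / UOPS_RETIRED.ANY) - 1. same_4k_offset is not a counter-based measurement. It only illustrates the address pattern behind 4K false store forwarding: two addresses that share their low 12 bits.

```c
#include <stdint.h>

/* Issued uops that never retired, relative to retired uops (wasted work). */
double wasted_work(unsigned long long uops_issued,
                   unsigned long long uops_retired) {
    if (uops_retired == 0) return 0.0;
    return (double)uops_issued / (double)uops_retired - 1.0;
}

/* Issue-stall cycles not explained by back-end resource stalls, as a
   fraction of all cycles. Clamped at 0 when resource stalls dominate. */
double instruction_starvation(unsigned long long issue_stall_cycles,
                              unsigned long long resource_stalls,
                              unsigned long long clk) {
    if (clk == 0 || issue_stall_cycles <= resource_stalls) return 0.0;
    return (double)(issue_stall_cycles - resource_stalls) / (double)clk;
}

/* Branch misprediction percentage; up to about 10% may be normal. */
double branch_mispredict_pct(unsigned long long mispredicted,
                             unsigned long long branches) {
    return branches ? 100.0 * (double)mispredicted / (double)branches : 0.0;
}

/* Estimated % of cycles lost to 4K false store forwarding, using the
   text's weight of 3 cycles per PARTIAL_ADDRESS_ALIAS event. */
double pct_4k_aliasing(unsigned long long partial_address_alias,
                       unsigned long long clk) {
    return clk ? 100.0 * (double)(partial_address_alias * 3ULL) / (double)clk : 0.0;
}

/* Nonzero when two addresses have the same offset within a 4 KB page,
   i.e. their low 12 bits match. */
int same_4k_offset(const void *p, const void *q) {
    return (((uintptr_t)p ^ (uintptr_t)q) & 0xFFFu) == 0;
}
```

Two arrays placed exactly 4096 bytes apart satisfy same_4k_offset at every index, which is the kind of layout the Next steps suggest changing. Shifting one array by an extra cache line (64 bytes) breaks the match.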

Long Latency Instructions and Exceptions

Why: If these kinds of events/instructions are causing a significant impact, altering the code may yield more performance
How: UOPS_DECODED.MS, UOPS_RETIRED.ANY
Next:
- If (UOPS_DECODED.MS / UOPS_RETIRED.ANY) * 100 > 50%, the impact is significant
- Now examine the code for potential exceptions or long latency instructions
- Common exceptions to check for include:
  - Denormals/overflow in floating point code. To fix this, enable FTZ (flush-to-zero) and/or DAZ (denormals-are-zero) if using SSE instructions, or scale your results up or down depending on the problem
  - Floating point division by zero (precondition via the denominator)

Notes: FP_ASSIST.ALL: It would be nice to use this counter, but it only works for x87 code (some of the manuals incorrectly state that this event works on SSE code). UOPS_DECODED.MS: Counts the number of uops decoded by the Microcode Sequencer (MS). The MS delivers uops when an instruction is more than 4 uops long or when a microcode assist is occurring. There is no definitive public list of events/instructions that will cause this to fire. If UOPS_DECODED.MS is a high percentage, try to deduce why the processor is issuing extra instructions or causing an exception in your code. One uop does not necessarily equal one cycle, but the result is close enough to determine whether it is affecting your overall time.

DTLB Misses

Why: DTLB load misses that require a page walk can affect your application's performance
How: DTLB_LOAD_MISSES.WALK_COMPLETED
Next:
- Estimate the impact of TLB misses on your application: ((DTLB_LOAD_MISSES.WALK_COMPLETED * 30) / CPU_CLK_UNHALTED.THREAD) * 100
- If the impact is significant (> 5-10%), optimize functions with high DTLB misses
- Possible optimizations: target data locality to the TLB size, use Extended Page Tables (EPT) on virtualized systems, try large pages (database/server applications only)

Notes: You can target data locality to the TLB size through data blocking and by minimizing random access patterns. DTLB misses are more likely to occur with server applications or applications with a large, random dataset.
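The Long Latency and DTLB checks above can be sketched in C as follows. Two hypothetical helpers apply the text's formulas to raw counts. A third, enable_ftz_daz, uses the standard SSE macros _MM_SET_FLUSH_ZERO_MODE and _MM_SET_DENORMALS_ZERO_MODE to turn on FTZ and DAZ for the calling thread. The sketch assumes an x86-64 target where float math uses SSE. On other targets the function does nothing and returns 0.

```c
/* x86-64 always has SSE2, and float math uses it by default. */
#if defined(__x86_64__) || defined(_M_X64)
#include <xmmintrin.h>  /* _MM_SET_FLUSH_ZERO_MODE */
#include <pmmintrin.h>  /* _MM_SET_DENORMALS_ZERO_MODE */
#define HAVE_MXCSR 1
#endif

/* % of uops delivered by the Microcode Sequencer; > 50% is significant. */
double pct_ms_uops(unsigned long long uops_decoded_ms,
                   unsigned long long uops_retired) {
    return uops_retired ? 100.0 * (double)uops_decoded_ms / (double)uops_retired : 0.0;
}

/* Estimated % of cycles spent in completed DTLB page walks,
   using the text's weight of about 30 cycles per walk. */
double pct_cycles_dtlb_walks(unsigned long long walks_completed,
                             unsigned long long clk) {
    return clk ? 100.0 * (double)(walks_completed * 30ULL) / (double)clk : 0.0;
}

/* Sets FTZ and DAZ in this thread's MXCSR so SSE math treats denormal
   results and inputs as zero. Returns 1 if set, 0 on non-x86-64 targets. */
int enable_ftz_daz(void) {
#ifdef HAVE_MXCSR
    _MM_SET_FLUSH_ZERO_MODE(_MM_FLUSH_ZERO_ON);
    _MM_SET_DENORMALS_ZERO_MODE(_MM_DENORMALS_ZERO_ON);
    return 1;
#else
    return 0;
#endif
}
```

FTZ and DAZ change numerical results for very small values, because denormals become zero instead of being computed slowly via microcode assists. Only enable them if your algorithm tolerates that.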


For More Information

Intel VTune Performance Analyzer user forums: http://software.intel.com/en-us/forums/intel-vtuneperformance-analyzer/
Go Parallel resource site: http://www.ddj.com/go-parallel
Intel 64 and IA-32 software developer manuals: http://www.intel.com/products/processor/manuals/index.htm
The Intel PTU tool provides a more advanced level of tuning with access to more counter capabilities. Learn more about PTU at: http://whatif.intel.com

© 2009-2010, Intel Corporation. All rights reserved. Intel, the Intel logo, Intel Core and VTune are trademarks of Intel Corporation in the U.S. and other countries. *Other names and brands may be claimed as the property of others. 0209/BLA/CMD/PDF 321520-001


- Ex Delta Ex Delta - Dia: OVAL CorporationDocumento8 pagineEx Delta Ex Delta - Dia: OVAL CorporationDaniela GuajardoNessuna valutazione finora

- Elvax 460Documento3 pagineElvax 460ingindjorimaNessuna valutazione finora

- Wri Method FigDocumento15 pagineWri Method Figsoumyadeep19478425Nessuna valutazione finora

- Battery Power Management For Portable Devices PDFDocumento259 pagineBattery Power Management For Portable Devices PDFsarikaNessuna valutazione finora

- VULCAN Instruction Manual ALL-A4Documento94 pagineVULCAN Instruction Manual ALL-A4Ayco DrtNessuna valutazione finora

- Synthetic Rubber Proofed/Coated Fuel Pump Diaphragm Fabric-Specification (Documento9 pagineSynthetic Rubber Proofed/Coated Fuel Pump Diaphragm Fabric-Specification (Ved PrakashNessuna valutazione finora

- ZXComputingDocumento100 pagineZXComputingozzy75Nessuna valutazione finora

- Dnvgl-Ru-Ships (2015) Part 3 Ch-10 TrolleyDocumento7 pagineDnvgl-Ru-Ships (2015) Part 3 Ch-10 TrolleyyogeshNessuna valutazione finora

- KSB - Submersible Pump - Ama Porter 501 SEDocumento30 pagineKSB - Submersible Pump - Ama Porter 501 SEZahid HussainNessuna valutazione finora

- Deepwater Horizon Accident Investigation Report Appendices ABFGHDocumento37 pagineDeepwater Horizon Accident Investigation Report Appendices ABFGHBren-RNessuna valutazione finora

- A New Finite Element Based On The Strain Approach For Linear and Dynamic AnalysisDocumento6 pagineA New Finite Element Based On The Strain Approach For Linear and Dynamic AnalysisHako KhechaiNessuna valutazione finora

- Logcat 1693362990178Documento33 pagineLogcat 1693362990178MarsNessuna valutazione finora

- Water System PQDocumento46 pagineWater System PQasit_mNessuna valutazione finora

- TIL 1881 Network Security TIL For Mark VI Controller Platform PDFDocumento11 pagineTIL 1881 Network Security TIL For Mark VI Controller Platform PDFManuel L LombarderoNessuna valutazione finora

- d9 VolvoDocumento57 pagined9 Volvofranklin972100% (2)

- Libeskind Daniel - Felix Nussbaum MuseumDocumento6 pagineLibeskind Daniel - Felix Nussbaum MuseumMiroslav MalinovicNessuna valutazione finora

- Host Interface Manual - U411 PDFDocumento52 pagineHost Interface Manual - U411 PDFValentin Ghencea50% (2)

- McLaren Artura Order BKZQG37 Summary 2023-12-10Documento6 pagineMcLaren Artura Order BKZQG37 Summary 2023-12-10Salvador BaulenasNessuna valutazione finora

- PDK Repair Aftersales TrainingDocumento22 paginePDK Repair Aftersales TrainingEderson BJJNessuna valutazione finora

- Qualcast 46cm Petrol Self Propelled Lawnmower: Assembly Manual XSZ46D-SDDocumento28 pagineQualcast 46cm Petrol Self Propelled Lawnmower: Assembly Manual XSZ46D-SDmark simpsonNessuna valutazione finora

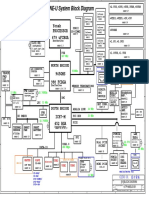

- Clevo M620ne-UDocumento34 pagineClevo M620ne-UHh woo't hoofNessuna valutazione finora

- cjv30 Maintenance V10a PDFDocumento101 paginecjv30 Maintenance V10a PDFEdu100% (1)

- MS3XV30 Hardware 1.3Documento229 pagineMS3XV30 Hardware 1.3Colton CarmichaelNessuna valutazione finora

- MB m.2 Support Am4Documento2 pagineMB m.2 Support Am4HhhhCaliNessuna valutazione finora

- LN3 Geng2340Documento61 pagineLN3 Geng2340Seth VineetNessuna valutazione finora

- 4 MPM Scope - OutputDocumento45 pagine4 MPM Scope - OutputSajid Ali MaariNessuna valutazione finora

- iPhone 14 Guide for Seniors: Unlocking Seamless Simplicity for the Golden Generation with Step-by-Step ScreenshotsDa EverandiPhone 14 Guide for Seniors: Unlocking Seamless Simplicity for the Golden Generation with Step-by-Step ScreenshotsValutazione: 5 su 5 stelle5/5 (4)

- CompTIA A+ Complete Review Guide: Core 1 Exam 220-1101 and Core 2 Exam 220-1102Da EverandCompTIA A+ Complete Review Guide: Core 1 Exam 220-1101 and Core 2 Exam 220-1102Valutazione: 5 su 5 stelle5/5 (2)

- Chip War: The Quest to Dominate the World's Most Critical TechnologyDa EverandChip War: The Quest to Dominate the World's Most Critical TechnologyValutazione: 4.5 su 5 stelle4.5/5 (229)

- Chip War: The Fight for the World's Most Critical TechnologyDa EverandChip War: The Fight for the World's Most Critical TechnologyValutazione: 4.5 su 5 stelle4.5/5 (82)

- iPhone Unlocked for the Non-Tech Savvy: Color Images & Illustrated Instructions to Simplify the Smartphone Use for Beginners & Seniors [COLOR EDITION]Da EverandiPhone Unlocked for the Non-Tech Savvy: Color Images & Illustrated Instructions to Simplify the Smartphone Use for Beginners & Seniors [COLOR EDITION]Valutazione: 5 su 5 stelle5/5 (4)

- CompTIA A+ Complete Practice Tests: Core 1 Exam 220-1101 and Core 2 Exam 220-1102Da EverandCompTIA A+ Complete Practice Tests: Core 1 Exam 220-1101 and Core 2 Exam 220-1102Nessuna valutazione finora

- Computer Science: A Concise IntroductionDa EverandComputer Science: A Concise IntroductionValutazione: 4.5 su 5 stelle4.5/5 (14)

- CompTIA A+ Complete Review Guide: Exam Core 1 220-1001 and Exam Core 2 220-1002Da EverandCompTIA A+ Complete Review Guide: Exam Core 1 220-1001 and Exam Core 2 220-1002Valutazione: 5 su 5 stelle5/5 (1)

- Arduino and Raspberry Pi Sensor Projects for the Evil GeniusDa EverandArduino and Raspberry Pi Sensor Projects for the Evil GeniusNessuna valutazione finora

- CompTIA A+ Certification All-in-One Exam Guide, Eleventh Edition (Exams 220-1101 & 220-1102)Da EverandCompTIA A+ Certification All-in-One Exam Guide, Eleventh Edition (Exams 220-1101 & 220-1102)Valutazione: 5 su 5 stelle5/5 (2)

- Cyber-Physical Systems: Foundations, Principles and ApplicationsDa EverandCyber-Physical Systems: Foundations, Principles and ApplicationsHoubing H. SongNessuna valutazione finora

- iPhone X Hacks, Tips and Tricks: Discover 101 Awesome Tips and Tricks for iPhone XS, XS Max and iPhone XDa EverandiPhone X Hacks, Tips and Tricks: Discover 101 Awesome Tips and Tricks for iPhone XS, XS Max and iPhone XValutazione: 3 su 5 stelle3/5 (2)

- iPhone Photography: A Ridiculously Simple Guide To Taking Photos With Your iPhoneDa EverandiPhone Photography: A Ridiculously Simple Guide To Taking Photos With Your iPhoneNessuna valutazione finora

- Raspberry PI: Learn Rasberry Pi Programming the Easy Way, A Beginner Friendly User GuideDa EverandRaspberry PI: Learn Rasberry Pi Programming the Easy Way, A Beginner Friendly User GuideNessuna valutazione finora

- Creative Selection: Inside Apple's Design Process During the Golden Age of Steve JobsDa EverandCreative Selection: Inside Apple's Design Process During the Golden Age of Steve JobsValutazione: 4.5 su 5 stelle4.5/5 (49)

- Samsung Galaxy S22 Ultra User Guide For Beginners: The Complete User Manual For Getting Started And Mastering The Galaxy S22 Ultra Android PhoneDa EverandSamsung Galaxy S22 Ultra User Guide For Beginners: The Complete User Manual For Getting Started And Mastering The Galaxy S22 Ultra Android PhoneNessuna valutazione finora

- The comprehensive guide to build Raspberry Pi 5 RoboticsDa EverandThe comprehensive guide to build Raspberry Pi 5 RoboticsNessuna valutazione finora

- Cancer and EMF Radiation: How to Protect Yourself from the Silent Carcinogen of ElectropollutionDa EverandCancer and EMF Radiation: How to Protect Yourself from the Silent Carcinogen of ElectropollutionValutazione: 5 su 5 stelle5/5 (2)

- The User's Directory of Computer NetworksDa EverandThe User's Directory of Computer NetworksTracy LaqueyNessuna valutazione finora

- Jensen Huang's Nvidia: Processing the Mind of Artificial IntelligenceDa EverandJensen Huang's Nvidia: Processing the Mind of Artificial IntelligenceNessuna valutazione finora

- Hacking With Linux 2020:A Complete Beginners Guide to the World of Hacking Using Linux - Explore the Methods and Tools of Ethical Hacking with LinuxDa EverandHacking With Linux 2020:A Complete Beginners Guide to the World of Hacking Using Linux - Explore the Methods and Tools of Ethical Hacking with LinuxNessuna valutazione finora

![iPhone Unlocked for the Non-Tech Savvy: Color Images & Illustrated Instructions to Simplify the Smartphone Use for Beginners & Seniors [COLOR EDITION]](https://imgv2-1-f.scribdassets.com/img/audiobook_square_badge/728318688/198x198/f3385cbfef/1715431191?v=1)