Documenti di Didattica

Documenti di Professioni

Documenti di Cultura

05-Huawei Storage Reliability Technologies

Caricato da

Agustin GarciaCopyright

Formati disponibili

Condividi questo documento

Condividi o incorpora il documento

Hai trovato utile questo documento?

Questo contenuto è inappropriato?

Segnala questo documentoCopyright:

Formati disponibili

05-Huawei Storage Reliability Technologies

Caricato da

Agustin GarciaCopyright:

Formati disponibili

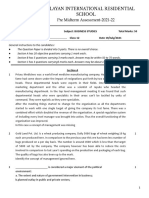

2.

2019/9

Storage Reliability Technologies

HUAWEI TECHNOLOGIES CO., LTD.

Contents

1 Storage Reliability Metrics

2 Module-Level Reliability

3 System-Level Reliability

4 Solution-Level Reliability

5 O&M Reliability

6 Reliability Tests and Certifications

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 2

Storage Reliability Metrics

Downtime DT = (1 – A) x 8760 x 60 (minutes/year)

Time during which a service or

MTBF function is unavailable

Mean Time Between Failure

Durability

Service HA No data loss

Availability

Availability = MTBF/(MTBF + MTTR) Durability = 1 – Data Loss Rate

MTTR Maintainability

Mean Time To Recover Rapid fault recovery

Repair rate = 1/MTTR

High hardware reliability

Reliability Failure rate = 1/MTBF, 1 FITs = 10-9 (1/h)

Return repair rate F(t) = x t Annual return repair rate = x 8760

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 3

Overview of Storage System Reliability

Level 3:

Solution level

Data reliability Service availability O&M reliability

The data protection Geo- Component

Remote Backup and Active-active Rolling upgrade

function and disaster redundancy upgrade

replication archiving

recovery (DR) solutions

are used to provide Proactive

system-level data Snapshot Data clone maintenance

protection and DR.

Level 2: Node Continuous Bad block/sector Slow disk

RAID 2.0+ DIX+T10 PI

System level redundancy mirroring scanning isolation

Based on system-level Fast Reconstructio Overload Multiple Health

reliability design, fault Wear leveling

reconstruction n and offload control cache copies evaluation

self-healing and data

integrity protection are Matrix Second-level Quick response

Pre-copy

provided for a system. protection switchover to slow I/Os

Level 1:

Module level

Hardware

Component Device Media Production Disk

Model reliability is ensured.

reliability

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 4

Contents

1 Storage Reliability Metrics

2 Module-Level Reliability

3 System-Level Reliability

4 Solution-Level Reliability

5 O&M Reliability

6 Reliability Tests and Certifications

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 5

Module-Level Reliability — Component

Module System Solution

Level Level Level

Component reliability Disk reliability Data integrity Planned activity

• Optical module • Storage component • Fault forecast • Anti-vibration design

• Clock • Connector • Production- • ERT • T10 PI

• Dedicated IC • Error prevention

component phase filtering • Heat dissipation design • Chip ECC/CRC

• Replacement of

Component • Soft error faulty components

Reliability of Huawei-developed Media

SSD reliability prevention

• Reliable upgrade

chips • Backup power • Load balancing algorithm • Protocol data • Reliable expansion

integrity

• Error detecting • ECC/CRC reliability • Error correction algorithm

• Error handling • RAID • Bad block management • Data storage

• BIST

redundancy Running fault

detection and

• Key hardware signals • Storage components • Board process/materials self-healing

• BIST

Manufacturing

Board Board reliability • Low-speed management bus • Clock signals • Fault diagnosis and

• Board temperature • Board power supply • SI/PI reliability locating

• Materials • Fault prediction

screening • Device inspection

• Burn-in testing before power-on

System power supply/backup • ORT

Redundancy design System cooling • Environment

Device power • IQC exception detection

• FRU/Network redundancy • Fan reliability

• PDU/BBU/CBU/Power supply reliability • Component/Link/

Component/Software/

Data/Protocol

Anti- Dust Moisture Security standards detection and self-

Environment Power Temperature Anti-vibration

corrosion

Altitude

proof proof

EMC

compliance healing

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 6

Electronic products failure rate (Hardware)

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 7

Module-Level Reliability — Disk Module System Solution

Level Level Level

Manufacturing and Screening

ERT Joint test

About 1000 disks have

Tests/audits during ORT long-term test

Sampling test by Huawei production

been tested for three the supplier's for product samples

Huawei test

months by introducing production after delivery

multiple acceleration 5-level FA

factors.

analysis

System test Tiltwatch/Shockwatch

I. System/Disk logs

Locate simple

500+ test cases cover label problems quickly.

the following fields: ERT long-term Circular improvements Disk deployment test

System functions and II. Protocol analysis

reliability test (Quality/Supply/Cooperation) Locate interactive

performance,

compatibility with earlier problems accurately.

versions, disk system III. Electrical signal

reliability Failure analysis analysis

Locate complex disk

Disk test Online quality data problems.

100+ test cases cover the Joint test Component

Start:

analysis/warning IV. Disk teardown for

authentication/ system

following fields: analysis

Functions, performance,

Qualification test Preliminary test for R&D Design review Locate physical

samples/Analysis on

compatibility, reliability,

product applications

Disk selection RMA reverse damages of disks.

firmware test, vibration,

V. Data restoration

temperature cycle, disk

head, disk, special

specifications verification Introduction

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 8

Module-Level Reliability — Cause for Module System Solution

Level Level Level

Silent Data Corruptions on Disks

• Silent data corruption: Data is changed unexpectedly but no

error or warning is reported. Head

– The Remzi team from the University of Wisconsin–Madison

conducted 41 months' research on more than 1.5 million

Platter

HDDs and found more than 400,000 silent data corruptions. Head flying Fingerprint Hair

(3000

height (400 microinch)

Smoke

• Typical silent data corruptions on storage media: (2-4

microinch) (250

Dust

(1500

microinch)

microinch) microinch)

– Bit corruption

– Lost write

– Misdirected writes

– Torn writes

• Causes:

– Firmware bugs

– Process defects, such as head deviation from the track and FLASH CELL

head contamination caused by oil leakage

– Electromagnetic/Signal interference, such as read/write

interference and cosmic ray interference

– Component aging Data retention error

Note 1: With the increase of magnetic recording density, the head flying height becomes lower and the particle stability decreases gradually. In this

case, silent data corruptions are more likely to occur when there are process defects or electromagnetic/signal interference.

Note 2: Electronic escape may occur on the FLASH CELL of SSDs as time goes by, which causes the bit value to change from 0 to 1.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 9

Module-Level Reliability — Key Module System Solution

Level Level Level

SSD Technologies

Data Redundancy Wear Leveling

Rebuilding of bad pages Using redundant information Erase cycle Erase cycle

⊕ 100% 100%

50% 50%

x Block Block

Uncorrectable

① Granular multi-copy & RAID: metadata (multiple copies) and user data (RAID) ① Wear leveling: periodically moves data blocks so that the data blocks with less

② Data restoration: LDPC, Read Retry, and intra-disk XOR that enable data wear can be used again(There level of SSD wear threshold:

restoration using redundancy mild/moderate/severe~ <50%/<=50%<85%/>=85%).

Bad Block Management and Background Inspection Advanced Management

Logic Block Physical Block

Block 0 Block 0 Pool

Block 1 Invalid Block

Block 2 Block 2 ③

Block 4 Block 3 BDM

② Unused ①

Unused

Block 5 Block

Unused Reservoir

DISK

DISK DISK

DISK

① Background Scan: combines read and write Scan and proactively reports bad ①②

blocks detected during Scan .

② Bad block isolation: detects, migrates, and isolates bad blocks.

③ Power failure protection: During power failure, capacitors are used to ① Online diagnosis: automatic fault diagnosis and location

provide sufficient power to write data in the memory to flash, ensuring zero ② Online self-healing: restores a disk to its factory settings online.

data loss. ③ DIE failure: active reporting and capacity reduction

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 10

Contents

1 Storage Reliability Metrics

2 Module-Level Reliability

3 System-Level Reliability

4 Solution-Level Reliability

5 O&M Reliability

6 Reliability Tests and Certifications

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 11

Module System Solution

System-Level Reliability Level Level Level

Service HA

Full-redundancy Solid Data Reliability

architecture End-to-end data reliability

Continuous design

mirroring RAID 2.0+

Multiple cache Fast reconstruction

copies Reconstruction and offload

Controller failover Pre-copy

in seconds Matrix protection

SmartQoS DIX+T10 PI

Disk Fault Tolerance

Disk reliability panorama

SSD wear leveling/anti–wear

leveling

Bad sector/block scanning

and repair

Health evaluation

Slow disk isolation

Quick response to slow I/Os

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 12

Service HA Module

Level

System

Level

Solution

Level

Host

1 • Host multi-path End-to-End Redundancy Design

Front end Front end

Interface Interface Interface Interface

Interface

Interface

module module module module

module

module

Controller

A

Controller

B

Controller

A

Controller

B

1. Path Redundancy: host multi-path/disk

Isolation of management

3 multi-path …

and data planes

4 • Cache Mirroring

Software Software Software Software

2. Component Redundancy:

BBU/Interface card/controllers …

Interface

Interface

module

module

Interface

module

Interface

module Inter- Inter-

Interface

module

Interface

module 3. Data Redundancy: Cache

Back end

enclosure

switching

enclosure

switching Back end mirror/RAID/Data Integrity …

2 Disk multi-path

Disk

enclosure 4. Isolation: management,IO Planes

RAID ... isolation/smart Qos …

3 ... ... ... ... ... ... ... ... ... ... ...

RAID ...

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 13

Module System Solution

Service HA(Dorado V6) Level Level Level

Host

End-to-End Redundancy Design

1

Front end Controller Controller Front end

A enclosure B

Interface Interface Interface Interface 1. Services and host connections are not adversely affected if

Interface

Interface

module module module module

module

module

a controller is faulty: front-end interconnect I/O modules,

protocol offload, and controller failover within seconds

Controller Controller Controller Controller Controller Controller Controller Controller 2. Services are not interrupted if multiple controllers are

A B C D A B C D

faulty: three copies of controller data cache, continuous

Interface

Interface

module

module

2 mirroring, and cross-engine mirroring

3. Services and host connections are not adversely affected if

6 the software is faulty: Process availability is checked in real

Interface

Interface

module

module

Software Software Software Software Software Software Software Software

time. If a process is faulty, the process can be started in

seconds. If a background task becomes faulty frequently, the

3 task is isolated intermittently.

Interface

Interface

module

module

4. Services are not interrupted if an engine or multiple

Interface 4 Interface Interface Interface controllers are faulty: Back-end interconnect I/O modules

module module Inter- Inter- module module ensure interconnection among engines.

enclosure enclosure

Back end switching switching Back end 5. Services are not interrupted if multiple disks are faulty:

User data supports EC-2/EC-3 and multiple copies.

Disk 6. The controller does not reset and services are not

enclosure interrupted if a switch module is faulty: High-end storage is

5 equipped with multiple interface modules for redundancy. Mid-

RAID ...

range and entry-level storage is equipped with a single interface

... ... ... ... ... ... ... ... ... ... ... module. If the interface module is faulty, TCP forwarding is

RAID ... implemented.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 14

Service HA — Continuous Mirroring(Dorado V6)

Module System Solution

Level Level Level

Fault Fault Fault

1 2 3 4 2 3 4 2 3

4* 1* 2* 3* 1 2* 3* 1 2*

Mirroring the copy Continus mirroring

4 1*

4* 1*

3* 4*

Controller A Controller B Controller C Controller D Controller A Controller B Controller C Controller D Controller A Controller B Controller C Controller D

Normal Failure of one controller (controller A) Failure of one more controller (controller D)

Continuous mirroring (ensuring service continuity when seven out of eight controllers are faulty): If controller A is faulty,

controller B detects and creates a mirroring relationship with controllers C and D to ensure dual-copy redundancy. If controller D fails

at the moment, controllers B and C detect each other and create copies for data blocks on each other, ensuring two copies of cached

data. In an 8-controller scenario, a maximum of seven controllers can fail consecutively without interrupting services.

Service continuity: If a controller fails, its services are quickly switched to the mirror controller, and the mirroring relationship is re-

established for the remaining controllers. This feature eliminates the performance deterioration associated with the controller failure

because the surviving controller does not enter the write-through mode and is still in the write-back mode. In addition, data mirrors

ensure system reliability.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 15

Module System Solution

Service HA — Multiple Cache Copies(Dorado V6) Level Level Level

Controller enclosure 0 Controller enclosure 1

1 2 3 4 5 6 7 8

4* 1* 2* 3* 8* 5* 6* 7*

5** 6** 7** 8** 1** 2** 3** 4**

Controller Controller Controller Controller Controller Controller Controller Controller

A B C D A B C D

Services are not interrupted if two controllers are faulty: Three copies of cached data are written to hosts. That

is, for data of different LBAs, the system creates a pair to establish a dual-copy relationship and selects another

node except the current pair to house the third copy.

Services are not interrupted if the controller enclosure is faulty: When the system has two or more controller

enclosures, the controllers in other controller enclosures can be used to house the third copy. This ensures that

services are not interrupted if a controller enclosure (containing four controllers) is faulty.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 16

Service HA — Controller Failover Module System Solution

Level Level Level

Within Seconds(Dorado V6)

Host Quick Controller Failover

Controller A

Front end

1. The host delivers a request to controller A: If no

Front-end interconnect I/O module 1 Front-end interconnect I/O module 2

controller is faulty, host I/Os are delivered to controller A

through front-end interconnect I/O module 1.

Controller A Controller B Controller C Controller D

2. Controller A is faulty: If controller A is faulty, front-end

interconnect I/O module 1/2 and controller B/C/D detects

4 that the controller A is unavailable by means of

1

3 interruption.

3. Service switchover: Because the copies of data on

controller A are stored on controller B/C/D, only the status

2 X of the virtual node managed by controller A needs to be

switched to controller B/C/D, and the service switch

succeeds (less than one second is required). Then the

front-end interconnect I/O module is instructed to refresh

Interface module Interface module the distribution view.

Back end

4. I/O path switch: The front-end interconnect I/O module

Disk enclosure returns BUSY for the I/Os that have been delivered to

controller A. The retried and new I/Os delivered by the

RAID ... host are delivered to controller B/C/D based on the new

... ... ... ... ... ... view.

RAID ...

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 17

Module System Solution

Data Reliability Level Level Level

LUN End-to-End Data Reliability Design

Host

① Data redundancy: multiple copies

SAN ② Data+PI of metadata and data or N+M, EC

Redundancy ② End-to-end data integrity check:

The storage array uses T10 PI to

verify data integrity during internal

DC 1 DC 2 transmission, including data

Index ② Data+PI verification on disks and replication

Layer

L

Verification arrays. T10 PI provides full path

Meta

②Data+PI data verification protection.

③ Parent-child verification of

metadata and data: The system

③ Meta CRC

Persistence

Layer Self-healing checks the read offset and missed

write of disks.

FP⑥

④ Periodic stripe consistency

Data

④ scanning and repair

① ⑤ Rapid data reconstruction powered

② Recovery

⑤ Data+PI by RAID 2.0+ upon a disk fault

… ⑥ Data self-description, metadata

recovery upon damage

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 18

Solid Data Reliability — RAID 2.0+

Module System Solution

Level Level Level

Traditional LUN

Block

LUN

RAID virtualization

RAID

RAID Hot

RAID

group 1

spare

group 2

Traditional RAID Block Virtualization RAID

Resource management based on disks Resource management based on data blocks

I/Os of all LUNs are distributed to all disks to ensure that data of each LUN is

I/Os of a LUN are processed by limited disks in a RAID group.

evenly distributed on disks, balancing performance.

Slow reconstruction: If a single disk is faulty, only a limited number of disks in Fast reconstruction: If a single disk is faulty, all disks participate in

the RAID group participate in reconstruction. reconstruction.

A hot spare disk must be specified. Once the hot spare disk is faulty, it must be Reconstruction can be performed as long as there is free space, independent of

replaced in time. specific hot spare disks.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 19

Solid Data Reliability — Fast Module System Solution

Level Level Level

Reconstruction

HDD 0 HDD 4 HDD 8

01 02 03 41 42 43 81 82 83 CKG 0 01 26 33 74 88

04 05 06 44 45 46 84 85 86

HDD 0 HDD 4 HDD 8

07 08 09 47 48 49 87 88 89 x

CKG 1 03 16 44 66 73

HDD 1 HDD 5 HDD 9

81

11 12 13 51 52 53 91 92 93

HDD 1

HDD 5

(Hot spare)

HDD 9 RAID 5 (4+1)

x

14 15 16

17 18 19

54 55 56

57 58 59

94 95 96

97 98 99

⊕

0 1 2 3 4

HDD 2 HDD 6 x

CKG 2 14 28 42 56 96

5 21 22 23 61 62 63

68

⊕

HDD 2 HDD 6 24 25 26 64 65 66

27 28 29

HDD 3

67 68 69

HDD 7 ⊕

31 32 33 71 72 73 CKG 3 21 34 51 71 99

HDD 3 HDD 7 34 35 36 74 75 76

Rebuilding Rebuilding

37 38 39 77 78 79

Reconstruction using traditional RAID: During reconstruction, data Reconstruction using RAID 2.0+: RAID 2.0+ supports dozens of member

is read from the other functional disks and reconstructed. Then, disks. Each disk is equipped with dedicated hot spare space. When a disk

reconstructed data is written to a hot spare disk or a new disk. The fails, other disks participate in reconstruction reads and writes, greatly

write performance of a single disk restricts the reconstruction. shortening the reconstruction time. As more disks share reconstruction loads,

Therefore, the reconstruction takes a long time. the load on each disk significantly decreases.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 20

Solid Data Reliability — Reconstruction Module System Solution

Level Level Level

and Offload

3 Calculate the number

Controller of faulty blocks. 4 Write faulty

Controller Reconstruction

blocks into the hot

1 Initiate a disk spare space. 1. Reconstruction occupies controller computing resources

read request. (CPU resources): If a single or multiple disks are faulty, all

data is computed on the controller, causing the controller

Disk enclosure 1 Disk enclosure 2 CPU to be overloaded. The host I/O processing capabilities

2 Read 23 blocks. are adversely affected.

2. Reconstruction occupies massive data write bandwidth:

Disk 1 Disk 2 ... Disks Disk Disk 1 Disk 2 ... Disk Disk

All data on the disks in the RAID group is read to the

controller for computing, occupying the data write bandwidth.

3-5 36 35 36 As a result, the host I/O write bandwidth is affected.

5 Use P', Q', P", and Q"

Controller to calculate D1 and Dx'. 6 Write faulty

Reconstruction and Offload (2X Performance

blocks into the hot Improvement)

1.1 Initiate a 4.1 Calculate spare space.

reconstruction task. transmission 1.2 Initiate a 4.1 Calculate

1. The computing of RAID member disks is offloaded to the

P' and Q'. reconstruction task. transmission P" and Q". IP enclosure: The logic of the IP enclosure is simple, and the

CPUs are idle. The disk recovery data read by the IP

Disk Disk

Disk read Compute Disk read Compute enclosure is calculated in the enclosure by using P' and Q'.

enclosure 1 module module enclosure 2 module module 2. Reconstruction occupies a small amount of data write

2.1 Initiate a disk 2.1 Initiate a disk 3.2 Read bandwidth: When RAID data recovery is involved, data on

3.1 Read

read request. 12 blocks.

Disk

read request.

Disk

... Disks

12 blocks.

Disk Disk Disk

... Disk Disk

the disk to be recovered is computed in the IP enclosure and

does not need to be transmitted to the controller, reducing

back-end bandwidth occupation and reducing the impact of

1 2 3-5 36 1 2 35 36

reconstruction on system performance.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 21

Solid Data Reliability — DIX+T10 PI

Module System Solution

Level Level Level

Database OS Adapter Storage SSD

Write When data is written

DIX protection

The HBA verifies the DIX

into host memory, parity information, deletes The storage system

operation Oracle adds 8-byte Data

information passes

the DIX protection uses T10 PI to verify

through the operating

Integrity Extensions information, converts the data integrity in transfer

system to the HBA

(DIX) protection parity information into T10 PI, and stores T10 PI and

driver with the I/O

information for each and sends the T10 PI to the the data on disks.

request.

sector. storage array with the data.

Read The storage system

DIX protection

operation The ASM library verifies

information passes

The HBA verifies T10 PI,

deletes it, generates the DIX

reads data and T10 PI

from disks and verifies

through the operating

the data and DIX protection information, and data integrity. If an error

system to the

protection information. returns the protection is detected, the storage

application layer with

information back to the host. system uses RAID to

the I/O request.

restore data.

Storage systems can not only employ T10 PI to ensure the integrity of data within a storage system but also employ DIX+T10 PI to

protect end-to-end data integrity from Oracle databases to disks.

Multiple nodes within a storage system implement data parity. Such nodes include front-end chips, memories, back-end chips, and

other critical nodes in I/O paths.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 22

Module System Solution

Disk Fault Tolerance Level Level Level

FlashLink-Based Disk-Controller Disk Subhealth and Service Life

Disk Fault Management

Collaboration Management

Invalid data

CKG SAN

①

③ ⊕

①

High Latency

②

Idle CKG ⊕

x

x

① Quick response to slow I/Os: prevents

① Hot and cold data separation services from being affected by long retry and

② ROW full-stripe write recovery time. ① Intelligent bad block repair: The system

③ Global garbage collection: Valid data in the ② Smart disk health evaluation: detects and periodically queries the pending list of each

CKG with a large amount of garbage is isolates slow disks, disks with too many HSSD and rectifies the detected bad blocks in

migrated to the newly allocated CKG and the uncorrectable error-correcting code errors a timely manner.

storage space is reclaimed to the storage (UNCs), and disks with bit errors. ② Online diagnosis: The storage system works

pool. The system notifies the SSD to start ③ Service life management: provides with HSSDs to quickly diagnose disk fault

garbage collection in the disk. visualized life information display and life types and impact scopes and recover the

④ Global wear leveling and anti-wear leveling prediction. HSSDs.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 23

Solid Disk Reliability — Quick Module System Solution

Level Level Level

Response to Slow I/Os

Technical principles

Quick Response to Slow I/Os Disks retry for several times due to physical bad

sectors, head problems, or vibration, prolonging

a the I/O response time (second-level) and

causing service congestion or interruption.

The storage system monitors the response to

I/Os delivered to disks. If the response time

IP/FC SAN

exceeds the upper limit, the disks with slow I/Os

will not be accessed for a specified period of

time and the RAID group is degraded to quickly

respond to the host.

a'

Technical advantages

b XOR c XOR d = a The adverse impact brought by slow disks or

b c d slow disk response within a short period can be

eliminated before disks are isolated. I/O

x RAID

requests can be responded in a timely manner,

preventing services from being affected by long

retry and recovery policies.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 24

Solid Disk Reliability — Slow Disk Module System Solution

Level Level Level

Detection and Isolation

Technical principles

Slow Disk Detection and Isolation Based on disk characteristics and array attributes, divide

domains by disk type (disk rotational speed, disk interface type,

Slow and disk type). Use the average service time of multiple disks

disk in a domain as the average service time of the domain and

compare the average service time of the disks in the domain

Average with the average service time of the domain to find out the

service disks that are relatively slower.

time of Compare the average service time of a disk with the average

disks service time of other disks in the same domain. If this disk is

identified slower in multiple periods, the system determines that

this disk is a slow disk and returns a message indicating that

the disk is temporarily isolated to services for diagnosis. If the

disk cannot be recovered, it will be permanently isolated.

Technical advantages

When all disks in the system become slow, the latency of a

single disk cannot be much greater than that of other disks. In

Disk this case, no disk will be isolated, reducing the disk return rate.

Slow disks are identified and isolated only after diagnosis and

Abnormal Normal repair.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 25

Solid Disk Reliability — Bad Sector/Block Module System Solution

Level Level Level

Scanning and Repair

Technical principles

Bad Sector Repair Characteristics of bad sector generation:

space-time locality

Storage systems periodically scan disks for

potential faults.

0 1 2 3 4 5

Scan algorithm: cross scan, dynamic rate

adjustment

When detecting a bad sector, the storage

system employs RAID group redundancy to

IP/FC SAN

recover data on the bad sector and writes the

recovered data into a new disk (disk remapping

XOR XOR = 5'

will isolate the faulty area and map the area to

the internal reserved area).

Technical advantages

The disk vulnerability can be detected and

0 1 2 P0

removed as early as possible to minimize the

3 4 P1 5 system risks due to disk failure.

3 4' P1'

Prolongs disks' service life and maximizes

return on investment (ROI).

RAID/CKG

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 26

Solid Disk Reliability — Health Evaluation

Module System Solution

Level Level Level

Service Life Prediction

Disk health analysis (DHA): A

comprehensive system that integrates disk

data collection, health status monitoring,

data retransmission, data analysis,

DHA collection

DHA expert improvement, and optimization.

and analysis

system

system

DHA monitors online disks and evaluates the

health status of the disks. If the evaluation

result is lower than the security threshold,

disk pre-copy is implemented and risky disks

are isolated. The assessment criteria are

improved based on a large number of

samples, optimizing prediction results.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 27

Solid Disk Reliability — SSD Global Wear Module System Solution

Level Level Level

Leveling/Anti-wear Leveling

Global Wear Leveling and Anti-wear Leveling

Technical principles

Wear leveling: The workload is evenly

distributed to each disk to prevent some

Anti- disks from failure due to frequent access.

wear

leveling Anti-wear leveling: When detecting that the

Physical SSD #0 Physical SSD #1 Physical SSD #2 Physical SSD #3 SSD wear has reached the threshold, the

system starts anti-global wear leveling.

Unbalanced data distribution on the SSDs

Wear makes their wear degrees differ by at least

leveling 2%. This function prevents multiple disks

from reporting pre-failures in a short time.

Physical SSD #0 Physical SSD #1 Physical SSD #2 Physical SSD #3

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 28

Contents

1 Storage Reliability Metrics

2 Module-Level Reliability

3 System-Level Reliability

4 Solution-Level Reliability

5 O&M Reliability

6 Reliability Tests and Certifications

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 29

Module System Solution

Solution-Level Reliability Level Level Level

Intra-array Reliability Solution

Production Production Remote DR ① Snapshot: snapshot activation using multi-time-segment cache technology

DC A DC B center C does not block host I/Os; automatic allocation and management of snapshot

storage space based on the RAID 2.0+ storage virtualization architecture;

Host cluster high-density snapshot

② Clone: physical and complete consistency copies, isolation of the primary

and secondary LUNs; consistency groups across multiple LUNs; incremental

synchronization between the primary and secondary LUNs

FC/IP

④ SAN

Inter-array Reliability Solution

③

Asynchronous

replication ③ Active-active

Intra-city Active-active architecture: Active-active LUNs are readable and

FC/IP SAN active-active FC/IP SAN writable in both DCs and data is synchronized in real time.

solution

① ②

High reliability: Cross-site bad block repair improves system reliability.

High performance: Multiple performance tuning measures are provided,

Real-time data

Cloning Snapshot reducing latency of interactions between two DCs and improving service

Snapshot Cloning synchronization Public cloud

performance by 30%.

Elastic scalability: expanded to the 3DC DR solution based on the

...

CloudBackup

...

remote replication solution.

Dorado V6 Dorado V6 ⑤ ④ Remote replication: Synchronous and asynchronous data replication

across storage arrays provides intra-city and remote data protection.

IP IP HEC / AWS

network network Cloud Backup Solution

⑤ CloudBackup: The public cloud object storage is used as the backup

Quorum storage to prevent data loss caused by manual or physical faults on storage

device devices in the enterprise DC.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 30

Service HA — SmartQoS Module

Level

System

Level

Solution

Level

Upper Limit Control

Host 1. Priority setting: The storage system converts the traffic control objective into a

number of tokens. You can set upper limit objectives for low-priority LUNs or

snapshots to guarantee sufficient resources for high-priority LUNs or snapshots.

2. Token application: The storage system processes the dequeued LUN or

snapshot I/Os by tokens. Requests can be dequeued and processed only when

sufficient LUNs or snapshots and host tokens are obtained.

Burst Quota

1. Token accumulation: If the performance of a LUN, snapshot, LUN group, or

host is lower than the upper threshold within a second, a one-second burst

duration is accumulated. When the service pressure suddenly increases, the

LUNs whose traffic LUNs whose LUNs whose performance can exceed the upper limit and reach the burst traffic. The

does not reach the traffic reaches the traffic far exceeds accumulated tokens are used by the current objects and the duration is

lower limit lower limit the lower limit configured. In this way, the system can respond to the burst traffic in time.

No Burst Traffic

suppression prevention suppression Lower Limit Guarantee

1. Minimum traffic: If each LUN is configured with the minimum traffic

(IOPS/bandwidth) by default, the minimum traffic must be ensured when the

LUN is overloaded.

2. Traffic suppression for high-load LUNs: When the system is overloaded, if

the traffic of some LUNs does not reach the lower limit, the system performs

... load rating on all LUNs. The system provides a loose traffic condition for

medium- and low-load LUNs based on the load status. The system suppresses

Disk the traffic of high-load LUNs until the system releases sufficient resources to

enable the traffic of all LUNs to reach the lower limit.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 31

Solution-Level Reliability — 3DC

Module System Solution

Level Level Level

Cascaded Architecture Parallel Architecture

Intra-city DR center Production Intra-city DR center

Production

B center A B

center A

A A

Synchronous/ Synchronous/

Asynchronous Asynchronous

replication replication

SAN Asynchronous SAN SAN

SAN

replication

A A' A A'

Remote DR Remote DR

center C center C

1. The intra-city DR center undertakes

remote replication tasks and has Asynchronous

replication

minor impact on services in the

production center.

If the storage system in the

2. If the storage system in the production

SAN production center malfunctions, SAN

center malfunctions, the intra-city DR

the intra-city or remote DR center

center takes over services and keeps

A" can quickly take over services.

a data replication relationship with the A"

remote DR center.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 34

Contents

1 Storage Reliability Metrics

2 Module-Level Reliability

3 System-Level Reliability

4 Solution-Level Reliability

5 O&M Reliability

6 Reliability Tests and Certifications

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 35

O&M Reliability — Component Upgrade

System Solution

O&M

Level Level

Host

Upgrade Transparent to Hosts

Host Controller enclosure Upgrade transparent to hosts: Front-end ports are

connection connected to hosts. Components in a controller

Front-end interface module Front-end interface module

keeping exchange I/Os with hosts through front-end interface

Connection keeping Connection keeping

modules. The components do not directly connect to

hosts. The controller upgrade does not interrupt the

Phase 1 Controller Controller Controller Controller host connection.

A B C D

Component upgrade: The system upgrade is divided

Component Component Component Component

1 1 1 1 into two phases. The software components (processes)

with redundant units are upgraded first. After the

Component Component Component Component software packages are uploaded and the processes

2 2 2 2

are restarted, the second phase is triggered.

Component Component Component Component Zero performance loss: The restart time of each

N N N N

Phase 2 software component is less than 1 second. The front-

end interface module returns BUSY for failed I/Os

during the upgrade. The host retries the failed I/Os, and

the performance is restored to 100% within 2 seconds.

Short upgrade duration: No host compatibility issue is

Disk enclosure involved. Host information does not need to be

collected for evaluation. The entire storage system can

... be upgraded within 10 minutes as controllers do not

Fast restart

need to be restarted.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 36

O&M Reliability — Rolling Upgrade

System Solution

O&M

Level Level

Rolling Upgrade

Phase-based upgrade: By default, the system

performs rolling upgrade in two phases. Before

upgrading a controller, services on the controller

are switched to the other controllers in the same

controller enclosure to ensure service continuity

Switch during the upgrade.

Upgrading controllers Upgrading controllers Resumable upgrade: If the upgrade fails due to

I/O path switch

in phase 2 in phase 1 system overload, software bugs, or other faults,

you can continue the upgrade from the point

Controller C Controller D where the upgrade fails instead of re-upgrading

Controller A Controller B the system. This reduces the upgrade time.

Step 1 Switch Step 2 Upgrade Service Step 1 Switch Step 2 Upgrade Rollback upon failure: If the upgrade fails due

services (supporting firmware (supporting switchover services (supporting firmware (supporting

resumable resumable resumable resumable to system overload, software bugs, or other

upgrade/rollback). upgrade/rollback). upgrade/rollback). upgrade/rollback).

faults and the faults cannot be rectified within a

Step 4 The Step 4 The

controllers fail to be Step 3 Upgrade controllers fail to be Step 3 Upgrade short period of time, roll back the controller that

upgraded after being software (supporting upgraded after being software (supporting

restarted (supporting resumable restarted (supporting resumable has been upgraded or fails to be upgraded to the

resumable upgrade upgrade/rollback). resumable upgrade upgrade/rollback).

and rollback). and rollback). source version.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 37

Contents

1 Storage Reliability Metrics

2 Module-Level Reliability

3 System-Level Reliability

4 Solution-Level Reliability

5 O&M Reliability

6 Reliability Tests and Certifications

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 39

Reliability Tests and Certifications

Hardware Exercises and Certifications

Type Purpose Authentication Result

Ensure that the product meets the requirements of the Met the mandatory

corresponding EMC standards, real-world admission certification

EMC test On Apr. 20, 2013, Ya'an of Sichuan province encountered a 7.0-

electromagnetic environment, and device or system requirements of each

compatibility. country and manitude earthquake with an intensity of 9. The affected area

Ensure the personal safety when using the products, organization:

reduce the injury caused by electric shock, fire, heat, • China: CCC amounted to 18,682 km2.

Safety test mechanical damage, radiation, chemical damage, and • European

IT systems of the Ya'an TV Station have undergone the

energy, and meet the admission requirements of each Commission: CE

country. • USA: FCC catastrophe. Two S2600 storage systems deployed by Ya'an

• Japan: VCCI-A

Environment Check that the products meet the requirements. • Russia: CU Health Bureau were also proven to be powerfully shockproof in

(climatic) test Expose defects in design, process, and materials. • ...

Passed some optional this earthquake.

Improve the environment adaptability of the products certifications, such as

to mechanical stress during storage and transportation China's:

Environmental to ensure qualified product appearance, structure, and • 9-intensity

(mechanical) test performance and ensure that the product can Earthquake 9-intensity earthquake resistance certification

withstand the adverse impact caused by external

mechanical stress on the equipment.

resistance

certification (Huawei Only)

Find the weak points of the products and improve • China Environmental

HALT test Labeling certification

product reliability.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 40

Thank You

www.huawei.com

Copyright © 2019 Huawei Technologies Co., Ltd. 2019. All rights reserved.

All logos and images displayed in this document are the sole property of their respective copyright holders. No endorsement, partnership, or affiliation is suggested or

implied. The information in this document may contain predictive statements including, without limitation, statements regarding the future financial and operating results,

future product portfolio, new technology, etc. There are a number of factors that could cause actual results and developments to differ materially from those expressed

or implied in the predictive statements. Therefore, such information is provided for reference purpose only and constitutes neither an offer nor an acceptance. Huawei

may change the information at any time without notice.

HUAWEI TECHNOLOGIES CO., LTD. Huawei Confidential 41

Potrebbero piacerti anche

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeDa EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeValutazione: 4 su 5 stelle4/5 (5794)

- The Life of An American Jew by Jack BernsteinDocumento14 pagineThe Life of An American Jew by Jack BernsteinFrancisco MonteiroNessuna valutazione finora

- Shoe Dog: A Memoir by the Creator of NikeDa EverandShoe Dog: A Memoir by the Creator of NikeValutazione: 4.5 su 5 stelle4.5/5 (537)

- ANC UmrabuloDocumento224 pagineANC UmrabuloCityPressNessuna valutazione finora

- Akash Company IntroductionDocumento16 pagineAkash Company IntroductionPujitNessuna valutazione finora

- The Yellow House: A Memoir (2019 National Book Award Winner)Da EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Valutazione: 4 su 5 stelle4/5 (98)

- DLL Week 7 July 15-19 2019Documento4 pagineDLL Week 7 July 15-19 2019Maryknol AlvarezNessuna valutazione finora

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceDa EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceValutazione: 4 su 5 stelle4/5 (895)

- City by City Special ReportsDocumento12 pagineCity by City Special ReportsMahima AgarwalNessuna valutazione finora

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersDa EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersValutazione: 4.5 su 5 stelle4.5/5 (344)

- Written Results of National Defence Academy and Naval Academy Examination (I), 2019Documento18 pagineWritten Results of National Defence Academy and Naval Academy Examination (I), 2019Zee News100% (1)

- The Little Book of Hygge: Danish Secrets to Happy LivingDa EverandThe Little Book of Hygge: Danish Secrets to Happy LivingValutazione: 3.5 su 5 stelle3.5/5 (399)

- Exim Policies and ProceduresDocumento9 pagineExim Policies and Proceduresapi-37637770% (1)

- Grit: The Power of Passion and PerseveranceDa EverandGrit: The Power of Passion and PerseveranceValutazione: 4 su 5 stelle4/5 (588)

- Dirty DozenDocumento39 pagineDirty DozenMelfred RicafrancaNessuna valutazione finora

- The Emperor of All Maladies: A Biography of CancerDa EverandThe Emperor of All Maladies: A Biography of CancerValutazione: 4.5 su 5 stelle4.5/5 (271)

- CCTV NoticeDocumento2 pagineCCTV NoticeAnadi GuptaNessuna valutazione finora

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaDa EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaValutazione: 4.5 su 5 stelle4.5/5 (266)

- 5e Lesson Plan TemplateDocumento4 pagine5e Lesson Plan Templateapi-234230877Nessuna valutazione finora

- Never Split the Difference: Negotiating As If Your Life Depended On ItDa EverandNever Split the Difference: Negotiating As If Your Life Depended On ItValutazione: 4.5 su 5 stelle4.5/5 (838)

- 終戰詔書Documento7 pagine終戰詔書EidadoraNessuna valutazione finora

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryDa EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryValutazione: 3.5 su 5 stelle3.5/5 (231)

- The International Journal of Advanced Manufacturing Technology - Submission GuidelinesDocumento21 pagineThe International Journal of Advanced Manufacturing Technology - Submission GuidelinesJohn AngelopoulosNessuna valutazione finora

- Amber Limited Main PPT SHRMDocumento10 pagineAmber Limited Main PPT SHRMimadNessuna valutazione finora

- On Fire: The (Burning) Case for a Green New DealDa EverandOn Fire: The (Burning) Case for a Green New DealValutazione: 4 su 5 stelle4/5 (73)

- Daft12ePPT Ch08Documento24 pagineDaft12ePPT Ch08Alex SinghNessuna valutazione finora

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureDa EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureValutazione: 4.5 su 5 stelle4.5/5 (474)

- Bruner Theory of LearningDocumento2 pagineBruner Theory of LearningAndy Pierce100% (2)

- Team of Rivals: The Political Genius of Abraham LincolnDa EverandTeam of Rivals: The Political Genius of Abraham LincolnValutazione: 4.5 su 5 stelle4.5/5 (234)

- Transforming OrganizationsDocumento11 pagineTransforming OrganizationsAdeniyi AleseNessuna valutazione finora

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyDa EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyValutazione: 3.5 su 5 stelle3.5/5 (2259)

- Course Guide & Course OverviewDocumento29 pagineCourse Guide & Course OverviewVonna TerribleNessuna valutazione finora

- BFHI GuidelineDocumento876 pagineBFHI GuidelinenopiyaniNessuna valutazione finora

- 5.21.20 Letter To BOP On USP Lee and FCI PetersburgDocumento2 pagine5.21.20 Letter To BOP On USP Lee and FCI PetersburgRJNessuna valutazione finora

- Transit Capacity and Quality of Service Manual, Third EditionDocumento2 pagineTransit Capacity and Quality of Service Manual, Third EditionkhanNessuna valutazione finora

- Informative SpeechDocumento2 pagineInformative SpeechArny MaynigoNessuna valutazione finora

- The Unwinding: An Inner History of the New AmericaDa EverandThe Unwinding: An Inner History of the New AmericaValutazione: 4 su 5 stelle4/5 (45)

- QP 12 BST 3 3Documento6 pagineQP 12 BST 3 3j sahaNessuna valutazione finora

- Teacher Instructional Improvement Plan 2011Documento2 pagineTeacher Instructional Improvement Plan 2011Hazel Joan Tan100% (2)

- A Roadmap Towards The Implementation of An Efficient Online Voting System in BangladeshDocumento5 pagineA Roadmap Towards The Implementation of An Efficient Online Voting System in BangladeshNirajan BasnetNessuna valutazione finora

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreDa EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreValutazione: 4 su 5 stelle4/5 (1090)

- 2010 Metrobank Foundation Outstanding TeachersDocumento10 pagine2010 Metrobank Foundation Outstanding Teachersproffsg100% (1)

- The Economic Transformation Programme - Chapter 8Documento26 pagineThe Economic Transformation Programme - Chapter 8Encik AnifNessuna valutazione finora

- Matrix ImsDocumento6 pagineMatrix Imsmuhammad AndiNessuna valutazione finora

- Intellectual Property Code Edited RFBTDocumento135 pagineIntellectual Property Code Edited RFBTRobelyn Asuna LegaraNessuna valutazione finora

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)Da EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Valutazione: 4.5 su 5 stelle4.5/5 (120)

- Vocational Centre at Khurja: Sunderdeep College of Architecture (Dasna, Ghaziabad, Uttar Pradesh)Documento45 pagineVocational Centre at Khurja: Sunderdeep College of Architecture (Dasna, Ghaziabad, Uttar Pradesh)NAZEEF KHANNessuna valutazione finora

- Wireless Energy Transfer Powered Wireless Sensor Node For Green Iot: Design, Implementation & EvaluationDocumento27 pagineWireless Energy Transfer Powered Wireless Sensor Node For Green Iot: Design, Implementation & EvaluationManjunath RajashekaraNessuna valutazione finora

- Her Body and Other Parties: StoriesDa EverandHer Body and Other Parties: StoriesValutazione: 4 su 5 stelle4/5 (821)