
Revista del Centro de Investigación. Universidad La Salle

Universidad La Salle, Distrito Federal, México

revista@ci.ulsa.mx

ISSN (print version): 1405-6690

2004

Eduardo Gómez Ramírez / Ferran Mazzanti / Xavier Vilasis Cardona

CELLULAR NEURAL NETWORKS LEARNING USING GENETIC ALGORITHM

Revista del Centro de Investigación. Universidad La Salle, July-December, year/vol. 6, no. 021, pp. 25-31

Red de Revistas Científicas de América Latina y el Caribe, España y Portugal

Universidad Autónoma del Estado de México

Research Article

Cellular Neural Networks Learning using Genetic Algorithm

Eduardo Gómez Ramírez, Ferran Mazzanti* & Xavier Vilasis Cardona*

Laboratorio de Investigación y Desarrollo de Tecnología Avanzada (LIDETEA), UNIVERSIDAD LA SALLE

E-mail: egr@ci.ulsa.mx

*Departament d'Electrónica, Enginyeria i Arquitectura La Salle, U. Ramon Llull, Pg. Bonanova 8, 08022 Barcelona, Spain

E-mail: mazzanti@salleURL.edu xvilasis@salleURL.edu

Received: July 2002. Accepted: August 200

ABSTRACT

In this paper an alternative approach to CNN learning using a Genetic Algorithm is presented. The results show that it is possible to find different solutions for the cloning templates that fulfill the same objective condition. Different image processing examples show the results of the proposed algorithm.

Keywords: Cellular neural network, learning, genetic algorithm, cloning templates, image processing.

RESUMEN

In this work an alternative for learning in Cellular Neural Networks using a genetic algorithm is proposed. The simulations showed that it is possible to find different combinations of weight matrices that yield the same results. Several image processing examples are shown to illustrate the behavior of the algorithm.

Palabras clave (keywords): Cellular neural networks, learning, genetic algorithm, cloning template, image processing.

INTRODUCTION

Cellular Neural Networks (CNNs)¹ are arrays of dynamical artificial neurons (cells) that are locally interconnected. This essential characteristic has made possible the hardware implementation of large networks on a single VLSI chip² and in optical computers.³ The arrangement of neurons in two-dimensional grids has made CNNs a particularly suitable tool for image processing.

Determining the correct set of weights for CNNs is traditionally a difficult task, essentially because gradient descent methods quickly get stuck in local minima.⁴ One finally resorts either to the template library or to sophisticated elaborations requiring deep insight into CNN behavior.⁵ Among global optimization methods for learning, Genetic Algorithms have proved to be a popular choice.⁶ However, authors usually present a genetic algorithm that finds only one of the matrices. Doan, Halgamuge et al.⁷ show experiments only for specific cases, and the examples presented possibly work only under those constraints, which of course reduce the solution space.

In this work a genetic algorithm approach is developed to compute all the parameters of a Cellular Neural Network (weights and current). The results show that it is possible to find many different matrices that fulfill the objective condition. Different image processing examples are presented to illustrate the procedure developed.

The structure of the paper is the following: Section 2 describes the theory of Cellular Neural Networks, Section 3 the proposed genetic algorithm, and Section 4 the way the algorithm was applied to find the optimal parameters for image processing.

¹ L. O. Chua & L. Yang: "Cellular Neural Networks: Theory", IEEE Trans. Circuits Syst., vol. 35, pp. 1257-1272, 1988. L. O. Chua & L. Yang: "Cellular Neural Networks: Applications", IEEE Trans. Circuits Syst., vol. 35, pp. 1273-1290, 1988.

² Liñan, L., Espejo, S., Dominguez-Castro, R., Rodriguez-Vazquez, A. ACE4K: an analog I/O 64x64 visual microprocessor chip with 7-bit analog accuracy. Int. J. of Circ. Theory and Appl., in press, 2002. Kananen, A., Paasio, A., Laiho, M., Halonen, K. CNN applications from the hardware point of view: video sequence segmentation. Int. J. of Circ. Theory and Appl., in press, 2002.

³ Mayol W., Gómez E.: "2D Sparse Distributed Memory-Optical Neural Network for Pattern Recognition". IEEE International Conference on Neural Networks, Orlando, Florida, June 28 to July 2, 1994.

⁴ M. Balsi, "Hardware Supervised Learning for Cellular and Hopfield Neural Networks", Proc. of World Congress on Neural Networks, San Diego, Calif., June 4 to 9, pp. III, 451-456, 1994.

⁵ Zarandy, A., The Art of CNN Template Design. Int. J. of Circ. Th. Appl., pp. 5-23, 1999.

⁶ Kozek, T., Roska, T., Chua, L. O. Genetic Algorithm for CNN Template Learning. IEEE Trans. Circ. Syst., pp. 392-402, 1993. P. López, M. Balsi, D. L. Vilariño, D. Cabello, Design and Training of Multilayer Discrete Time Cellular Neural Networks for Antipersonnel Mine Detection using Genetic Algorithms, Proc. of Sixth IEEE International Workshop on Cellular Neural Networks and their Applications, Catania, Italy, May 23 to 25, 2000. Taraglio S. & Zanela A., Cellular Neural Networks: a genetic algorithm for parameters optimization in artificial vision applications, CNNA-96, Proceedings, Fourth IEEE International Workshop, pp. 315-320, June 24 to 26, Seville, Spain, 1996.

⁷ Doan, M. D., Halgamuge, S., Glesner M. & Braunsforth, Application of Fuzzy, GA and Hybrid Methods to CNN Template Learning, CNNA-96, Proceedings, Fourth IEEE International Workshop, pp. 327-332, June 24 to 26, Seville, Spain, 1996.

CELLULAR NEURAL NETWORKS

As originally defined, a CNN is an array of identical non-linear dynamic circuits. This array can be of different types depending on the way the cells are connected or on the class of the neighborhood.

A. Mathematical Model

The simplified mathematical model that defines this array⁸ is the following:

$$\frac{dx_c}{dt} = -x_c(t) + \sum_{d \in N_r(c)} a_d^c\, y_d(t) + \sum_{d \in N_r(c)} b_d^c\, u_d + i_c \qquad \text{(Eq. 1)}$$

$$y_c(t) = \frac{1}{2}\left( \left| x_c(t) + 1 \right| - \left| x_c(t) - 1 \right| \right) \qquad \text{(Eq. 2)}$$

where $x_c$ represents the state of the cell $c$, $y_c$ its output and $u_c$ its input. The state of each cell is controlled by the inputs and outputs of the adjacent cells inside the r-neighborhood $N_r(c)$. The outputs are fed back and multiplied by $a_d^c$, and the inputs are multiplied by the control parameters $b_d^c$. This is the reason that $a_d^c$ is called retro (feedback) and $b_d^c$ is called control. The value $i_c$ is constant and is used for adjusting the threshold.⁹ These coefficients are invariant to translations and are called the cloning template.

The output $y_c$ is obtained by applying Eq. 2. This equation limits the value of the output to the range [-1, 1], in the same way the activation function does in classical ANN models (Fig. 1).

Figure 1. Output function (output y versus state x; x axis from -4 to 4, y axis from -1.5 to 1.5).

⁸ Harrer H. & Nossek J.: "Discrete-Time Cellular Neural Networks". Cellular Neural Networks. Edited by T. Roska & J. Vandewalle. John Wiley & Sons, 1993.

⁹ This coefficient is equivalent to the input bias in other artificial neural networks.
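The output function of Eq. 2 and the state dynamics of Eq. 1 can be illustrated with a minimal Python sketch. It is not taken from the paper: the function names, the forward-Euler integrator, the step size `dt` and the constant drive `k` (standing in for the summed feedback, control and bias terms of one cell) are assumptions made only for illustration.

```python
def output(x):
    """Piecewise-linear output of Eq. 2: 0.5 * (|x + 1| - |x - 1|)."""
    return 0.5 * (abs(x + 1.0) - abs(x - 1.0))


def euler_step(x, k, dt=0.1):
    """One forward-Euler step of dx/dt = -x + k.

    k is an assumed constant that lumps together the feedback, control
    and bias terms of Eq. 1 for a single cell.
    """
    return x + dt * (-x + k)


# The output is linear inside [-1, 1] and saturates outside it.
samples = [output(x) for x in (-4.0, -0.5, 0.0, 0.5, 4.0)]

# With a constant drive, the state relaxes toward k and the output saturates.
x = 0.0
for _ in range(200):
    x = euler_step(x, k=2.0)
y = output(x)
```

With a fixed drive the state settles at the drive value, so the final output depends only on the summed terms.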

Rev. Centro Inv. (Méx) Vol. 6, Núm. 21, Jul.-Dic. 2003

B. Discrete Mathematical Model

The mathematical model given above can be represented by the following expression:

$$\frac{dx_c}{dt} = -x_c(t) + k_c \qquad \text{(Eq. 3)}$$

where:

$$k_c = \sum_{d \in N_r(c)} a_d^c\, y_d(t) + \sum_{d \in N_r(c)} b_d^c\, u_d + i_c$$

Because the final state is obtained by evaluating $x(\infty)$, the model can be simplified as:

$$x_c(k) = \sum_{d \in N_r(c)} a_d^c\, y_d(k) + \sum_{d \in N_r(c)} b_d^c\, u_d + i_c \qquad \text{(Eq. 4)}$$

Some authors also consider a modification of the output (Eq. 2), as follows [4]:

$$y_c(k) = f(x_c(k-1)), \qquad y_c(k) = \begin{cases} 1 & \text{if } x_c(k-1) > 0 \\ -1 & \text{if } x_c(k-1) < 0 \end{cases} \qquad \text{(Eq. 5)}$$

However, the simplification made (Eq. 4) can also be used with the function of Eq. 2¹⁰ [1]. Equation (4) can be represented using the convolution operator as:

$$X_k = A * Y_k + B * U_k + I \qquad \text{(Eq. 6)}$$

where the matrices A and B correspond to the 180° rotation of the corresponding coefficients $a_d^c$ and $b_d^c$. The previous step is not necessary if the matrix has the property $A = A^T$.

The cloning template is, in some cases, a set of different combinations that fulfill the same conditions, i.e., there are different matrices that can solve the same problem. This can be a problem with the use of gradient error techniques, as in these cases it is not possible to find an optimal solution. One alternative is the use of evolutionary computation techniques, which can be applied to find the different solutions or alternatives to the same problem.

The next section describes the methodology of the Genetic Algorithm used in this work.

¹⁰ M. Alencastre-Miranda, A. Flores-Méndez & E. Gómez Ramírez: "Comparación entre Métodos Clásicos y Redes neuronales celulares para el análisis de imágenes". XXXVIII Congreso Nacional de Física. Zacatecas, Zacatecas, México, October 16 to 20, 1995.

GENETIC ALGORITHM

A Genetic Algorithm (GA) is a knowledge model inspired by some of the evolution mechanisms observed in nature. A GA usually follows the cycle below¹¹ (Fig. 2):

• Generation of an initial population in a random way.
• Evaluation of the fitness, or of some objective function, of every individual that belongs to the population.
• Creation of a new population by the execution of operations, like crossover and mutation, over individuals whose fitness or profit value has been measured.
• Elimination of the former population and iteration using the new one until the termination criterion is fulfilled or a certain number of generations has been reached.

In this work the crossover or sexual recombination, the mutation, and other special processes that we call 'add parents'¹² and 'add random parents' are used. The next sections describe these processes.

Figure 2. Operators of Genetic Algorithm

¹¹ Alander J. T., An Indexed Bibliography of Genetic Algorithms: Years 1957, 1993, 1994, Art of CAD Ltd. Bedner I., Genetic Algorithms and Genetic Programming at Stanford, Stanford Bookstore, 1997.

¹² E. Gómez-Ramírez, A. Poznyak, A. González Yunes & M. Avila-Alvarez. Adaptive Architecture of Polynomial Artificial Neural Network to Forecast Nonlinear Time Series. CEC99 Special Session on Time Series Prediction. Hotel Mayflower, Washington D.C., USA, July 6 to 9, 1999.
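The generic cycle listed above (random initial population, evaluation, crossover and mutation, replacement) can be sketched in Python as follows. This is only an illustration under stated assumptions, not the authors' implementation: it uses a plain one-point crossover rather than the multipoint operator of this paper, and the function name `evolve`, its parameters, the toy target and the Hamming-distance objective are all hypothetical.

```python
import random


def evolve(fitness, n_bits, pop_size=20, n_parents=4, p_mut=0.05,
           generations=100, seed=0):
    """Minimize `fitness` over bit strings with a basic GA cycle."""
    rng = random.Random(seed)
    # 1. Random initial population of bit strings.
    pop = [[rng.randint(0, 1) for _ in range(n_bits)]
           for _ in range(pop_size)]
    for _ in range(generations):
        # 2. Evaluate every individual (lower is better), keep the best ones.
        parents = sorted(pop, key=fitness)[:n_parents]
        if fitness(parents[0]) == 0:
            break  # termination criterion reached
        # 3. New individuals: one-point crossover, then bit-flip mutation.
        children = []
        while len(children) < pop_size - n_parents:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_bits)
            child = a[:cut] + b[cut:]
            child = [bit ^ 1 if rng.random() <= p_mut else bit
                     for bit in child]
            children.append(child)
        # 4. Replace the old population; the parents are carried over.
        pop = parents + children
    return min(pop, key=fitness)


# Toy objective (an assumption): Hamming distance to a fixed target string.
TARGET = [1, 0, 1, 1, 0, 0, 1, 0]


def hamming(individual):
    return sum(a != b for a, b in zip(individual, TARGET))


best = evolve(hamming, n_bits=len(TARGET))
```

Carrying the parents over unchanged means the best objective value found so far can never get worse from one generation to the next.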


A. Crossover

The crossover operator is characterized by the combination of the genetic material of the individuals that are selected according to their good performance (objective function).

To explain the multipoint crossover, for each fixed generation number $g = 1, \ldots, n_g$, where $n_g$ is the total number of generations, let us introduce the matrix $F_g$, which is the set of parents of a given population. This matrix is Boolean, of dimension $F_g: n_p \times n_b$, where $n_p$ is the number of parents of the population at generation $g$ and $n_b$ is the size of every array (chromosome). Let $C(F_g, n_t)$ be the crossover operator, defined as the combination of the information of the set of parents considering the number of intervals $n_t$ of each individual and the number of sons $n_s$ such that:

$$n_s = n_p^{\,n_t} \qquad \text{(Eq. 7)}$$

Then $C(F_g, n_t): n_p \times n_b \to n_s \times n_b$. To show how the crossover operator can be applied, the following example is introduced. Let $F_g$ have $n_p = 2$ and $n_t = 2$. This means that the array (the information of one father) is divided into two sections, and every section is determined with $a_i$ and $b_i$ respectively for $i = 1, \ldots, n_t$. It is important to point out that with this operator the parents $F_g$ of the population $g$ are included in the result of the crossover:

$$F_g = \begin{bmatrix} M_1 \\ M_2 \end{bmatrix}, \quad M_1 = [a \;\; b], \quad M_2 = [c \;\; d] \;\Rightarrow\; C(F_g) = \begin{bmatrix} [a \;\; b] \leftarrow \\ [a \;\; d] \\ [c \;\; b] \\ [c \;\; d] \leftarrow \end{bmatrix}$$

The rows marked with arrows are the parents $M_1$ and $M_2$ themselves.

Figure 3. Multipoint Crossover Operator

For the problem of CNN learning, every section of one father can be represented by one column or one row of one matrix of the cloning template (matrices $a_d^c$ and $b_d^c$).

B. Mutation

The mutation operator just changes some bits that are selected at random with a fixed probability factor $P_m$; in other words, we just vary the components of some genes. This operator is extremely important, as it ensures the maintenance of diversity inside the population, which is basic for evolution. This operator $M: n_s \times n_b \to n_s \times n_b$ changes a specific population with probability $P_m$ in the following way:

$$M(F_{ij}, P_m) = \begin{cases} \overline{F}_{ij} & r(\omega) \le P_m \\ F_{ij} & r(\omega) > P_m \end{cases} \qquad \text{(Eq. 8)}$$

where $\overline{F}_{ij}$ denotes the changed (complemented) bit and $r(\omega)$ is a random value compared against $P_m$.

C. Add Parents Mutated

In this part the parents $F_g$ are mutated and added to the result of the crossover process; the population $A_g$ at generation $g$ is then obtained as:

$$A_g = \begin{bmatrix} C(F_g) \\ M(F_g, P_m) \end{bmatrix} \qquad \text{(Eq. 9)}$$

This step and the previous one ensure the convergence of the algorithm, because every generation contains at least the best individual obtained in the process. With this step the original and mutated parents are included in generation $g$, and with some probability the individuals tend to the optimal one.

D. Selection Process

The Selection Process $S_g$ computes the objective function $O_g$, which represents a specific criterion to maximize or minimize, and selects the best $n_p$ individuals of $A_g$ as:

$$S_g(A_g, n_p) = \min{}^{n_p}\, O_g(A_g) \qquad \text{(Eq. 10)}$$

Then the parents of the next generation are calculated by:

$$F_{g+1} = S_g(A_g, n_p) \qquad \text{(Eq. 11)}$$

Summarizing, the Genetic Algorithm can be described with the following steps:

1. For the initial condition $g = 0$, select $A_0$ in a random way, such that $A_0: n_s \times n_b$.
2. Compute $F_1 = S_0(A_0)$.
3. Obtain $A_g$.
4. Calculate $S_g$.
5. Return to step 3 until the maximum number of generations is reached or one of the individuals of $S_g$ obtains the minimal desired value of $O_g$.

E. Add Random Parents

To avoid local minima a new scheme is introduced, called 'add random parents'. If the best individual of one generation is the same as in the previous one, a new random individual is included as a parent of the next generation. This step increases the population, because when crossover is applied with the new random parent the number of sons increases. This step is tantamount to having a larger mutation probability and to searching new points of the solution space in the next generation.

To apply the previous theory of GA, every individual is the corresponding pair of matrices A ($a_d^c$) and B ($b_d^c$). The crossover is computed using every column of the matrix, as explained previously, and the mutation is applied to every component of the matrix. In the current case only mutations are used.

APPLICATION IN IMAGE PROCESSING

The procedure used to train the network was:

1. Generate the input patterns using a matrix known in image processing for finding the different kinds of lines (horizontal, vertical, diagonal) in different images.
2. Train the network with the GA methodology explained above.
3. Test the results using a new pattern.

The experiments were repeated to obtain different templates for the same problem. The parameters used are: 4,000 individuals in the initial population and 5 parents. The change in the value of every coefficient was evaluated using an increment of 0.125 to reduce the solution space.

Example 1 Horizontal Line Detector

Figures 4 and 5 show the input and output patterns used. These patterns were obtained using a well-known matrix:

$$M = \begin{bmatrix} 0 & -1 & 0 \\ 0 & 2 & 0 \\ 0 & -1 & 0 \end{bmatrix}$$

The parameters obtained are:

First Simulation:

$$A = \begin{bmatrix} -0.125 & 0 & 0 \\ 0 & 2.5 & 0.125 \\ -0.75 & -1.375 & 0.125 \end{bmatrix}, \qquad B = \begin{bmatrix} -0.125 & -2 & 0 \\ 0.375 & 2.75 & 0 \\ 0.375 & -0.875 & -0.25 \end{bmatrix}, \qquad I = -2.875$$
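As an illustration of how an individual (A, B, I) might be scored during training, the sketch below iterates the discrete model of Eq. 4 with the output function of Eq. 2 and counts the deviation from a target pattern. Several choices here are assumptions and not statements about the authors' code: the templates are applied directly as neighborhood coefficients (correlation form), pixels outside the image count as 0, the initial output is 0, the target is produced by using the known matrix M as a pure control template, and the sum of absolute differences serves as the objective. All function names are hypothetical.

```python
def saturate(x):
    """Output function of Eq. 2."""
    return 0.5 * (abs(x + 1.0) - abs(x - 1.0))


def neighborhood_sum(template, image, r, c):
    """Weighted sum over the 3x3 neighborhood of pixel (r, c).

    Pixels outside the image are treated as 0 (assumed boundary rule).
    """
    rows, cols = len(image), len(image[0])
    total = 0.0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            rr, cc = r + dr, c + dc
            if 0 <= rr < rows and 0 <= cc < cols:
                total += template[dr + 1][dc + 1] * image[rr][cc]
    return total


def run_cnn(A, B, I, U, steps=5):
    """Iterate x = A.y + B.u + I followed by y = saturate(x)."""
    rows, cols = len(U), len(U[0])
    Y = [[0.0] * cols for _ in range(rows)]  # assumed initial output
    for _ in range(steps):
        Y = [[saturate(neighborhood_sum(A, Y, r, c)
                       + neighborhood_sum(B, U, r, c) + I)
              for c in range(cols)] for r in range(rows)]
    return Y


def objective(A, B, I, U, target):
    """Sum of absolute output errors (an assumed objective function)."""
    Y = run_cnn(A, B, I, U)
    return sum(abs(y - t) for row_y, row_t in zip(Y, target)
               for y, t in zip(row_y, row_t))


ZERO = [[0.0] * 3 for _ in range(3)]
M = [[0.0, -1.0, 0.0], [0.0, 2.0, 0.0], [0.0, -1.0, 0.0]]
# Toy input with one horizontal line; +1 marks line pixels (assumed coding).
U = [[-1, -1, -1, -1],
     [1, 1, 1, 1],
     [-1, -1, -1, -1],
     [-1, -1, -1, -1]]

target = run_cnn(ZERO, M, 0.0, U)
score_same = objective(ZERO, M, 0.0, U, target)    # template that made the target
score_zero = objective(ZERO, ZERO, 0.0, U, target)  # all-zero template
```

An individual whose objective value is 0 reproduces the target exactly, which is the stopping condition in step 5 of the summary above.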


Second Simulation:

$$A = \begin{bmatrix} 4.5 & -2.25 & -0.5 \\ -1.625 & 0.75 & 3.375 \\ 2.125 & -0.875 & -0.875 \end{bmatrix}, \qquad B = \begin{bmatrix} 0.75 & -7.125 & -0.625 \\ 2.625 & -0.875 & 2.25 \\ -3.125 & -0.875 & 2.375 \end{bmatrix}, \qquad I = 0.625$$

Figure 4. Input and Output patterns for CNN Learning

Figure 5. Input and Output Patterns for CNN Test

Example 2 Vertical Line Detector

Figures 6 and 7 show the input and output patterns used. These patterns were obtained using a well-known matrix:

$$M = \begin{bmatrix} 0 & 0 & 0 \\ -1 & 2 & -1 \\ 0 & 0 & 0 \end{bmatrix}$$

In this case constraints were imposed so that only the matrix B is selected. The parameters obtained are:

First Simulation:

$$A = \begin{bmatrix} 0 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{bmatrix}, \qquad B = \begin{bmatrix} 0 & 0 & 0 \\ -1 & 1.75 & -0.75 \\ 0 & 0 & 0 \end{bmatrix}, \qquad I = 0$$

Figure 6. Input and Output patterns for CNN Learning
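A small worked check shows how a template different from the known matrix can still fulfill the same condition. The sketch assumes bipolar pixels (values -1 and +1) and uses A = 0 and I = 0 as reported for this simulation, so only the middle rows of M and B act on a pixel and its left and right neighbors; the function and variable names are hypothetical.

```python
from itertools import product


def saturate(x):
    """Output function of Eq. 2."""
    return 0.5 * (abs(x + 1.0) - abs(x - 1.0))


M_ROW = (-1.0, 2.0, -1.0)    # middle row of the known matrix M
B_ROW = (-1.0, 1.75, -0.75)  # middle row of the matrix B found by the GA


def response(row, pixels):
    """Saturated output for one (left, center, right) neighborhood."""
    return saturate(sum(w * p for w, p in zip(row, pixels)))


# Compare both templates on all eight bipolar neighborhoods.
neighborhoods = list(product((-1.0, 1.0), repeat=3))
agree = all(response(M_ROW, u) == response(B_ROW, u) for u in neighborhoods)
```

Under these assumptions the two rows give the same saturated output on every one of the eight neighborhoods, even though their weighted sums differ (for example 2 versus 1.5 for the pattern (+1, +1, -1)).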


Figure 7. Input and Output Patterns for CNN Test

CONCLUSIONS

The learning of CNNs is not a simple procedure, mainly due to the problem of computing the gradient of the estimation error. A Genetic Algorithm is one alternative to solve it, and it makes it possible to obtain different solutions for the same problem. In this work the use of 'add random parents' avoids getting trapped in local minima and improves the convergence of the algorithm. This is very important when the solution space includes all the elements of the cloning template.

REFERENCES

1. Chua, L. O. & Yang, L.: "Cellular Neural Networks: Theory", IEEE Trans. Circuits Syst., vol. 35, pp. 1257-1272, 1988.
2. Chua, L. O. & Yang, L.: "Cellular Neural Networks: Applications", IEEE Trans. Circuits Syst., vol. 35, pp. 1273-1290, 1988.
3. Liñan, L., Espejo, S., Dominguez-Castro, R., Rodriguez-Vazquez, A. ACE4K: an analog I/O 64x64 visual microprocessor chip with 7-bit analog accuracy. Int. J. of Circ. Theory and Appl., in press, 2002.
4. Kananen, A., Paasio, A., Laiho, M., Halonen, K. CNN applications from the hardware point of view: video sequence segmentation. Int. J. of Circ. Theory and Appl., in press, 2002.
5. Mayol W., Gómez E.: "2D Sparse Distributed Memory-Optical Neural Network for Pattern Recognition". IEEE International Conference on Neural Networks, Orlando, Florida, June 28 to July 2, 1994.
6. Balsi, M. "Hardware Supervised Learning for Cellular and Hopfield Neural Networks", Proc. of World Congress on Neural Networks, San Diego, Calif., June 4 to 9, pp. III, 451-456, 1994.
7. Zarandy, A., The Art of CNN Template Design. Int. J. of Circ. Th. Appl., pp. 5-23, 1999.
8. Kozek, T., Roska, T., Chua, L. O. Genetic Algorithm for CNN Template Learning. IEEE Trans. Circ. Syst., pp. 392-402, 1993.
9. López, P., M. Balsi, D. L. Vilariño, D. Cabello, Design and Training of Multilayer Discrete Time Cellular Neural Networks for Antipersonnel Mine Detection using Genetic Algorithms, Proc. of Sixth IEEE International Workshop on Cellular Neural Networks and their Applications, Catania, Italy, May 23 to 25, 2000.
10. Taraglio S. & Zanela A., Cellular Neural Networks: a genetic algorithm for parameters optimization in artificial vision applications, CNNA-96, Proceedings, Fourth IEEE International Workshop, pp. 315-320, June 24 to 26, Seville, Spain, 1996.
11. Doan, M. D., Halgamuge, S., Glesner M. & Braunsforth, Application of Fuzzy, GA and Hybrid Methods to CNN Template Learning, CNNA-96, Proceedings, Fourth IEEE International Workshop, pp. 327-332, June 24 to 26, Seville, Spain, 1996.
12. Harrer H. & Nossek J.: "Discrete-Time Cellular Neural Networks". Cellular Neural Networks. Edited by T. Roska & J. Vandewalle. John Wiley & Sons, 1993.
13. Alencastre-Miranda, M., A. Flores-Méndez & E. Gómez Ramírez: "Comparación entre Métodos Clásicos y Redes neuronales celulares para el análisis de imágenes". XXXVIII Congreso Nacional de Física. Zacatecas, Zacatecas, México, October 16 to 20, 1995.
14. Alander J. T., An Indexed Bibliography of Genetic Algorithms: Years 1957, 1993, 1994, Art of CAD Ltd. Bedner I., Genetic Algorithms and Genetic Programming at Stanford, Stanford Bookstore, 1997.
15. Gómez-Ramírez, E., A. Poznyak, A. González Yunes & M. Avila-Alvarez. Adaptive Architecture of Polynomial Artificial Neural Network to Forecast Nonlinear Time Series. CEC99 Special Session on Time Series Prediction. Hotel Mayflower, Washington D.C., USA, July 6 to 9, 1999.


Potrebbero piacerti anche

- EC Marketing Digital FT Ed 4 Modulo 5 PDFDocumento1 paginaEC Marketing Digital FT Ed 4 Modulo 5 PDFJonathan Cristhian Muñoz LeónNessuna valutazione finora

- Ensayo Sobre Las Tic en Las EmpresasDocumento5 pagineEnsayo Sobre Las Tic en Las Empresascarlosu2575% (4)

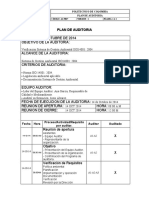

- Modelo - Plan de AuditoriaDocumento2 pagineModelo - Plan de AuditoriaochoasanchezNessuna valutazione finora

- Analisís de Producción Mécanizada enDocumento13 pagineAnalisís de Producción Mécanizada enkelmin4_895078258100% (1)

- Introducción Al Análisis de AlgoritmosDocumento42 pagineIntroducción Al Análisis de Algoritmosjkl316Nessuna valutazione finora

- Derivación NumericaDocumento3 pagineDerivación Numericajkl316Nessuna valutazione finora

- El Patrón MVC, Arquitectura Cliente Vs ServidorDocumento6 pagineEl Patrón MVC, Arquitectura Cliente Vs Servidorjkl316Nessuna valutazione finora

- Errores y Representacion en Punto FlotanteDocumento14 pagineErrores y Representacion en Punto Flotantejkl316Nessuna valutazione finora

- Algoritmo Del Calculo Del Polinomio de LagrangeDocumento1 paginaAlgoritmo Del Calculo Del Polinomio de Lagrangejkl316Nessuna valutazione finora

- Edinson Medina PDFDocumento211 pagineEdinson Medina PDFjkl316Nessuna valutazione finora

- ArbolbinDocumento44 pagineArbolbinjkl316Nessuna valutazione finora

- QRC Exp AlgebraicasDocumento2 pagineQRC Exp Algebraicasjkl316Nessuna valutazione finora

- LimitesDocumento3 pagineLimitesjkl316Nessuna valutazione finora

- Resumen de Linea RectaDocumento2 pagineResumen de Linea Rectajkl316Nessuna valutazione finora

- QRC Funciones RacionalesDocumento2 pagineQRC Funciones Racionalesjkl316Nessuna valutazione finora

- QRC Funciones IntroDocumento3 pagineQRC Funciones Introjkl316Nessuna valutazione finora

- Perros - Fichas de Animales en National GeographicDocumento18 paginePerros - Fichas de Animales en National GeographicMateriales la luz C.A.Nessuna valutazione finora

- Clasificación y Reciclaje de Latas de Aluminio yDocumento11 pagineClasificación y Reciclaje de Latas de Aluminio yAndrea LorenaaNessuna valutazione finora

- Diferentes Usos de Los MontacargasDocumento27 pagineDiferentes Usos de Los MontacargasLDRLNessuna valutazione finora

- Hoja de Vida Act 8 Abril 2011Documento7 pagineHoja de Vida Act 8 Abril 2011arqmiltonruanoNessuna valutazione finora

- Motores de Inducción Monofásicos y Máquinas EspecialesDocumento7 pagineMotores de Inducción Monofásicos y Máquinas EspecialesFernando AguilarNessuna valutazione finora

- Evaluacion Final - Escenario 8 - SISTEMAS DE INFORMACION EN GESTION LOGISTICADocumento11 pagineEvaluacion Final - Escenario 8 - SISTEMAS DE INFORMACION EN GESTION LOGISTICAOlger AndradeNessuna valutazione finora

- Clase 6 Futuridades Bajo Que Condiciones Establecemos Relaciones Con El PorvenirDocumento14 pagineClase 6 Futuridades Bajo Que Condiciones Establecemos Relaciones Con El PorvenirHernán Suárez HurevichNessuna valutazione finora

- Informe Previo 01 - Teresa Electrónica de PotenciaDocumento4 pagineInforme Previo 01 - Teresa Electrónica de PotenciaSergio MarceloNessuna valutazione finora

- Paginas para Descargar Libros GratisDocumento2 paginePaginas para Descargar Libros GratispascoelunicoNessuna valutazione finora

- T Ucsg Pre Tec Ieca 32Documento101 pagineT Ucsg Pre Tec Ieca 32Jostyn CarrionNessuna valutazione finora

- Motores DetalleDocumento2 pagineMotores DetalleNico VarosNessuna valutazione finora

- CH.5 - FODA Del CASODocumento9 pagineCH.5 - FODA Del CASOnataliaguzmanv2005Nessuna valutazione finora

- Caballo de FuerzaDocumento3 pagineCaballo de FuerzaMarielena MejiaNessuna valutazione finora

- 21 Hoja de Vida Academica MoradoDocumento2 pagine21 Hoja de Vida Academica MoradoCarlos Iván GutierrezNessuna valutazione finora

- Implementacion de Una Empresa de Palets de Madera PDFDocumento183 pagineImplementacion de Una Empresa de Palets de Madera PDFDiego SánchezNessuna valutazione finora

- Texto Final (PC01)Documento5 pagineTexto Final (PC01)GiancarloNessuna valutazione finora

- LABORATORIO - 3 Bootloader 16f877a PDFDocumento5 pagineLABORATORIO - 3 Bootloader 16f877a PDFKevin RojasNessuna valutazione finora

- 13 Evaluacion de ProyectosDocumento17 pagine13 Evaluacion de ProyectosArturo VelascoNessuna valutazione finora

- Premisas de Mantenimientos PreventivosDocumento15 paginePremisas de Mantenimientos PreventivosH V Vera RuizNessuna valutazione finora

- Desarrollo Java 8Documento15 pagineDesarrollo Java 8Adolfo Alejandro Tacue GalvisNessuna valutazione finora

- Reparacion de Mantenimiento de Heladeras Comerciales YCamaras FrigorificasDocumento3 pagineReparacion de Mantenimiento de Heladeras Comerciales YCamaras FrigorificasVictor Velasquez0% (1)

- Guia Del Op. de Sistemas TG Taurus70sDocumento241 pagineGuia Del Op. de Sistemas TG Taurus70sCarlos Antonio Aguilar AcostaNessuna valutazione finora

- Presupuesto Placa HuellaDocumento95 paginePresupuesto Placa HuellaDiego Alejandro Ricaurte DueñasNessuna valutazione finora

- DMMS U2 A3Documento4 pagineDMMS U2 A3Jose CenNessuna valutazione finora

- EvidencianVideonFabricarnunnmotornsencillonv2 645ef4fd1557f4a PDFDocumento3 pagineEvidencianVideonFabricarnunnmotornsencillonv2 645ef4fd1557f4a PDFNicolas LopezNessuna valutazione finora

- Jessica Villamar TareaU1Documento13 pagineJessica Villamar TareaU1Jessica Cecibel Villamar CevallosNessuna valutazione finora