IRS Unit-2

Uploaded by SiddarthModi

www.jntuworld.com

www.jwjobs.net

www.jntuworldupdates.org

UNIT- II

Cataloging and Indexing

2.1 History and Objectives of Indexing

2.2 Indexing Process

2.3 Automatic Indexing

2.4 Information Extraction

2.1 History and Objectives of Indexing:

Overview:

The indexing process determines which terms (concepts) can represent a particular item.

The transformation from the received item to the searchable data structure is called indexing.

Indexing may be manual or automatic.

Search: once the searchable data structure has been created, techniques must be defined that correlate the user-entered query statement to the set of items in the database to determine the items to be returned to the user.

Information extraction: extracts specific information to be normalized and entered into a structured database (DBMS).

It focuses on very specific concepts and contains a transformation process that modifies the extracted information into a form compatible with the end structured database.

Automatic File Build

Text summarization

One application of information extraction

Extracts larger contextual constructs (e.g., sentences) that are combined to form a summary of an item

2.1.1 History:

Indexing (originally called cataloging) is the oldest technique for identifying the contents of items to assist in their retrieval.

Subject indexing -> hierarchical subject indexing

Indexing creates a bibliographic citation in a structured file that references the original text.

The citation holds information about the item, keywords for the subjects of the item, and a constrained-length free-text field used for an abstract/summary.

Usually performed by professional indexers

Automatic indexing

Full text search


2.1.2 Objectives:

Represent the concepts within an item to help users find relevant information.

The full-text searchable data structures for items in the Document File provide a new class of indexing called total document indexing.

All of the words within the item are potential index descriptors of the subjects of the item.

Item normalization takes all possible words in an item and transforms them into processing tokens used in defining the searchable representation of the item.

Current systems have the ability to automatically weight the processing tokens based upon their potential importance in defining the concepts in the item.

Other objectives of indexing: ranking and item clustering
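As a rough illustration of item normalization, the sketch below turns the text of an item into processing tokens and counts them. The function name `processing_tokens`, the lowercase alphanumeric tokenization rule, and the small stop list are assumptions made for this example and are not taken from the notes.

```python
import re
from collections import Counter

# Hypothetical stop list; real systems use much larger ones.
STOP_WORDS = {"the", "of", "a", "an", "in", "and", "to", "is"}

def processing_tokens(text):
    """Normalize an item's text into a list of processing tokens."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return [w for w in words if w not in STOP_WORDS]

item = "The indexing of an item is the transformation of the item."
tokens = processing_tokens(item)
counts = Counter(tokens)  # raw frequencies, one possible input to weighting
print(tokens)  # ['indexing', 'item', 'transformation', 'item']
```

The raw counts are one possible starting point for the automatic weighting mentioned above.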

2.2 Indexing Process:

When an organization with multiple indexers decides to create a public or private index, some procedural decisions on how to create the index terms help the indexers and end users know what to expect in the index file.

Scope of the indexing: defines what level of detail the subject index will contain

Based on the usage scenarios of the end users

Linkage: the need to link index terms together in a single index for a particular concept

Needed when there are multiple independent concepts found within an item

2.2.1 Scope of Indexing :

When performed manually, reliably and consistently determining the bibliographic terms that represent the concepts in an item is extremely difficult.

The vocabulary domains of the indexer and the author may differ.

This results in different quality levels of indexing.

Two factors are involved in deciding at what level to index the concepts in an item:

Exhaustivity: the extent to which the different concepts in the item are indexed

Specificity: the preciseness of the index terms used

What portions of an item should be indexed?

Only the title, or the title and abstract? (This leads to low precision and recall.)

Weighting of index terms is not common in manual indexing.

Weighting is the process of assigning an importance to an index term's use in an item.

The weight should represent the degree to which the concept associated with the index term is represented in the item.


The weight should help in discriminating the extent to which the concept is discussed in items in the database.

The manual process of assigning weights adds overhead for the indexer and requires a more complex data structure to store the weights.

2.2.2 Precoordination and Linkage :

Linkage: whether linkages are available between index terms for an item

Used to correlate related attributes associated with concepts discussed in an item

The process of creating term linkages at index creation time is called precoordination.

Postcoordination: coordinating terms at search time by ANDing index terms together, which finds only those items whose index contains all of the search terms

Factors that must be determined in the linkage process are the number of terms that can be related, any ordering constraints on the linked terms, and any additional descriptors associated with the index terms.
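The difference between the two approaches can be sketched in a few lines. Everything in this example is hypothetical: the item ids, the index terms, and the function names `postcoordinate` and `precoordinate` were invented to show why ANDing unlinked terms can return an item in which the terms belong to different concepts.

```python
# Hypothetical index: item id -> set of index terms (no linkage stored).
index_terms = {
    "item1": {"oil", "wells", "mexico", "refineries", "peru"},
    "item2": {"oil", "refineries", "mexico"},
}

# Hypothetical precoordinated index: terms linked at index creation time.
linked_terms = {
    "item1": [("oil", "wells", "mexico"), ("oil", "refineries", "peru")],
    "item2": [("oil", "refineries", "mexico")],
}

def postcoordinate(query):
    """AND the query terms at search time: every term must index the item."""
    return sorted(i for i, terms in index_terms.items() if query <= terms)

def precoordinate(query):
    """Match only items where all query terms occur inside one linkage."""
    return sorted(i for i, links in linked_terms.items()
                  if any(query <= set(link) for link in links))

query = {"oil", "refineries", "mexico"}
print(postcoordinate(query))  # ['item1', 'item2']
print(precoordinate(query))   # ['item2']
```

In this sketch item1 is a false hit for the postcoordinated search, because its refineries are linked to Peru rather than Mexico.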

Figure: Linkage of Index Terms


2.3 Automatic Indexing :

2.3.1 Overview

Automatic indexing is the capability to automatically determine the index terms to be assigned to an item.

Simplest case: total document indexing

Complex case: emulate a human indexer and determine a limited number of index terms for the major concepts in the item

Human indexing vs. automatic indexing

Advantage of human indexing: the ability to determine concept abstraction and judge the value of a concept

Disadvantages of human indexing: cost, processing time, and consistency

The processing time of an item by a human indexer varies significantly based upon the indexer's knowledge of the concepts being indexed, the exhaustivity and specificity guidelines, and the amount and accuracy of preprocessing via Automatic File Build. It usually takes at least five minutes per item.

Automatic indexing requires only a few seconds or less of computer time, based upon the size of the processor and the complexity of the algorithms used to generate the index.

If the indexing is performed automatically by an algorithm, the index term selection process is consistent.

Automatic indexing can be weighted or unweighted, and it can be done by term or by concept.

2.3.3.1 Un-weighted Automatic Indexing:

The existence of an index term in a document, and sometimes its word location(s), are kept as part of the searchable data structure.

No attempt is made to discriminate between the value of the index terms in representing concepts in the item.

It is not possible to tell the difference between the main topics of the item and a casual reference to a concept.

Queries against unweighted systems are based on Boolean logic, and the items in the resultant Hit file are considered equal in value.
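A minimal sketch of a Boolean query against an unweighted index follows. The index contents and the helper name `hits` are hypothetical. Because only term existence is stored, the result is a plain set of item ids with no ordering among them.

```python
# Hypothetical unweighted index: term -> set of item ids containing it.
index = {
    "indexing": {1, 2, 3},
    "manual": {1},
    "automatic": {2, 3},
    "stemming": {3, 4},
}

def hits(term):
    """Return the set of items indexed by the term (empty if unknown)."""
    return index.get(term, set())

# (indexing AND automatic) NOT stemming
hit_file = (hits("indexing") & hits("automatic")) - hits("stemming")
print(sorted(hit_file))  # [2]

# indexing OR stemming
print(sorted(hits("indexing") | hits("stemming")))  # [1, 2, 3, 4]
```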

2.3.3.2 Weighted Automatic Indexing :

An attempt is made to place a value on the index term's representation of its associated concept in the document.

An index term's weight is based on a function associated with the frequency of occurrence of the term in the item.

Luhn postulated that the significance of a concept in an item is directly proportional to the frequency of use of the word associated with the concept in the document.

Values for the index terms are normalized between zero and one.


The higher the weight, the more the term represents a concept discussed in the item.

The query process uses the weights, along with any weights assigned to terms in the query, to determine a rank value used in predicting the likelihood that an item satisfies the query.

Thresholds or a parameter specifying the maximum number of items to be returned are used to bound the number of items returned to a user.
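The sketch below shows one way these ideas could fit together. It is an illustration under stated assumptions, not the method of any particular system: weights are term frequencies divided by the highest frequency in the item, the rank value is the sum of item weight times query weight, and the names `term_weights` and `rank` are invented for the example.

```python
from collections import Counter

def term_weights(tokens):
    """Weight each term by its frequency, normalized into the range (0, 1]."""
    counts = Counter(tokens)
    max_count = max(counts.values())
    return {term: n / max_count for term, n in counts.items()}

def rank(items, query_weights, threshold=0.0, max_items=10):
    """Score items by the sum of (item weight * query weight) per term."""
    scores = {}
    for item_id, weights in items.items():
        score = sum(weights.get(t, 0.0) * qw for t, qw in query_weights.items())
        if score > threshold:  # the threshold bounds the result set
            scores[item_id] = score
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return ranked[:max_items]  # so does the maximum number of items

items = {
    "d1": term_weights(["oil", "oil", "oil", "mexico"]),
    "d2": term_weights(["oil", "peru", "peru"]),
}
for item_id, score in rank(items, {"oil": 1.0, "mexico": 0.5}):
    print(item_id, round(score, 3))
```

Here d1 ranks above d2 because "oil" is its most frequent term and it also mentions "mexico".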

2.3.3.3 Indexing by Term:

The terms of the original item are used as the basis of the index process.

Two major techniques: statistical and natural language

Statistical techniques

Calculation of weights uses statistical information such as the frequency of occurrence of words and their distributions in the searchable database.

Vector models and probabilistic models

Natural language processing

Processes items at the morphological, lexical, semantic, syntactic, and discourse levels

Each level uses information from the previous level to perform its additional analysis.

Events and event relationships

2.3.3.4 Indexing by Concept:

The basis for concept indexing is that there are many ways to express the same idea, and increased retrieval performance comes from using a single representation.

Indexing by term treats each of these occurrences as a different index and then uses thesauri or other query expansion techniques to expand a query to find the different ways the same thing has been represented.

Concept indexing determines a canonical set of concepts based on a test set of terms and uses them as a basis for indexing all items.

2.4 Information Extraction & Summarization:

There are two processes associated with information extraction:

Determination of facts to go into structured fields in a database

In this case, only a subset of the important facts in an item may be identified and extracted.


Extraction of text that can be used to summarize an item

In summarization, all of the major concepts in the item should be represented in the summary.

The process of extracting facts to go into indexes is called Automatic File Build. Its goal is to process incoming items and extract index terms that will go into a structured database.

This differs from indexing in that its objective is to extract specific types of information versus understanding all of the text of the document.

An Information Retrieval System's goal is to provide an in-depth representation of the total contents of an item.

An Information Extraction system only analyzes those portions of a document that potentially contain information relevant to the extraction criteria.

The objective of the data extraction is in most cases to update a structured database with additional facts. The updates may be from a controlled vocabulary or substrings from the item as defined by the extraction rules.

The term slot is used to define a particular category of information to be extracted. Slots are organized into templates or semantic frames.

Information extraction requires multiple levels of analysis of the text of an item. It must understand the words and their context (discourse analysis).

Recall refers to how much information was extracted from an item versus how much should have been extracted from the item. It shows the amount of correct and relevant data extracted versus the correct and relevant data in the item.

Precision refers to how much information was extracted accurately versus the total information extracted.


Additional metrics used are overgeneration and fallout.

Overgeneration measures the amount of irrelevant information that is extracted. This could be caused by templates filled on topics that are not intended to be extracted or slots that get filled with non-relevant data.

Fallout measures how much a system assigns incorrect slot fillers as the number of potential incorrect slot fillers increases.
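The extraction measures can be made concrete with a small calculation. The sketch below is a simplification under stated assumptions: extracted data and the answer key are modeled as sets of (slot, value) pairs, an extracted pair counts as overgenerated when its slot should not have been filled at all, and the function name and sample values are hypothetical. Fallout is left out because it also needs the number of potential incorrect slot fillers, which this toy setup does not model.

```python
def extraction_metrics(extracted, expected):
    """Compare extracted (slot, value) pairs against an answer key."""
    correct = extracted & expected
    recall = len(correct) / len(expected) if expected else 0.0
    precision = len(correct) / len(extracted) if extracted else 0.0
    # Overgeneration: share of extracted pairs whose slot is absent
    # from the answer key, i.e. data that should not have been extracted.
    expected_slots = {slot for slot, _ in expected}
    spurious = {pair for pair in extracted if pair[0] not in expected_slots}
    overgeneration = len(spurious) / len(extracted) if extracted else 0.0
    return recall, precision, overgeneration

expected = {("company", "CITGO"), ("country", "Mexico"), ("product", "oil")}
extracted = {("company", "CITGO"), ("country", "Peru"),
             ("product", "oil"), ("date", "1995")}

recall, precision, overgeneration = extraction_metrics(extracted, expected)
print(round(recall, 3), precision, overgeneration)  # 0.667 0.5 0.25
```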

The goal of document summarization is to extract a summary of an item, maintaining the most important ideas while significantly reducing the size.

Examples of summaries that are often part of an item are titles, tables of contents, and abstracts, with the abstract being the closest to a summary.

The abstract can be used to represent the item for search purposes or as a way for a user to determine the utility of an item without having to read the complete item.

It is not feasible to automatically generate a coherent narrative summary of an item with proper discourse, abstraction, and language usage.

Restricting the domain of the item can significantly improve the quality of the output.

The more restricted goal for much of the research is finding subsets of the item that can be extracted and concatenated (usually extracting at the sentence level) and that represent the most important concepts in the item.

There is no guarantee of readability as a narrative abstract, and it is seldom achieved.

Different algorithms produce different summaries. Just as different humans create different abstracts for the same item, the fact that automated techniques generate different summaries does not intrinsically imply major deficiencies in the summaries.

Most automated algorithms approach summarization by calculating a score for each sentence and then extracting the sentences with the highest scores.


Kupiec et al. are pursuing a statistical classification approach based upon a training set, reducing the heuristics by focusing on a weighted combination of criteria to produce an optimal scoring scheme (Kupiec-95).

They selected the following five feature sets as a basis for their algorithm:

Sentence Length Feature, which requires a sentence to be over five words in length

Fixed Phrase Feature, which looks for the existence of phrase cues (e.g., "in conclusion")

Paragraph Feature, which places emphasis on the first ten and last five paragraphs in an item and also on the location of the sentences within the paragraph

Thematic Word Feature, which uses word frequency

Uppercase Word Feature, which places emphasis on proper names and acronyms
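To make sentence scoring concrete, here is a deliberately simplified sketch. It is not the trained classifier of Kupiec et al.: each feature simply adds one point, the paragraph feature is omitted, and the cue list, the `top_words` cutoff, and the function names are all assumptions made for the illustration.

```python
import re
from collections import Counter

CUE_PHRASES = ("in conclusion", "in summary")  # hypothetical cue list

def score_sentences(sentences, top_words=3):
    """Score each sentence with one point per feature that fires."""
    words = re.findall(r"[a-z]+", " ".join(sentences).lower())
    longer = [w for w in words if len(w) > 3]  # crude filter for function words
    thematic = {w for w, _ in Counter(longer).most_common(top_words)}
    scores = []
    for sentence in sentences:
        tokens = sentence.split()
        lowered = sentence.lower()
        score = 0
        score += int(len(tokens) > 5)                              # sentence length
        score += int(any(cue in lowered for cue in CUE_PHRASES))   # fixed phrase
        score += int(any(w in thematic
                         for w in re.findall(r"[a-z]+", lowered)))  # thematic word
        score += int(any(t[0].isupper() for t in tokens[1:]))      # uppercase word
        scores.append(score)
    return scores

def summarize(sentences, k=2):
    """Extract the k highest-scoring sentences, kept in original order."""
    scores = score_sentences(sentences)
    best = sorted(range(len(sentences)), key=lambda i: (-scores[i], i))[:k]
    return [sentences[i] for i in sorted(best)]

text = [
    "Indexing maps items to terms.",
    "In conclusion, the INQUERY system uses indexing for every item it receives.",
    "Short one.",
]
print(score_sentences(text))  # [1, 4, 0]
```

A trained approach would replace the equal one-point weights with weights estimated from a set of items and their human-written summaries.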

DATA STRUCTURES:

Introduction to Data Structure in IR :

Stemming

Porter Stemming Algorithm

Dictionary Look-up Stemmers

Successor stemmers

Major Data Structure

Inverted File Structures

N-Gram Data Structures

PAT Data Structures

Signature File Structure

Hypertext Data Structures

Introduction to Data Structure


Two aspects of data structures from the IRS perspective:

The ability to represent concepts and their relationships

The support they provide for locating those concepts

Two major data structures:

Document manager: stores and manages the received items in their normalized form

Document search manager: contains the processing tokens and associated data to support search

The results of a search are references to the items, which are passed to the Document Manager for retrieval.

This unit deals with the data structures that support the search function.


Major Data Structures:

Before data is placed in the searchable data structure, a transformation called stemming is applied.

Conflation is the term used to refer to mapping multiple morphological variants to a single representation called the stem or root.

Stemming reduces tokens to the root form of words to recognize morphological variation.

For example, computer, computational, and computation are all reduced to the same token, compute.

Correct morphological analysis is language specific and can be complex.

Stemming blindly strips off known affixes (prefixes and suffixes) in an iterative fashion.

Stemming provides compression, with savings in storage and processing.

Stemming improves recall.

The stemming process has to categorize a word prior to making the decision to stem it.

Proper names and acronyms should not be stemmed, as they are not related to a common core concept.

Stemming can cause the loss of information needed by natural language processing (NLP).

Tense information is lost, although it is needed to determine whether a concept being indexed (e.g., economic support) occurred in the past or will occur in the future.


The Porter algorithm:

The Porter algorithm consists of a set of condition/action rules.

The conditions fall into three classes:

Conditions on the stem

Conditions on the suffix

Conditions on rules

Conditions on the stem :

1. The measure, denoted m, of a stem is based on its alternating vowel-consonant sequences:

$[C](VC)^{m}[V]$

where C is a sequence of consonants, V is a sequence of vowels, and square brackets mark optional parts.

| Measure | Example |
|---|---|
| m = 0 | TR, EE, TREE, Y, BY |
| m = 1 | TROUBLE, OATS, TREES, IVY |
| m = 2 | TROUBLES, PRIVATE, OATEN |

2. *<X>: the stem ends with a given letter X

3. *v*: the stem contains a vowel

4. *d: the stem ends in a double consonant

5. *o: the stem ends with a consonant-vowel-consonant sequence, where the final consonant is not w, x, or y
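The stem conditions can be checked mechanically. The sketch below computes the measure m and the *d and *o conditions. It relies on Porter's usual convention that "y" counts as a vowel when it follows a consonant, which the notes do not state explicitly, and the function names are chosen for this example.

```python
def is_consonant(word, i):
    """'y' is a consonant unless it is preceded by a consonant."""
    ch = word[i]
    if ch in "aeiou":
        return False
    if ch == "y":
        return i == 0 or not is_consonant(word, i - 1)
    return True

def measure(stem):
    """Return m in [C](VC)^m[V]: the number of vowel-to-consonant transitions."""
    stem = stem.lower()
    m = 0
    prev_vowel = False
    for i in range(len(stem)):
        vowel = not is_consonant(stem, i)
        if prev_vowel and not vowel:
            m += 1
        prev_vowel = vowel
    return m

def ends_double_consonant(stem):
    """Condition *d: the stem ends in a double consonant."""
    stem = stem.lower()
    return (len(stem) >= 2 and stem[-1] == stem[-2]
            and is_consonant(stem, len(stem) - 1))

def ends_cvc(stem):
    """Condition *o: ends consonant-vowel-consonant, last letter not w, x, y."""
    stem = stem.lower()
    n = len(stem)
    return (n >= 3 and is_consonant(stem, n - 3)
            and not is_consonant(stem, n - 2)
            and is_consonant(stem, n - 1) and stem[-1] not in "wxy")

for word in ["TREE", "BY", "TROUBLE", "IVY", "PRIVATE", "OATEN"]:
    print(word, measure(word))
```

Running it on the example words reproduces the measures listed in the table above.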

Suffix conditions take the form:

(current_suffix == pattern)

Conditions on rules:

The rules are divided into steps. The rules in a step are examined in sequence, and only one rule from a step can apply.

{
    step1a(word);
    step1b(stem);
    if (the second or third rule of step 1b was used)
        step1b1(stem);
    step1c(stem);
    step2(stem);
    step3(stem);
    step4(stem);
    step5a(stem);
    step5b(stem);
}

Table / Dictionary Look up:


Store a table of all index terms and their stems.

The original term or stemmed version of the term is looked up in a dictionary and replaced by the stem that best represents it.

Implemented in the INQUERY and RetrievalWare systems

Kstem, the table look-up stemming algorithm implemented in INQUERY, uses the following six data files:

Dictionary of words (lexicon)

Supplemental list of words for the dictionary

Exceptions list for those words that should retain an "e" at the end (e.g., "suites" to "suite" but "suited" to "suit")

Direct_Conflation: allows definition of direct conflation via word pairs that override the stemming algorithm

Country_Nationality: conflations between nationalities and countries ("British" maps to "Britain")

Proper Nouns: a list of proper nouns that should not be stemmed
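A table look-up stemmer can be sketched as a sequence of dictionary checks. This is only an illustration of the idea, not the actual logic of INQUERY: the table contents, the order in which the tables are consulted, and the function name `lookup_stem` are all assumptions.

```python
# Hypothetical miniature versions of the look-up data files.
STEM_TABLE = {"computers": "computer", "computing": "compute",
              "suites": "suite", "suited": "suit"}
DIRECT_CONFLATION = {"mice": "mouse"}         # pairs that override the table
COUNTRY_NATIONALITY = {"british": "britain"}  # nationality -> country
PROPER_NOUNS = {"porter"}                     # never stemmed

def lookup_stem(word):
    """Return the stem stored for a word, or the lowercased word if unknown."""
    key = word.lower()
    if key in PROPER_NOUNS:
        return word  # proper nouns are returned unchanged
    for table in (DIRECT_CONFLATION, COUNTRY_NATIONALITY, STEM_TABLE):
        if key in table:
            return table[key]
    return key

print(lookup_stem("suites"), lookup_stem("suited"), lookup_stem("British"))
# suite suit britain
```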

Successor Stemmer :

Successor stemmers are based on the length of prefixes that optimally stem expansions of additional suffixes.

The algorithm investigates word and morpheme boundaries based on the distribution of phonemes that distinguish one word from another.

The process determines the successor variety for a word, uses this information to divide the word into segments, and selects one of the segments as the stem.

The successor variety of a segment of a word in a set of words is the number of distinct letters that occupy the segment length plus one character.

Example: the successor variety for the first three letters of a five-letter word is the number of words that have the same first three letters but a different fourth letter, plus one for the current word.

The successor variety of any prefix of a word is the number of children associated with the node in the symbol tree representing that prefix.


Successor variety for the first letter b is three. The successor variety for the prefix ba is two.
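Successor variety is easy to compute directly from a word set. In the sketch below the word list is hypothetical and was chosen so that it reproduces the two counts quoted above. The function name is also an assumption.

```python
def successor_variety(prefix, words):
    """Count the distinct letters that follow `prefix` in the word set."""
    return len({w[len(prefix)] for w in words
                if w.startswith(prefix) and len(w) > len(prefix)})

# Hypothetical word set chosen to match the counts quoted above.
words = ["bag", "barn", "bring", "both", "box", "bottle"]

print(successor_variety("b", words))   # 3  (a, r, o)
print(successor_variety("ba", words))  # 2  (g, r)
```

Each distinct next letter corresponds to one child of the prefix's node in the symbol tree.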

Affix Removal Stemmers:

Affix removal algorithms remove suffixes and/or prefixes from terms, leaving a stem. A simple example is the following rule set (Harman 1991):

If a word ends in "ies" but not "eies" or "aies", then "ies" -> "y"

If a word ends in "es" but not "aes", "ees", or "oes", then "es" -> "e"

If a word ends in "s" but not "us" or "ss", then "s" -> NULL
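These three rules translate almost directly into code. The sketch assumes the rules are tried in the order given and that only the first applicable rule is used. The function name `s_stem` is chosen for this example.

```python
def s_stem(word):
    """Apply the three plural rules in order; only the first match is used."""
    w = word.lower()
    if w.endswith("ies") and not w.endswith(("eies", "aies")):
        return w[:-3] + "y"
    if w.endswith("es") and not w.endswith(("aes", "ees", "oes")):
        return w[:-2] + "e"
    if w.endswith("s") and not w.endswith(("us", "ss")):
        return w[:-1]
    return w

for word in ["ponies", "plates", "trees", "cats", "glass", "corpus"]:
    print(word, "->", s_stem(word))
```

Note that "trees" skips the second rule because of the "ees" exception and is handled by the third rule instead.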


Related Data Structures for PT Searchable Files:


Inverted file system

Minimizes secondary storage access when multiple search terms are applied across the total database

N-gram

Breaks processing tokens into smaller string units and uses the token fragments for search

Improves efficiency and conceptual manipulation over full word inversion

PAT trees and arrays

View the text of an item as a single long stream versus a juxtaposition of words

Signature file

Fast elimination of non-relevant items, reducing the searchable items to a manageable subset

Hypertext

Manually or automatically creates embedded links within one item to a related item
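As a small illustration of the n-gram idea from the list above, the sketch below breaks a processing token into overlapping character fragments. The choice of n = 3 and the function name are assumptions made for the example.

```python
def ngrams(token, n=3):
    """Return the overlapping character n-grams of a processing token."""
    if len(token) < n:
        return [token]  # tokens shorter than n are kept whole
    return [token[i:i + n] for i in range(len(token) - n + 1)]

print(ngrams("retrieval"))
# ['ret', 'etr', 'tri', 'rie', 'iev', 'eva', 'val']
```

A search on a fragment such as "iev" can then match the token without a full-word index entry.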


Inverted File Structure:


Commonly used in DBMS and IR

For each word, a list of the documents in which the word is found is stored.

Composed of three basic files:

Document file

Inversion lists: contain the document identifiers

Dictionary: lists all the unique words and other information used in query optimization (e.g., length of inversion lists)

The inversion list contains the document identifier for each document in which the word is found.

To support proximity, contiguous word phrases, and term weighting, all occurrences of a word are stored in the inversion list along with the word positions.

For systems that support ranking, the list is reorganized into rank order.
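The structure described above can be sketched with ordinary dictionaries. This is a minimal in-memory illustration, not a disk-based design: the document texts, whitespace tokenization, and the function names `build_inverted_file` and `phrase_search` are assumptions made for the example.

```python
from collections import defaultdict

def build_inverted_file(documents):
    """Build inversion lists mapping word -> {doc_id: [word positions]}."""
    inversion = defaultdict(dict)
    for doc_id, text in documents.items():
        for position, word in enumerate(text.lower().split()):
            inversion[word].setdefault(doc_id, []).append(position)
    # Dictionary file: each unique word with the length of its inversion list.
    dictionary = {word: len(postings) for word, postings in inversion.items()}
    return dict(inversion), dictionary

def phrase_search(inversion, first, second):
    """Find documents where `second` directly follows `first`."""
    hits = []
    for doc_id, positions in inversion.get(first, {}).items():
        following = set(inversion.get(second, {}).get(doc_id, []))
        if any(p + 1 in following for p in positions):
            hits.append(doc_id)
    return sorted(hits)

documents = {
    1: "inverted file structure",
    2: "file structure for inverted lists",
    3: "signature file",
}
inversion, dictionary = build_inverted_file(documents)
print(inversion["file"])                             # {1: [1], 2: [0], 3: [1]}
print(dictionary["file"])                            # 3
print(phrase_search(inversion, "inverted", "file"))  # [1]
```

Storing word positions is what makes the contiguous phrase check possible. With document identifiers alone, documents 1 and 2 would both match "inverted" and "file".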

Complete data structures material

www.specworld.in

13

www.jntuworld.com

www.smartzworld.com

Potrebbero piacerti anche

- Bulk Uploading SampleDocumento342 pagineBulk Uploading SampleHuzaifa Bin SaadNessuna valutazione finora

- C++ NotesDocumento129 pagineC++ NotesNikhil Kant Saxena100% (4)

- IRS Unit-1Documento14 pagineIRS Unit-1Ratna Raju Ayalapogu100% (4)

- Unit-Ii: Cataloging and IndexingDocumento13 pagineUnit-Ii: Cataloging and Indexing7killers4u100% (3)

- Information Retrieval SystemsDocumento102 pagineInformation Retrieval SystemsSiddarthModi100% (1)

- IRS Unit-2Documento13 pagineIRS Unit-2Nagendra MarisettiNessuna valutazione finora

- UNIT 2 IRS UpDocumento42 pagineUNIT 2 IRS Upnikhilsinha789Nessuna valutazione finora

- IRS Automatic Indexing UNIT-2Documento18 pagineIRS Automatic Indexing UNIT-2suryachandra podugu50% (2)

- IRS III Year UNIT-3 Part 1Documento18 pagineIRS III Year UNIT-3 Part 1Banala ramyasree50% (2)

- IRS Unit-3Documento28 pagineIRS Unit-3SiddarthModi100% (2)

- IRS Unit-4Documento13 pagineIRS Unit-4Anonymous iCFJ73OMpD50% (4)

- IRS Questions QbankDocumento2 pagineIRS Questions Qbanktest1qaz100% (1)

- Clustering and Search Techniques in Information Retrieval SystemsDocumento39 pagineClustering and Search Techniques in Information Retrieval SystemsKarumuri Sri Rama Murthy67% (3)

- Unit-4 Irs Notes Part 2Documento5 pagineUnit-4 Irs Notes Part 2Banala ramyasreeNessuna valutazione finora

- Introduction To Clustering Thesaurus Generation Item ClusteringDocumento15 pagineIntroduction To Clustering Thesaurus Generation Item Clusteringswathy reghuNessuna valutazione finora

- IRS Study MaterialDocumento87 pagineIRS Study Materialrishisiliveri95100% (1)

- Unit 5Documento19 pagineUnit 55P1 DeekshitNessuna valutazione finora

- Subject:Machine Learning Unit-5 Analytical Learning Topic:Remarks On Explanation Based LearningDocumento21 pagineSubject:Machine Learning Unit-5 Analytical Learning Topic:Remarks On Explanation Based Learningnani Ramesh100% (1)

- Irs Unit1Documento15 pagineIrs Unit1Kadasi AbishekNessuna valutazione finora

- Information Retrieval SytemDocumento18 pagineInformation Retrieval SytemS. Kuku DozoNessuna valutazione finora

- R7411205-Information Retrieval SystemsDocumento4 pagineR7411205-Information Retrieval SystemssivabharathamurthyNessuna valutazione finora

- cp5293 Big Data Analytics Unit 5 PDFDocumento28 paginecp5293 Big Data Analytics Unit 5 PDFGnanendra KotikamNessuna valutazione finora

- Cs6007 Information Retrieval Question BankDocumento45 pagineCs6007 Information Retrieval Question BankNirmalkumar R100% (1)

- Elements of Transport ProtocolsDocumento19 pagineElements of Transport ProtocolsSasi KumarNessuna valutazione finora

- Indexing: 1. Static and Dynamic Inverted IndexDocumento55 pagineIndexing: 1. Static and Dynamic Inverted IndexVaibhav Garg100% (1)

- Viva Questions For Python LabDocumento9 pagineViva Questions For Python Labsweethaa harinishriNessuna valutazione finora

- DBMS - R18 UNIT 5 NotesDocumento23 pagineDBMS - R18 UNIT 5 NotesAbhinav Boppa100% (1)

- IRS Unit-2Documento37 pagineIRS Unit-2Venkatesh JNessuna valutazione finora

- Context Based Web Indexing For Semantic Web: Anchal Jain Nidhi TyagiDocumento5 pagineContext Based Web Indexing For Semantic Web: Anchal Jain Nidhi TyagiInternational Organization of Scientific Research (IOSR)Nessuna valutazione finora

- Unit 2Documento40 pagineUnit 2Sree DhathriNessuna valutazione finora

- Contextual Information Search Based On Domain Using Query ExpansionDocumento4 pagineContextual Information Search Based On Domain Using Query ExpansionInternational Journal of Application or Innovation in Engineering & ManagementNessuna valutazione finora

- Irs Unit Ii Part 1Documento16 pagineIrs Unit Ii Part 1Banala ramyasreeNessuna valutazione finora

- Multi-Document Extractive Summarization For News Page 1 of 59Documento59 pagineMulti-Document Extractive Summarization For News Page 1 of 59athar1988Nessuna valutazione finora

- A Comparison of Open Source Search EngineDocumento46 pagineA Comparison of Open Source Search EngineZamah SyariNessuna valutazione finora

- A Mixed Method Approach For Efficient Component Retrieval From A Component RepositoryDocumento4 pagineA Mixed Method Approach For Efficient Component Retrieval From A Component RepositoryLiaNaulaAjjahNessuna valutazione finora

- Information Retrieval System and The Pagerank AlgorithmDocumento37 pagineInformation Retrieval System and The Pagerank AlgorithmMani Deepak ChoudhryNessuna valutazione finora

- Chapter - 6 Part 1Documento21 pagineChapter - 6 Part 1CLAsH with DxNessuna valutazione finora

- SNS College of Technology: Object Oriented System DesignDocumento22 pagineSNS College of Technology: Object Oriented System DesignJennifer CarpenterNessuna valutazione finora

- Importance of Similarity Measures in Effective Web Information RetrievalDocumento5 pagineImportance of Similarity Measures in Effective Web Information RetrievalEditor IJRITCCNessuna valutazione finora

- Document Ranking Using Customizes Vector MethodDocumento6 pagineDocument Ranking Using Customizes Vector MethodEditor IJTSRDNessuna valutazione finora

- Metadata: Towards Machine-Enabled IntelligenceDocumento10 pagineMetadata: Towards Machine-Enabled IntelligenceijwestNessuna valutazione finora

- Metadata: Towards Machine-Enabled IntelligenceDocumento10 pagineMetadata: Towards Machine-Enabled IntelligenceijwestNessuna valutazione finora

- Information Extraction Using Incremental ApproachDocumento3 pagineInformation Extraction Using Incremental ApproachseventhsensegroupNessuna valutazione finora

- Performance Comparison Between Naive Bayes and K - Nearest Neighbor Algorithm For The Classification of Indonesian Language ArticlesDocumento6 paginePerformance Comparison Between Naive Bayes and K - Nearest Neighbor Algorithm For The Classification of Indonesian Language ArticlesIAES IJAINessuna valutazione finora

- Unit 11Documento55 pagineUnit 11Eldhose KGNessuna valutazione finora

- Learning Context For Text CategorizationDocumento9 pagineLearning Context For Text CategorizationLewis TorresNessuna valutazione finora

- Smart Environment For Component ReuseDocumento5 pagineSmart Environment For Component Reuseeditor_ijarcsseNessuna valutazione finora

- A Survey On Text Categorization: International Journal of Computer Trends and Technology-volume3Issue1 - 2012Documento7 pagineA Survey On Text Categorization: International Journal of Computer Trends and Technology-volume3Issue1 - 2012surendiran123Nessuna valutazione finora

- Construction of Ontology-Based Software Repositories by Text MiningDocumento8 pagineConstruction of Ontology-Based Software Repositories by Text MiningHenri RameshNessuna valutazione finora

- A Statistical Approach To Perform Web Based Summarization: Kirti Bhatia, Dr. Rajendar ChhillarDocumento3 pagineA Statistical Approach To Perform Web Based Summarization: Kirti Bhatia, Dr. Rajendar ChhillarInternational Organization of Scientific Research (IOSR)Nessuna valutazione finora

- Information Retrieval System Assignment-1Documento10 pagineInformation Retrieval System Assignment-1suryachandra poduguNessuna valutazione finora

- An Approach For Design Search Engine Architecture For Document SummarizationDocumento6 pagineAn Approach For Design Search Engine Architecture For Document SummarizationEditor IJRITCCNessuna valutazione finora

- SRS Documentation For ClusteringDocumento18 pagineSRS Documentation For ClusteringMujtaba IbrarNessuna valutazione finora

- Effective Classification of TextDocumento6 pagineEffective Classification of TextseventhsensegroupNessuna valutazione finora

- Wang 2007Documento6 pagineWang 2007amine332Nessuna valutazione finora

- Unit-I: Introduction To Information Retrieval SystemsDocumento14 pagineUnit-I: Introduction To Information Retrieval Systems7killers4u100% (1)

- Chapter 3Documento8 pagineChapter 3Bala SudhakarNessuna valutazione finora

- Automatic Image Annotation: Enhancing Visual Understanding through Automated TaggingDa EverandAutomatic Image Annotation: Enhancing Visual Understanding through Automated TaggingNessuna valutazione finora

- A Survey - Ontology Based Information Retrieval in Semantic WebDocumento8 pagineA Survey - Ontology Based Information Retrieval in Semantic WebMayank SinghNessuna valutazione finora

- 2 Search EnginesDocumento41 pagine2 Search EnginesWendy Joy CuyuganNessuna valutazione finora

- 4.an EfficientDocumento10 pagine4.an Efficientiaset123Nessuna valutazione finora

- A Tag - Tree For Retrieval From Multiple Domains of A Publication SystemDocumento6 pagineA Tag - Tree For Retrieval From Multiple Domains of A Publication SystemInternational Journal of Application or Innovation in Engineering & ManagementNessuna valutazione finora

- NotesDocumento116 pagineNotesSiddarthModiNessuna valutazione finora

- Virtual Memory Can Slow Down PerformanceDocumento5 pagineVirtual Memory Can Slow Down PerformanceSiddarthModiNessuna valutazione finora

- Who Invented C Language?: The Compilers, JVMS, Kernals Etc. Are Written in C Language and Most of Languages Follows CDocumento7 pagineWho Invented C Language?: The Compilers, JVMS, Kernals Etc. Are Written in C Language and Most of Languages Follows CSiddarthModiNessuna valutazione finora

- Guidelines For Submitting The AbstractDocumento2 pagineGuidelines For Submitting The AbstractSiddarthModiNessuna valutazione finora

- IRS Unit-1Documento14 pagineIRS Unit-1SiddarthModi50% (2)

- IRS Unit-3Documento28 pagineIRS Unit-3SiddarthModi100% (2)

- AptitudeasdDocumento59 pagineAptitudeasdSiddarthModi0% (1)

- STM ImpDocumento4 pagineSTM ImpSiddarthModiNessuna valutazione finora

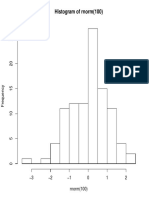

- Histogram of RnormDocumento1 paginaHistogram of RnormSiddarthModiNessuna valutazione finora

- MEFADocumento2 pagineMEFASiddarthModiNessuna valutazione finora

- 1.install Virtualbox 2.put Ubuntu in It Using Iso 3.install R UsingDocumento6 pagine1.install Virtualbox 2.put Ubuntu in It Using Iso 3.install R UsingSiddarthModiNessuna valutazione finora

- XhasdDocumento1 paginaXhasdSiddarthModiNessuna valutazione finora

- CssDocumento15 pagineCssSiddarthModiNessuna valutazione finora

- Teaching and Assessment of Literature Studies Alba, Shane O. ED-31Documento11 pagineTeaching and Assessment of Literature Studies Alba, Shane O. ED-31shara santosNessuna valutazione finora

- Survival Creole: Bryant C. Freeman, PH.DDocumento36 pagineSurvival Creole: Bryant C. Freeman, PH.DEnrique Miguel Gonzalez Collado100% (1)

- DY2 Test Diagnostic-1Documento4 pagineDY2 Test Diagnostic-1Oier Garcia BarriosNessuna valutazione finora

- Action Plan in APDocumento4 pagineAction Plan in APZybyl ZybylNessuna valutazione finora

- Love's Labour's LostDocumento248 pagineLove's Labour's LostteearrNessuna valutazione finora

- Solutions 7 - Giáo ÁnDocumento109 pagineSolutions 7 - Giáo Án29101996 ThanhthaoNessuna valutazione finora

- Assignment 06 Introduction To Computing Lab Report TemplateDocumento10 pagineAssignment 06 Introduction To Computing Lab Report Template1224 OZEAR KHANNessuna valutazione finora

- Semantic Roles 2Documento11 pagineSemantic Roles 2wakefulness100% (1)

- Verbs?: Asu+A Minä Asu+N Sinä Asu+T Hän Asu+U Me Asu+Mme Te Asu+Tte He Asu+VatDocumento1 paginaVerbs?: Asu+A Minä Asu+N Sinä Asu+T Hän Asu+U Me Asu+Mme Te Asu+Tte He Asu+VatLin SawNessuna valutazione finora

- Precalculus Complete FactorizationDocumento60 paginePrecalculus Complete FactorizationSandovalNessuna valutazione finora

- Celebrating The Body A Study of Kamala DasDocumento27 pagineCelebrating The Body A Study of Kamala Dasfaisal jahangeerNessuna valutazione finora

- Mood Classification of Hindi Songs Based On Lyrics: December 2015Documento8 pagineMood Classification of Hindi Songs Based On Lyrics: December 2015lalit kashyapNessuna valutazione finora

- Symmetric Cryptography: Classical Cipher: SubstitutionDocumento56 pagineSymmetric Cryptography: Classical Cipher: SubstitutionShorya KumarNessuna valutazione finora

- Pirkei AvothDocumento266 paginePirkei AvothabumanuNessuna valutazione finora

- ER Diagrams (Concluded), Schema Refinement, and NormalizationDocumento39 pagineER Diagrams (Concluded), Schema Refinement, and NormalizationrajendragNessuna valutazione finora

- LogDocumento223 pagineLogKejar TayangNessuna valutazione finora

- ST93CS46 ST93CS47: 1K (64 X 16) Serial Microwire EepromDocumento17 pagineST93CS46 ST93CS47: 1K (64 X 16) Serial Microwire EepromAlexsander MeloNessuna valutazione finora

- Design of Steel Structure (Chapter 2) by DR R BaskarDocumento57 pagineDesign of Steel Structure (Chapter 2) by DR R Baskarelect aksNessuna valutazione finora

- Anderson 1Documento65 pagineAnderson 1Calibán CatrileoNessuna valutazione finora

- Errores HitachiDocumento4 pagineErrores HitachiToni Pérez Lago50% (2)

- ENGLISH 10 - Q1 - Mod2 - Writing A News ReportDocumento13 pagineENGLISH 10 - Q1 - Mod2 - Writing A News ReportFrancesca Shamelle Belicano RagasaNessuna valutazione finora

- Inama Ku Mirire Nibyo Kuryaupdated FinalDocumento444 pagineInama Ku Mirire Nibyo Kuryaupdated Finalbarutwanayo olivier100% (1)

- DS FT2232HDocumento67 pagineDS FT2232HEmiliano KleinNessuna valutazione finora

- Tasksheet 1Documento3 pagineTasksheet 1joselito VillonaNessuna valutazione finora

- Deswik - Suite 2023.1 Release NotesDocumento246 pagineDeswik - Suite 2023.1 Release NotesCHRISTIANNessuna valutazione finora

- Exadata Storage Expansion x8 DsDocumento16 pagineExadata Storage Expansion x8 DskisituNessuna valutazione finora

- Step2 HackingDocumento17 pagineStep2 Hackingمولن راجفNessuna valutazione finora

- Week 1-MidsDocumento56 pagineWeek 1-MidsFizza ShahzadiNessuna valutazione finora