Documenti di Didattica

Documenti di Professioni

Documenti di Cultura

06 Data Mining-Data Preprocessing - Discrisation

Caricato da

Raj EndranTitolo originale

Copyright

Formati disponibili

Condividi questo documento

Condividi o incorpora il documento

Hai trovato utile questo documento?

Questo contenuto è inappropriato?

Segnala questo documentoCopyright:

Formati disponibili

06 Data Mining-Data Preprocessing - Discrisation

Caricato da

Raj EndranCopyright:

Formati disponibili

3.

5 Data Transformation and Data Discretization

113

data cleaning and was addressed in Section 3.2.2. Section 3.2.3 on the data cleaning

process also discussed ETL tools, where users specify transformations to correct data

inconsistencies. Attribute construction and aggregation were discussed in Section 3.4

on data reduction. In this section, we therefore concentrate on the latter three strategies.

Discretization techniques can be categorized based on how the discretization is performed, such as whether it uses class information or which direction it proceeds (i.e.,

top-down vs. bottom-up). If the discretization process uses class information, then we

say it is supervised discretization. Otherwise, it is unsupervised. If the process starts by first

finding one or a few points (called split points or cut points) to split the entire attribute

range, and then repeats this recursively on the resulting intervals, it is called top-down

discretization or splitting. This contrasts with bottom-up discretization or merging, which

starts by considering all of the continuous values as potential split-points, removes some

by merging neighborhood values to form intervals, and then recursively applies this

process to the resulting intervals.

Data discretization and concept hierarchy generation are also forms of data reduction. The raw data are replaced by a smaller number of interval or concept labels. This

simplifies the original data and makes the mining more efficient. The resulting patterns

mined are typically easier to understand. Concept hierarchies are also useful for mining

at multiple abstraction levels.

The rest of this section is organized as follows. First, normalization techniques are

presented in Section 3.5.2. We then describe several techniques for data discretization,

each of which can be used to generate concept hierarchies for numeric attributes. The

techniques include binning (Section 3.5.3) and histogram analysis (Section 3.5.4), as

well as cluster analysis, decision tree analysis, and correlation analysis (Section 3.5.5).

Finally, Section 3.5.6 describes the automatic generation of concept hierarchies for

nominal data.

3.5.2

Data Transformation by Normalization

The measurement unit used can affect the data analysis. For example, changing measurement units from meters to inches for height, or from kilograms to pounds for weight,

may lead to very different results. In general, expressing an attribute in smaller units will

lead to a larger range for that attribute, and thus tend to give such an attribute greater

effect or weight. To help avoid dependence on the choice of measurement units, the

data should be normalized or standardized. This involves transforming the data to fall

within a smaller or common range such as [1, 1] or [0.0, 1.0]. (The terms standardize

and normalize are used interchangeably in data preprocessing, although in statistics, the

latter term also has other connotations.)

Normalizing the data attempts to give all attributes an equal weight. Normalization is particularly useful for classification algorithms involving neural networks or

distance measurements such as nearest-neighbor classification and clustering. If using

the neural network backpropagation algorithm for classification mining (Chapter 9),

normalizing the input values for each attribute measured in the training tuples will help

speed up the learning phase. For distance-based methods, normalization helps prevent

114

Chapter 3 Data Preprocessing

attributes with initially large ranges (e.g., income) from outweighing attributes with

initially smaller ranges (e.g., binary attributes). It is also useful when given no prior

knowledge of the data.

There are many methods for data normalization. We study min-max normalization,

z-score normalization, and normalization by decimal scaling. For our discussion, let A be

a numeric attribute with n observed values, v1 , v2 , . . . , vn .

Min-max normalization performs a linear transformation on the original data. Suppose that minA and maxA are the minimum and maximum values of an attribute, A.

Min-max normalization maps a value, vi , of A to vi0 in the range [new minA , new maxA ]

by computing

vi0 =

vi minA

(new maxA new minA ) + new minA .

maxA minA

(3.8)

Min-max normalization preserves the relationships among the original data values. It

will encounter an out-of-bounds error if a future input case for normalization falls

outside of the original data range for A.

Example 3.4 Min-max normalization. Suppose that the minimum and maximum values for the

attribute income are $12,000 and $98,000, respectively. We would like to map income

to the range [0.0, 1.0]. By min-max normalization, a value of $73,600 for income is

73,600 12,000

transformed to 98,000

12,000 (1.0 0) + 0 = 0.716.

In z-score normalization (or zero-mean normalization), the values for an attribute,

A, are normalized based on the mean (i.e., average) and standard deviation of A. A value,

vi , of A is normalized to vi0 by computing

vi0 =

vi A

,

A

(3.9)

where A and A are the mean and standard deviation, respectively, of attribute A. The

mean and standard deviation were discussed in Section 2.2, where A = n1 (v1 + v2 + +

vn ) and A is computed as the square root of the variance of A (see Eq. (2.6)). This

method of normalization is useful when the actual minimum and maximum of attribute

A are unknown, or when there are outliers that dominate the min-max normalization.

Example 3.5 z-score normalization. Suppose that the mean and standard deviation of the values for

the attribute income are $54,000 and $16,000, respectively. With z-score normalization,

54,000

a value of $73,600 for income is transformed to 73,600

= 1.225.

16,000

A variation of this z-score normalization replaces the standard deviation of Eq. (3.9)

by the mean absolute deviation of A. The mean absolute deviation of A, denoted sA , is

1

+ |v2 A|

+ + |vn A|).

sA = (|v1 A|

n

(3.10)

3.5 Data Transformation and Data Discretization

115

Thus, z-score normalization using the mean absolute deviation is

vi0 =

vi A

.

sA

(3.11)

The mean absolute deviation, sA , is more robust to outliers than the standard deviation,

A . When computing the mean absolute deviation, the deviations from the mean (i.e.,

|xi x |) are not squared; hence, the effect of outliers is somewhat reduced.

Normalization by decimal scaling normalizes by moving the decimal point of values

of attribute A. The number of decimal points moved depends on the maximum absolute

value of A. A value, vi , of A is normalized to vi0 by computing

vi

(3.12)

vi0 = j ,

10

where j is the smallest integer such that max(|vi0 |) < 1.

Example 3.6 Decimal scaling. Suppose that the recorded values of A range from 986 to 917. The

maximum absolute value of A is 986. To normalize by decimal scaling, we therefore

divide each value by 1000 (i.e., j = 3) so that 986 normalizes to 0.986 and 917

normalizes to 0.917.

Note that normalization can change the original data quite a bit, especially when

using z-score normalization or decimal scaling. It is also necessary to save the normalization parameters (e.g., the mean and standard deviation if using z-score normalization)

so that future data can be normalized in a uniform manner.

3.5.3

Discretization by Binning

Binning is a top-down splitting technique based on a specified number of bins.

Section 3.2.2 discussed binning methods for data smoothing. These methods are also

used as discretization methods for data reduction and concept hierarchy generation. For

example, attribute values can be discretized by applying equal-width or equal-frequency

binning, and then replacing each bin value by the bin mean or median, as in smoothing

by bin means or smoothing by bin medians, respectively. These techniques can be applied

recursively to the resulting partitions to generate concept hierarchies.

Binning does not use class information and is therefore an unsupervised discretization technique. It is sensitive to the user-specified number of bins, as well as the presence

of outliers.

3.5.4

Discretization by Histogram Analysis

Like binning, histogram analysis is an unsupervised discretization technique because it

does not use class information. Histograms were introduced in Section 2.2.3. A histogram partitions the values of an attribute, A, into disjoint ranges called buckets

or bins.

116

Chapter 3 Data Preprocessing

Various partitioning rules can be used to define histograms (Section 3.4.6). In an

equal-width histogram, for example, the values are partitioned into equal-size partitions

or ranges (e.g., earlier in Figure 3.8 for price, where each bucket has a width of $10).

With an equal-frequency histogram, the values are partitioned so that, ideally, each partition contains the same number of data tuples. The histogram analysis algorithm can be

applied recursively to each partition in order to automatically generate a multilevel concept hierarchy, with the procedure terminating once a prespecified number of concept

levels has been reached. A minimum interval size can also be used per level to control the

recursive procedure. This specifies the minimum width of a partition, or the minimum

number of values for each partition at each level. Histograms can also be partitioned

based on cluster analysis of the data distribution, as described next.

3.5.5

Discretization by Cluster, Decision Tree,

and Correlation Analyses

Clustering, decision tree analysis, and correlation analysis can be used for data discretization. We briefly study each of these approaches.

Cluster analysis is a popular data discretization method. A clustering algorithm can

be applied to discretize a numeric attribute, A, by partitioning the values of A into clusters or groups. Clustering takes the distribution of A into consideration, as well as the

closeness of data points, and therefore is able to produce high-quality discretization

results.

Clustering can be used to generate a concept hierarchy for A by following either a

top-down splitting strategy or a bottom-up merging strategy, where each cluster forms

a node of the concept hierarchy. In the former, each initial cluster or partition may

be further decomposed into several subclusters, forming a lower level of the hierarchy. In the latter, clusters are formed by repeatedly grouping neighboring clusters in

order to form higher-level concepts. Clustering methods for data mining are studied in

Chapters 10 and 11.

Techniques to generate decision trees for classification (Chapter 8) can be applied to

discretization. Such techniques employ a top-down splitting approach. Unlike the other

methods mentioned so far, decision tree approaches to discretization are supervised,

that is, they make use of class label information. For example, we may have a data set of

patient symptoms (the attributes) where each patient has an associated diagnosis class

label. Class distribution information is used in the calculation and determination of

split-points (data values for partitioning an attribute range). Intuitively, the main idea

is to select split-points so that a given resulting partition contains as many tuples of the

same class as possible. Entropy is the most commonly used measure for this purpose. To

discretize a numeric attribute, A, the method selects the value of A that has the minimum

entropy as a split-point, and recursively partitions the resulting intervals to arrive at a

hierarchical discretization. Such discretization forms a concept hierarchy for A.

Because decision treebased discretization uses class information, it is more likely

that the interval boundaries (split-points) are defined to occur in places that may help

improve classification accuracy. Decision trees and the entropy measure are described in

greater detail in Section 8.2.2.

3.5 Data Transformation and Data Discretization

117

Measures of correlation can be used for discretization. ChiMerge is a 2 -based

discretization method. The discretization methods that we have studied up to this

point have all employed a top-down, splitting strategy. This contrasts with ChiMerge,

which employs a bottom-up approach by finding the best neighboring intervals and

then merging them to form larger intervals, recursively. As with decision tree analysis,

ChiMerge is supervised in that it uses class information. The basic notion is that for

accurate discretization, the relative class frequencies should be fairly consistent within

an interval. Therefore, if two adjacent intervals have a very similar distribution of classes,

then the intervals can be merged. Otherwise, they should remain separate.

ChiMerge proceeds as follows. Initially, each distinct value of a numeric attribute A is

considered to be one interval. 2 tests are performed for every pair of adjacent intervals.

Adjacent intervals with the least 2 values are merged together, because low 2 values

for a pair indicate similar class distributions. This merging process proceeds recursively

until a predefined stopping criterion is met.

3.5.6

Concept Hierarchy Generation for Nominal Data

We now look at data transformation for nominal data. In particular, we study concept

hierarchy generation for nominal attributes. Nominal attributes have a finite (but possibly large) number of distinct values, with no ordering among the values. Examples

include geographic location, job category, and item type.

Manual definition of concept hierarchies can be a tedious and time-consuming task

for a user or a domain expert. Fortunately, many hierarchies are implicit within the

database schema and can be automatically defined at the schema definition level. The

concept hierarchies can be used to transform the data into multiple levels of granularity. For example, data mining patterns regarding sales may be found relating to specific

regions or countries, in addition to individual branch locations.

We study four methods for the generation of concept hierarchies for nominal data,

as follows.

1. Specification of a partial ordering of attributes explicitly at the schema level by

users or experts: Concept hierarchies for nominal attributes or dimensions typically

involve a group of attributes. A user or expert can easily define a concept hierarchy by

specifying a partial or total ordering of the attributes at the schema level. For example, suppose that a relational database contains the following group of attributes:

street, city, province or state, and country. Similarly, a data warehouse location dimension may contain the same attributes. A hierarchy can be defined by specifying the

total ordering among these attributes at the schema level such as street < city <

province or state < country.

2. Specification of a portion of a hierarchy by explicit data grouping: This is essentially the manual definition of a portion of a concept hierarchy. In a large database,

it is unrealistic to define an entire concept hierarchy by explicit value enumeration. On the contrary, we can easily specify explicit groupings for a small portion

of intermediate-level data. For example, after specifying that province and country

118

Chapter 3 Data Preprocessing

form a hierarchy at the schema level, a user could define some intermediate levels

manually, such as {Alberta, Saskatchewan, Manitoba} prairies Canada and

{British Columbia, prairies Canada} Western Canada.

3. Specification of a set of attributes, but not of their partial ordering: A user may

specify a set of attributes forming a concept hierarchy, but omit to explicitly state

their partial ordering. The system can then try to automatically generate the attribute

ordering so as to construct a meaningful concept hierarchy.

Without knowledge of data semantics, how can a hierarchical ordering for an

arbitrary set of nominal attributes be found? Consider the observation that since

higher-level concepts generally cover several subordinate lower-level concepts, an

attribute defining a high concept level (e.g., country) will usually contain a smaller

number of distinct values than an attribute defining a lower concept level (e.g.,

street). Based on this observation, a concept hierarchy can be automatically generated based on the number of distinct values per attribute in the given attribute set.

The attribute with the most distinct values is placed at the lowest hierarchy level. The

lower the number of distinct values an attribute has, the higher it is in the generated concept hierarchy. This heuristic rule works well in many cases. Some local-level

swapping or adjustments may be applied by users or experts, when necessary, after

examination of the generated hierarchy.

Lets examine an example of this third method.

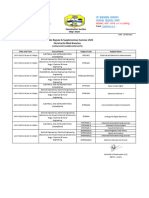

Example 3.7 Concept hierarchy generation based on the number of distinct values per attribute.

Suppose a user selects a set of location-oriented attributesstreet, country, province

or state, and cityfrom the AllElectronics database, but does not specify the hierarchical

ordering among the attributes.

A concept hierarchy for location can be generated automatically, as illustrated in

Figure 3.13. First, sort the attributes in ascending order based on the number of distinct values in each attribute. This results in the following (where the number of distinct

values per attribute is shown in parentheses): country (15), province or state (365), city

(3567), and street (674,339). Second, generate the hierarchy from the top down according to the sorted order, with the first attribute at the top level and the last attribute at the

bottom level. Finally, the user can examine the generated hierarchy, and when necessary,

modify it to reflect desired semantic relationships among the attributes. In this example,

it is obvious that there is no need to modify the generated hierarchy.

Note that this heuristic rule is not foolproof. For example, a time dimension in a

database may contain 20 distinct years, 12 distinct months, and 7 distinct days of the

week. However, this does not suggest that the time hierarchy should be year < month <

days of the week, with days of the week at the top of the hierarchy.

4. Specification of only a partial set of attributes: Sometimes a user can be careless

when defining a hierarchy, or have only a vague idea about what should be included

in a hierarchy. Consequently, the user may have included only a small subset of the

3.5 Data Transformation and Data Discretization

country

15 distinct values

province_or_state

365 distinct values

city

street

119

3567 distinct values

674,339 distinct values

Figure 3.13 Automatic generation of a schema concept hierarchy based on the number of distinct

attribute values.

relevant attributes in the hierarchy specification. For example, instead of including

all of the hierarchically relevant attributes for location, the user may have specified

only street and city. To handle such partially specified hierarchies, it is important to

embed data semantics in the database schema so that attributes with tight semantic

connections can be pinned together. In this way, the specification of one attribute

may trigger a whole group of semantically tightly linked attributes to be dragged in

to form a complete hierarchy. Users, however, should have the option to override this

feature, as necessary.

Example 3.8 Concept hierarchy generation using prespecified semantic connections. Suppose that

a data mining expert (serving as an administrator) has pinned together the five attributes number, street, city, province or state, and country, because they are closely linked

semantically regarding the notion of location. If a user were to specify only the attribute

city for a hierarchy defining location, the system can automatically drag in all five semantically related attributes to form a hierarchy. The user may choose to drop any of

these attributes (e.g., number and street) from the hierarchy, keeping city as the lowest

conceptual level.

In summary, information at the schema level and on attributevalue counts can be

used to generate concept hierarchies for nominal data. Transforming nominal data with

the use of concept hierarchies allows higher-level knowledge patterns to be found. It

allows mining at multiple levels of abstraction, which is a common requirement for data

mining applications.

112

Chapter 3 Data Preprocessing

3.5.1

Data Transformation Strategies Overview

In data transformation, the data are transformed or consolidated into forms appropriate

for mining. Strategies for data transformation include the following:

1. Smoothing, which works to remove noise from the data. Techniques include binning,

regression, and clustering.

2. Attribute construction (or feature construction), where new attributes are constructed and added from the given set of attributes to help the mining process.

3. Aggregation, where summary or aggregation operations are applied to the data. For

example, the daily sales data may be aggregated so as to compute monthly and annual

total amounts. This step is typically used in constructing a data cube for data analysis

at multiple abstraction levels.

4. Normalization, where the attribute data are scaled so as to fall within a smaller range,

such as 1.0 to 1.0, or 0.0 to 1.0.

5. Discretization, where the raw values of a numeric attribute (e.g., age) are replaced by

interval labels (e.g., 010, 1120, etc.) or conceptual labels (e.g., youth, adult, senior).

The labels, in turn, can be recursively organized into higher-level concepts, resulting

in a concept hierarchy for the numeric attribute. Figure 3.12 shows a concept hierarchy

for the attribute price. More than one concept hierarchy can be defined for the same

attribute to accommodate the needs of various users.

6. Concept hierarchy generation for nominal data, where attributes such as street can

be generalized to higher-level concepts, like city or country. Many hierarchies for

nominal attributes are implicit within the database schema and can be automatically

defined at the schema definition level.

Recall that there is much overlap between the major data preprocessing tasks. The first

three of these strategies were discussed earlier in this chapter. Smoothing is a form of

($0...$1000]

($0...$200]

($0...

$100]

($100...

$200]

($200...$400]

($400...$600]

($600...$800]

($800...$1000]

($200... ($300...

$300]

$400]

($400... ($500...

$500]

$600]

($600... ($700...

$700]

$800]

($800... ($900...

$900] $1000]

Figure 3.12 A concept hierarchy for the attribute price, where an interval ($X . . . $Y ] denotes the range

from $X (exclusive) to $Y (inclusive).

Potrebbero piacerti anche

- Data Mining - Mining Sequential PatternsDocumento10 pagineData Mining - Mining Sequential PatternsRaj EndranNessuna valutazione finora

- Data Mining-Mining Sequence Patterns in Biological DataDocumento6 pagineData Mining-Mining Sequence Patterns in Biological DataRaj EndranNessuna valutazione finora

- Data Mining-Graph MiningDocumento9 pagineData Mining-Graph MiningRaj EndranNessuna valutazione finora

- Data Mining-Outlier AnalysisDocumento6 pagineData Mining-Outlier AnalysisRaj EndranNessuna valutazione finora

- Data Mining-Spatial Data MiningDocumento8 pagineData Mining-Spatial Data MiningRaj EndranNessuna valutazione finora

- 5.1 Mining Data StreamsDocumento16 pagine5.1 Mining Data StreamsRaj EndranNessuna valutazione finora

- Data Mining-Mining Time Series DataDocumento7 pagineData Mining-Mining Time Series DataRaj EndranNessuna valutazione finora

- Data Mining-Partitioning MethodsDocumento7 pagineData Mining-Partitioning MethodsRaj Endran100% (1)

- Data Mining-Multimedia DataminingDocumento8 pagineData Mining-Multimedia DataminingRaj EndranNessuna valutazione finora

- Data Mining-Support Vector Machines and Associative Classifiers RevisedDocumento4 pagineData Mining-Support Vector Machines and Associative Classifiers RevisedRaj EndranNessuna valutazione finora

- Data Mining-Model Based ClusteringDocumento8 pagineData Mining-Model Based ClusteringRaj EndranNessuna valutazione finora

- Data Mining-Constraint Based Cluster AnalysisDocumento4 pagineData Mining-Constraint Based Cluster AnalysisRaj Endran100% (1)

- Data Mining - Other ClassifiersDocumento7 pagineData Mining - Other ClassifiersRaj EndranNessuna valutazione finora

- Data Mining - Data ReductionDocumento6 pagineData Mining - Data ReductionRaj EndranNessuna valutazione finora

- Data Mining - Bayesian ClassificationDocumento6 pagineData Mining - Bayesian ClassificationRaj EndranNessuna valutazione finora

- Data Mining - DiscretizationDocumento5 pagineData Mining - DiscretizationRaj EndranNessuna valutazione finora

- Data Mining-BackpropagationDocumento5 pagineData Mining-BackpropagationRaj Endran100% (1)

- Data Mining-Applications, IssuesDocumento9 pagineData Mining-Applications, IssuesRaj EndranNessuna valutazione finora

- Data Mining-Rule Based ClassificationDocumento4 pagineData Mining-Rule Based ClassificationRaj EndranNessuna valutazione finora

- 02 Data Mining-Partitioning MethodDocumento8 pagine02 Data Mining-Partitioning MethodRaj EndranNessuna valutazione finora

- Data Mining-Data WarehouseDocumento7 pagineData Mining-Data WarehouseRaj EndranNessuna valutazione finora

- 08 Data Mining-Other ClassificationsDocumento4 pagine08 Data Mining-Other ClassificationsRaj EndranNessuna valutazione finora

- Data Mining - Density Based ClusteringDocumento8 pagineData Mining - Density Based ClusteringRaj EndranNessuna valutazione finora

- Data Mining - Outlier AnalysisDocumento11 pagineData Mining - Outlier AnalysisRaj Endran100% (2)

- Shoe Dog: A Memoir by the Creator of NikeDa EverandShoe Dog: A Memoir by the Creator of NikeValutazione: 4.5 su 5 stelle4.5/5 (537)

- The Yellow House: A Memoir (2019 National Book Award Winner)Da EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Valutazione: 4 su 5 stelle4/5 (98)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeDa EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeValutazione: 4 su 5 stelle4/5 (5794)

- The Little Book of Hygge: Danish Secrets to Happy LivingDa EverandThe Little Book of Hygge: Danish Secrets to Happy LivingValutazione: 3.5 su 5 stelle3.5/5 (400)

- Grit: The Power of Passion and PerseveranceDa EverandGrit: The Power of Passion and PerseveranceValutazione: 4 su 5 stelle4/5 (588)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureDa EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureValutazione: 4.5 su 5 stelle4.5/5 (474)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryDa EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryValutazione: 3.5 su 5 stelle3.5/5 (231)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceDa EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceValutazione: 4 su 5 stelle4/5 (895)

- Team of Rivals: The Political Genius of Abraham LincolnDa EverandTeam of Rivals: The Political Genius of Abraham LincolnValutazione: 4.5 su 5 stelle4.5/5 (234)

- Never Split the Difference: Negotiating As If Your Life Depended On ItDa EverandNever Split the Difference: Negotiating As If Your Life Depended On ItValutazione: 4.5 su 5 stelle4.5/5 (838)

- The Emperor of All Maladies: A Biography of CancerDa EverandThe Emperor of All Maladies: A Biography of CancerValutazione: 4.5 su 5 stelle4.5/5 (271)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaDa EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaValutazione: 4.5 su 5 stelle4.5/5 (266)

- On Fire: The (Burning) Case for a Green New DealDa EverandOn Fire: The (Burning) Case for a Green New DealValutazione: 4 su 5 stelle4/5 (74)

- The Unwinding: An Inner History of the New AmericaDa EverandThe Unwinding: An Inner History of the New AmericaValutazione: 4 su 5 stelle4/5 (45)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersDa EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersValutazione: 4.5 su 5 stelle4.5/5 (345)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyDa EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyValutazione: 3.5 su 5 stelle3.5/5 (2259)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreDa EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreValutazione: 4 su 5 stelle4/5 (1090)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)Da EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Valutazione: 4.5 su 5 stelle4.5/5 (121)

- Her Body and Other Parties: StoriesDa EverandHer Body and Other Parties: StoriesValutazione: 4 su 5 stelle4/5 (821)

- Elastic Modulus SFRCDocumento9 pagineElastic Modulus SFRCRatul ChopraNessuna valutazione finora

- P 1 0000 06 (2000) - EngDocumento34 pagineP 1 0000 06 (2000) - EngTomas CruzNessuna valutazione finora

- Building Program Template AY02Documento14 pagineBuilding Program Template AY02Amy JaneNessuna valutazione finora

- Micron Interview Questions Summary # Question 1 Parsing The HTML WebpagesDocumento2 pagineMicron Interview Questions Summary # Question 1 Parsing The HTML WebpagesKartik SharmaNessuna valutazione finora

- 23 Things You Should Know About Excel Pivot Tables - Exceljet PDFDocumento21 pagine23 Things You Should Know About Excel Pivot Tables - Exceljet PDFRishavKrishna0% (1)

- Cs8792 Cns Unit 1Documento35 pagineCs8792 Cns Unit 1Manikandan JNessuna valutazione finora

- A320 Basic Edition Flight TutorialDocumento50 pagineA320 Basic Edition Flight TutorialOrlando CuestaNessuna valutazione finora

- PLT Lecture NotesDocumento5 paginePLT Lecture NotesRamzi AbdochNessuna valutazione finora

- 4th Sem Electrical AliiedDocumento1 pagina4th Sem Electrical AliiedSam ChavanNessuna valutazione finora

- Avalon LF GB CTP MachineDocumento2 pagineAvalon LF GB CTP Machinekojo0% (1)

- SBL - The Event - QuestionDocumento9 pagineSBL - The Event - QuestionLucio Indiana WalazaNessuna valutazione finora

- General Field Definitions PlusDocumento9 pagineGeneral Field Definitions PlusOscar Alberto ZambranoNessuna valutazione finora

- SILABO 29-MT247-Sensors-and-Signal-ConditioningDocumento2 pagineSILABO 29-MT247-Sensors-and-Signal-ConditioningDiego CastilloNessuna valutazione finora

- Information Security Chapter 1Documento44 pagineInformation Security Chapter 1bscitsemvNessuna valutazione finora

- Role of The Government in HealthDocumento6 pagineRole of The Government in Healthptv7105Nessuna valutazione finora

- Engineering Management (Final Exam)Documento2 pagineEngineering Management (Final Exam)Efryl Ann de GuzmanNessuna valutazione finora

- Unit-5 Shell ProgrammingDocumento11 pagineUnit-5 Shell ProgrammingLinda BrownNessuna valutazione finora

- Apm p5 Course NotesDocumento267 pagineApm p5 Course NotesMusumbulwe Sue MambweNessuna valutazione finora

- Zelio Control RM35UA13MWDocumento3 pagineZelio Control RM35UA13MWSerban NicolaeNessuna valutazione finora

- Ss 7 Unit 2 and 3 French and British in North AmericaDocumento147 pagineSs 7 Unit 2 and 3 French and British in North Americaapi-530453982Nessuna valutazione finora

- Accomplishment ReportDocumento1 paginaAccomplishment ReportMaria MiguelNessuna valutazione finora

- Course Specifications: Fire Investigation and Failure Analysis (E901313)Documento2 pagineCourse Specifications: Fire Investigation and Failure Analysis (E901313)danateoNessuna valutazione finora

- United Nations Economic and Social CouncilDocumento3 pagineUnited Nations Economic and Social CouncilLuke SmithNessuna valutazione finora

- Basic DfwmacDocumento6 pagineBasic DfwmacDinesh Kumar PNessuna valutazione finora

- Gabby Resume1Documento3 pagineGabby Resume1Kidradj GeronNessuna valutazione finora

- Office Storage GuideDocumento7 pagineOffice Storage Guidebob bobNessuna valutazione finora

- Lab Session 7: Load Flow Analysis Ofa Power System Using Gauss Seidel Method in MatlabDocumento7 pagineLab Session 7: Load Flow Analysis Ofa Power System Using Gauss Seidel Method in MatlabHayat AnsariNessuna valutazione finora

- Government of West Bengal Finance (Audit) Department: NABANNA', HOWRAH-711102 No. Dated, The 13 May, 2020Documento2 pagineGovernment of West Bengal Finance (Audit) Department: NABANNA', HOWRAH-711102 No. Dated, The 13 May, 2020Satyaki Prasad MaitiNessuna valutazione finora

- Himachal Pradesh Important NumbersDocumento3 pagineHimachal Pradesh Important NumbersRaghav RahinwalNessuna valutazione finora

- NOP PortalDocumento87 pagineNOP PortalCarlos RicoNessuna valutazione finora