Woodard • Gattuccio • Brain Networking Basics

Networking Basics

by Shay Woodard, Nick Gattuccio, & Marshall Brain

The following article is excerpted from Chapter Two, “Networking Basics,” of

the book Microsoft Technology: Networking, Concepts, Tools, by Woodard, Gattuccio

and Brain (Copyright © 1999 by Prentice Hall PTR, Prentice-Hall, Inc.)

Reprinted by permission.

A network is two things – technology and business. While the first of these is quite obvious, network

technology is sometimes so overwhelming to non-technical managers that they lose sight of its basic role –

to perform as a business tool. This is a classic case of losing the forest for the trees. While network technol-

ogy is very important, its complexity should not obscure its role as a business tool.

In this chapter, our goal is twofold. First, we hope to demystify network technology by painting a

detailed picture of its key pieces and defining the technology’s key vocabulary. While a great deal of tech-

nology is involved, it all comes together in a straightforward way. Understanding the vocabulary clarifies

nine-tenths of the technology. In Chapter One we introduced network technology in terms of the network

services it provides. While this is the plan we’ll follow throughout the book, it is nevertheless important to

understand network technology nuts and bolts. Our goal in this chapter, then, is to address network technol-

ogy at its most fundamental layer – the wires, data, and connections.

This chapter’s second goal is to highlight the central business issues that fall under the rubric of manag-

ing technology. In few other areas of business planning is there greater risk of technical systems turning the

tables on managers and creating a situation where the technology dominates business. The two – technology

and business – work hand-in-glove because a poorly implemented network imposes constraints

that narrow business planning options.

PART ONE: The ABCs of Network Technology

The basic unit of network organization is the LAN – the Local Area Network. This is very important.

Nearly everything in network architecture and design builds on the fundamental principle that a LAN is the

network building block. While in practice, LANs are typically broken up into smaller units – LAN segments

or workgroups, for example – this does not alter the LAN’s status as the fundamental unit of network order.

It is also helpful to think of LANs not as computing technology, but as communication technology.

Their central function is letting users share computing resources by allowing data to flow easily from device

to device. On this simple principle, designers use cable to connect computers and other network devices

(printers, for example) within a limited area (for example, within a department or on a floor of a multistory

building). Then, using these LANs for building blocks, networks can expand to virtually any scale by simply

linking together the individual LANs. This allows you to link floors of a building (each with its own LAN)

into a company-wide network, or link several buildings on a campus, or even link up sites around the world

into a powerful enterprise-wide network. This LAN-based model lets you build networks on virtually any

geographical scale.

Copyright © 1998 by Interface Technologies, Inc. All rights reserved. Page 1

Communication Media & the Network Interface

In a network, information (data) traverses a communications medium (typically a wire) as electrical sig-

nals that originate and terminate in computing devices like computers and printers. To visualize the process,

picture a busy freeway as seen from the air. Traffic flows smoothly and vehicles enter and exit at ramps

located every few miles along the route. Viewed from above, the traffic appears to be a single continuous

stream. Upon closer inspection, however, you notice that vehicles enter and leave the freeway in a seemingly

random fashion. But this is not the case. The motion is not random. Each vehicle has its own point of origin

and its own destination, irrespective of the streaming flow of traffic.

The analogy is not perfect, but it successfully isolates three basic elements of a network: its communica-

tion medium (typically a cable, but radio frequency and infrared transmissions can also serve as a data com-

munications medium); its data (which flows in its most elementary binary form – essentially zeros and

ones); and the points of contact between network wires and the computing devices to which they are con-

nected. These latter are called network interface cards (NICs). These three are the highway, the traffic, and

the entry/exit ramps. (There is a fourth critical piece on this layer, the connection devices like hubs and

switches; we’ll discuss these shortly.)

Cable is the communication medium over which LANs pass data – the electronic highway. As with the

transportation analogy, the medium can take many forms (freeway, boulevard, street, or road). Network

cables are typically coaxial, twisted-pair, or fiber optic. Some are shielded – that is, insulated to help prevent

electromagnetic interference from corrupting the signal – while others are not. In twisted-pair cable, two

insulated wires are twisted, then encased in a sheath. Coaxial, on the other hand, has a solid wire at its center

which is surrounded by insulation, then an outer metal screen. Both transmit data in the form of electrical

signals. With fiber-optic cable, however, data is transmitted as modulated light waves traveling through a

glass medium.

These different types of cable vary in cost and transmission capacity, and each typically serves a spe-

cific network role. For example, on LANs, unshielded twisted pair (UTP) cable is most commonly used

because it is inexpensive, easy to work with (it is thin and flexible, much like telephone wire), and it per-

forms well over a typical LAN’s short distances. On the other hand, a high-speed backbone (high-capacity

cables that connect LANs together) might use a fiber optic cable because of its large transmission capacity.

Fiber optic cable is also non-conductive, which is an advantage for external

cables (between buildings, for example) that are vulnerable to lightning strikes. Coaxial cable (thick, stiff

cable similar to that used for TV-to-VCR connections) is rapidly losing favor on modern networks because it

is difficult to work with and integrates poorly with switched networks.

Network devices make their physical connections to network cable through a special adapter called a

network interface card (NIC). The network transfers data over cable in its most elementary form – as a

stream of bits (zeros and ones). The NIC takes in data and converts it to a form that fits the transmission

medium – the cable. Computers hand their data over to the NIC, which chops them into small segments and

puts them into “envelopes” called packets. Then, the NIC moves the packets onto the network. The NIC also

does the reverse – picks incoming packets off the network and hands them over to the computer. NICs come

standard on many computers, printers, and other devices designed for network use; where one is not built in,

it can be installed.

Data passes over the network inside electronic envelopes called packets. (We’ll discuss packets and

packet switching in more detail in Chapter Ten.) Quite simply, though, when a user at one workstation

wishes to exchange information with a user at another, network software on the first user’s workstation

places the data into one or more packets. Packets have a limited size, so if the “message” is too large to fit in

a packet, the software breaks it up into manageable fragments and places each into its own packet. The

packet also contains special information, including its destination address. Then, the NIC at the sender’s

workstation dispatches the packet onto the LAN. As the packet transits the network, every device attached to

the local network “sees” it. If the device is not the intended destination, it ignores the packet. If, however, the

address on the packet matches that of the network device, the NIC at the receiver’s end copies the packet,

removes the data from it, and hands that data over to the computer, whose own software takes over.
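The exchange described above can be sketched in a few lines of Python. This is only an illustrative model, not how any particular NIC or network software is written: the eight-byte packet size, the `sequence` field used to put fragments back in order, and all of the class and function names are assumptions made for the example.

```python
from dataclasses import dataclass

MAX_PAYLOAD = 8  # bytes per packet; an assumed, deliberately tiny limit


@dataclass
class Packet:
    destination: str  # address of the intended receiver
    sequence: int     # fragment position (assumed field, used for reassembly)
    payload: bytes    # the fragment of data carried in the "envelope"


def packetize(message: bytes, destination: str,
              max_payload: int = MAX_PAYLOAD) -> list[Packet]:
    """Break a message into fragments and wrap each in an addressed packet."""
    return [
        Packet(destination, seq, message[start:start + max_payload])
        for seq, start in enumerate(range(0, len(message), max_payload))
    ]


class Nic:
    """A NIC that sees every packet on the wire but copies only its own."""

    def __init__(self, address: str) -> None:
        self.address = address
        self.received: list[Packet] = []

    def see(self, packet: Packet) -> None:
        if packet.destination == self.address:
            self.received.append(packet)  # copy the packet off the network
        # any other packet is simply ignored

    def reassemble(self) -> bytes:
        """Hand the data, in order, over to the computer."""
        ordered = sorted(self.received, key=lambda p: p.sequence)
        return b"".join(p.payload for p in ordered)


def send_on_lan(packets: list[Packet], nics: list[Nic]) -> None:
    """Every device attached to the local network sees every packet."""
    for packet in packets:
        for nic in nics:
            nic.see(packet)
```

Sending a 22-byte message to station "B" on a three-station LAN produces three packets. All three NICs see each packet, but only B's NIC keeps them.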

The above illustration simplifies the exchange somewhat, but it nevertheless paints a sensible picture of

how a network functions at its basic level – at the layer of the wire. The principles of packets and addressing,

and the view of a network as fundamentally wire, and the role that software plays in adding “intelligence” to

the flow of network communication are among networking’s core principles.

Moving Data: Ethernet & Fast Ethernet, etc.

Data needs an engine to drive it. While the wire itself is the transmission medium, different technologies

handle the task of physically moving the data over the wires and regulating the characteristics of the electri-

cal signals that carry it. The most common of these is Ethernet, in place in a reported 80% of LANs. Other

technologies include Token Ring, FDDI (fiber distributed data interface), LocalTalk (for Apple Macin-

toshes), and some others. Each of these standards governs several key features of how data moves around a

network. Two technical aspects involve the physical characteristics of the electrical signals themselves, and

the manner in which the data is packaged (the look and feel of the packets we spoke of). Other issues involve

the speed at which data can flow, the physical length of wire it can support, and the network’s topology (that

is, the physical layout of the network, as in a star, ring, or straight-line bus). Finally, there is the very impor-

tant issue of how it brokers shared access to the network medium – the cable – to avoid data “collisions.”

Ethernet is the most popular option for controlling this physical layer of data flow because it offers an

excellent balance of cost, speed, and ease of implementation. Relatively inexpensive, Ethernet supports data

flowing at a rate of 10 million bits per second (10 Mbps), although an improved Ethernet, called Fast Ether-

net, supports speeds up to 100 Mbps. Its main weakness shows up on busy or crowded LAN segments,

where data “collisions” may degrade network performance. This may occur because the access method

Ethernet uses to push data onto the network allows more than one station to access the wire at the same time.

If this happens, a collision will occur. Ethernet corrects the collision by prompting those involved to wait

briefly, then re-send their packets, but the error and recovery require time. On a very busy network, these

delays add up, which is why frequent collisions degrade performance.

Ethernet uses an access method wherein each device, or network node, initiates its own access to the

network. When a workstation, for example, wants to send data over the network, its NIC “looks” to see if the

line is free. If it detects another signal on the wire, it waits a short interval, then tries again. When it sees the

line is clear, it sends its packets. The problem occurs when more than one network node tries to access the

network at precisely the same time. Both see a free network, so both dispatch their packets, which then col-

lide.
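To make the access method concrete, here is a small simulation in Python. It is a sketch under simplifying assumptions, not a model of real Ethernet hardware: time is divided into slots, each packet occupies exactly one slot (so the line always looks free at the start of a slot), and the length of the "short interval" after a collision is drawn at random from a range that doubles with each collision. That doubling rule and every name in the code are assumptions of the example.

```python
import random


def simulate_shared_wire(stations: list[str], seed: int = 1,
                         max_slots: int = 1000) -> tuple[list[str], int]:
    """Return (order in which stations got a packet through, collision count)."""
    rng = random.Random(seed)
    wait = {name: 0 for name in stations}      # slots each station must still wait
    attempts = {name: 0 for name in stations}  # collisions each station has had
    delivered: list[str] = []
    collisions = 0

    for _ in range(max_slots):
        if not wait:
            break  # every station has sent its packet
        # Stations whose wait has expired see a free line and transmit.
        ready = [name for name, slots in wait.items() if slots == 0]
        for name in wait:
            if wait[name] > 0:
                wait[name] -= 1
        if len(ready) == 1:
            # Only one station used the wire: its packet gets through.
            delivered.append(ready[0])
            del wait[ready[0]]
        elif len(ready) > 1:
            # Two or more stations saw a free line at the same moment.
            collisions += 1
            for name in ready:
                attempts[name] += 1
                wait[name] = rng.randint(0, 2 ** attempts[name] - 1)
    return delivered, collisions
```

Because every station starts out ready to send, any run with two or more stations begins with a collision. The random waits then separate the stations so that each eventually gets the wire to itself.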

An alternative to Ethernet is a standard called Token Ring. A Token Ring network avoids collisions

altogether because it uses a different access method that doesn’t allow devices to initiate their access inde-

pendently. In a Token Ring network, a special data packet called a token passes around the ring from device

to device in just one direction. If a device wishes to access the network to transmit data, it must first wait

until it receives the token. It cannot initiate access without it. Once it obtains the token, it dispatches its data,

along with the token, out onto the ring. The destination receives the message, pulls it from the network, then

releases the token and the process repeats.
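The token-passing method can be sketched the same way. The following Python model is illustrative only: it assumes each station sends at most one message per visit of the token, and the function name and data layout are invented for the example.

```python
from collections import deque


def run_token_ring(ring: list[str],
                   outbox: dict[str, list[tuple[str, str]]]
                   ) -> list[tuple[str, str, str]]:
    """Pass the token around the ring until every queued message is delivered.

    ring   -- station names in the order the token visits them
    outbox -- station name -> list of (destination, message) waiting to be sent
    Returns deliveries as (sender, destination, message) in the order they occur.
    """
    queues = {name: deque(outbox.get(name, [])) for name in ring}
    deliveries = []
    holder = 0  # index of the station that currently holds the token
    while any(queues.values()):
        station = ring[holder]
        if queues[station]:
            # Only the token holder may transmit, so collisions cannot occur.
            destination, message = queues[station].popleft()
            deliveries.append((station, destination, message))
        # Release the token to the next station, always in the same direction.
        holder = (holder + 1) % len(ring)
    return deliveries
```

With stations A, B, and C on the ring, where A has two messages queued and C has one, A sends first, the token passes B (which has nothing to send), C sends, and A sends its second message only when the token comes around again.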

On a corporate LAN, Fast Ethernet is typically the standard of choice – in part because it is the market

leader, and in part due to its great value as a cost-effective, relatively high-speed option. Beyond the scope of

LANs, however, networks may require even higher-speed connections and more reliable data flow. These

include network backbone connections that link geographically separated LANs. (Short-run backbones – for

example, those connecting LANs on separate floors of multistory buildings – can function well with Fast

Ethernet.) Links between multiple buildings, as on a university or corporate campus, are typically

served by a robust connection like FDDI. Standing for Fiber Distributed Data Interface, FDDI offers

100 Mbps connection speeds that are highly stable (i.e., resistant to electromagnetic interference or other

line “noise”). FDDI is also appropriate for peer-to-peer server connections, particularly where a large, busy

network is supported by multiple groups of pooled servers.

It should be noted that in actual practice none of these technologies delivers its rated speed. Information

in the data’s envelope (the packet headers and trailers) adds about 30% to the data as “packet overhead.”

Add to this the gaps between packets and data collisions (with Ethernet), and a 10 Mbps Ethernet network

is able to sustain throughput of only about 2.5 Mbps.
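A rough calculation shows how these figures relate. The sketch below assumes one particular reading of the 30% figure, namely that headers and trailers add 30% on top of the data itself. Under that assumption it works out how much of the remaining loss would have to come from inter-packet gaps and collisions. The function names are invented for the example.

```python
def payload_ceiling_mbps(rated_mbps: float, overhead_fraction: float) -> float:
    """Payload rate left if headers and trailers add `overhead_fraction`
    on top of the data (an assumed reading of the 30% figure)."""
    return rated_mbps / (1.0 + overhead_fraction)


def remaining_efficiency(sustained_mbps: float, ceiling_mbps: float) -> float:
    """Share of the payload ceiling that survives gaps and collisions."""
    return sustained_mbps / ceiling_mbps


ceiling = payload_ceiling_mbps(10.0, 0.30)       # roughly 7.7 Mbps of payload
efficiency = remaining_efficiency(2.5, ceiling)  # 0.325 of that ceiling
```

On this reading, packet overhead alone lowers a 10 Mbps network to roughly 7.7 Mbps of useful data, and gaps and collisions would account for the rest of the drop to 2.5 Mbps.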

So far we have presented a very simple picture of a network that consists of devices, data, NICs, and

cable. This picture is based on a simple model, called a bus network. In such a LAN, the cable is viewed as a

single line with devices connected at various points along the way. Such a LAN bus topology is rarely used

any more, but its value comes in how well it illustrates the interaction of the basic network elements. While

a bus topology itself is archaic, its pieces and the ways in which they interact are not.

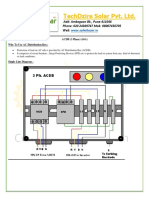

Figure 2-1 Basic LAN Bus Network

Basic LAN Bus Network Topology. This simple topology illustrates how the fundamental

“wire-layer” network pieces – device, data, NIC, and transmission medium – interact to

complete network connections. While the bus topology is outdated, its model of network

interactions is not.

The concepts illustrated in Figure 2-1 apply to more common topologies as well. While significant differences

separate the topologies of LAN bus, Ethernet, and Token Ring networks, the manner in which their

basic pieces interact remains largely consistent. Network professionals may bristle at this last statement, as

technical subtleties tempt one to cite exceptions and qualifications to such a blanket statement. For the pur-

poses of this overview, however, the picture is reliable – but with one notable exception. So far, we have not

mentioned one of the most important elements of a LAN – the hub. The hub, along with other network con-

necting devices, enables networks to expand. If LANs are a network’s basic building blocks, then the central

connectors are the glue. But this is intelligent glue.

The Central Connections: Hubs, Bridges, Routers & Switches

We said earlier that LANs are the basic building blocks of a computer network. Large-scale networks

knit LANs together with cables (or another transmission medium). But linking LANs together introduces complexity,

particularly if the network has a great many LANs, or if one or more are separated geographically.

As we saw in the bus model, all of a network’s devices share the communications medium (the cable) in

such a way that every “message” passes every device, and every device “looks” at every message. On a

LAN’s small scale, distributing all of the traffic throughout the network does not typically overwhelm it.

When several LANs are joined into a large, wide-ranging network, however, the potential for problems is

enormous.

It would be extremely inefficient for a 50-LAN network, for example, to distribute all of its traffic over

every one of its segments. A much better system would build on the data packet’s inherent addressability.

Recall that data packets contain the data’s destination “address.” But while packets contain the address of

their destination, the packets themselves have no way of using the address information; they simply

traverse the network. Using the address is the NIC’s job. The NIC pays attention to passing traffic, and when it notices

that a packet is addressed to its attached device, it copies the packet off the network. The packets themselves

have no “intelligence.”

Another problem is that LANs have physical limitations. There are limits to how many workstations a

LAN can efficiently support, and to how much traffic it can carry. There is also a limit on how far a run of

LAN cable can extend (about 100 meters) before its signal degrades. The point is, building large-scale net-

works is constrained by technology that only performs well on a small scale – on the scale of the LAN.

These issues create an enormous technical constraint on network scalability. Without solutions, large-scale

networks would be impossible to build. Fortunately, these problems are solved by the technology of central

connection points – hubs, bridges, routers, and switches. These devices allow networks to be segmented, and

they also add “address-intelligence” at the level of the physical wire. This makes the potentially overwhelm-

ing traffic problem manageable. They also solve the problem of line-length constraints – where the physics

of data transmission over wire degrades the electrical signal over distance – by acting as repeaters.

Hubs

A hub is among the most critical elements of a LAN, as it is the point of central connection for all of the

LAN’s shared devices. A typical hub has multiple ports to which a LAN’s devices connect. The actual num-

ber of ports and the transmission capacity of each varies widely, as does their price and additional features.

Regardless, a hub serves the same function as the shared cable in the bus model – it connects devices on the

LAN. Figure 2-2 depicts the hubbed LAN.

Figure 2-2 A Simple Hubbed LAN

Rather than a straight-line connection, as with a bus topology, a hubbed LAN relies on a

hub to function as a central connector. Traffic from any device on the LAN goes to the hub,

which “repeats” the message out to all of its connected devices.

On a hubbed LAN, a device sends data by first passing it (through its NIC) to the hub. The hub, in

turn, repeats the “message” back out to all of its connected devices. Again, as with the bus LAN, only the

device to which the message is addressed will copy the message, while the others ignore it. By repeating the

message, the hub extends the effective distance the message signal can travel, since the hub transmits what

is essentially a fresh, new signal. This way, every device on the LAN can be as far away from the hub as the

connecting medium will allow (100 meters). That would rarely be practical, but it is possible. What’s

important is that this lets a LAN scale up very easily, since adding new devices does not increase the physi-

cal length of the LAN (as adding devices to a bus would), but only adds a new connection to it. If a LAN

grows to the point where all of its hub ports are in use, then you can simply add a second hub to one of the

original hub’s ports. The second hub repeats the first hub’s transmissions through all of its ports. Hub jump-

ing like this allows enormous flexibility and scalability in LAN design, although there are physical limits to

how many jumps are allowed.
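The hub's behavior, including a second hub attached to one of the first hub's ports, can be modeled in a few lines of Python. This is a sketch for illustration only. The class names are invented, and one simplification is assumed: the hub does not echo a message back out the port it arrived on.

```python
class Device:
    """A network device whose NIC copies only messages addressed to it."""

    def __init__(self, address):
        self.address = address
        self.inbox = []  # messages copied off the network
        self.seen = 0    # every message that reached this device's NIC

    def receive(self, destination, data, came_from=None):
        self.seen += 1
        if destination == self.address:
            self.inbox.append(data)


class Hub:
    """Repeats whatever it receives out of every other port."""

    def __init__(self, name):
        self.name = name
        self.ports = []

    def connect(self, node):
        self.ports.append(node)
        if isinstance(node, Hub):
            node.ports.append(self)  # a hub-to-hub link carries traffic both ways

    def receive(self, destination, data, came_from=None):
        for node in self.ports:
            if node is not came_from:  # assumed: no echo to the arrival port
                node.receive(destination, data, came_from=self)
```

If devices A and B hang off the first hub and devices C and D off a second hub plugged into it, a message from A to D reaches B, C, and D, but only D copies it. The second hub simply repeats what the first hub sent.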

The effective scale of a hubbed LAN is also constrained by network traffic. Because a hub repeats every

transmission to all of its connected devices, hubs can easily generate a volume of network traffic that exceeds

the cable’s ability to carry it. Exceeding the medium’s effective transmission capacity will degrade network

performance. When a LAN reaches its maximum effective size, it is necessary to install another LAN and

then connect the two. From another perspective, installing another LAN allows designers to segment a net-

work, effectively breaking it into smaller pieces, improving performance while adding even more scalability.

Hubs connect devices within a LAN. Three main types of devices are designed to connect inter-

networked LANs – routers, bridges, and switches.

Routers

A large network that links, for example, 25 LANs, might support five hundred or more users. Under the

hub model, where data is broadcast to all connected devices, a network such as this would collapse under the

weight of its traffic. To scale beyond the LAN, there clearly needs to be a way to segment and route network

traffic. A large network needs to have intelligence on the wire so it can read data-packet address information

and use it to segment and route its network traffic.

Figure 2-3 Inter-Networked LANs

Using “intelligent” connectors like routers and switches, virtually unlimited numbers of

LANs may be inter-networked into enterprise-wide networks. Such networks are not limited

to just a single geographical location, but can unite a network even on a global scale.

It is reported that typically 80% of transmissions on a workgroup or departmental LAN are destined for

stations within that LAN. Filtering out that 80% and keeping it off the larger network lets designers build

both scale and efficiency into a network. Routers are among the most popular technologies to filter network

traffic.

A router is a device that, like a hub, has ports through which data passes. With a router, however, data

passes only from one LAN to another. Unlike hubs, routers do not blindly repeat the data they receive.

Instead, routers are “intelligent” devices. As data packets flow into them, they inspect the packet’s header

(which contains the packet’s destination address) and based on this information they make a routing decision.

The most obvious decision arises when the destination address is on the same LAN as

the point of origin. In this case, the router stops the packet from leaving the LAN.

But a router is capable of making more than simple yes/no forwarding decisions. Because routers under-

stand communications protocols (like IP, which governs Internet addressing), routers can make configurable

routing decisions. For example, administrators can use routers to help balance network traffic by configuring

them to use predetermined pathways.
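A minimal sketch of this decision, written in Python with the standard `ipaddress` module, is shown below. It is not how any particular router is implemented. The `Router` class, the port names, and the rule of preferring the most specific matching network are assumptions of the example, and the sketch returns `None` both when traffic should stay on its own LAN and when no configured pathway matches.

```python
import ipaddress


class Router:
    """Holds administrator-configured pathways: (network, outgoing port)."""

    def __init__(self):
        self.routes = []

    def add_route(self, cidr: str, port: str) -> None:
        self.routes.append((ipaddress.ip_network(cidr), port))

    def _best(self, address):
        # Assumed rule: the most specific (longest-prefix) network wins.
        matches = [(net, port) for net, port in self.routes if address in net]
        return max(matches, key=lambda m: m[0].prefixlen, default=None)

    def decide(self, source: str, destination: str):
        """Return the outgoing port name, or None if the packet is not forwarded."""
        src_route = self._best(ipaddress.ip_address(source))
        dst_route = self._best(ipaddress.ip_address(destination))
        if dst_route is None:
            return None  # no configured pathway for this destination
        if src_route is not None and src_route[0] == dst_route[0]:
            return None  # same LAN as the point of origin: keep it local
        return dst_route[1]
```

Configured with one network per LAN, such a router keeps local traffic off the larger network and sends everything else out the port an administrator has chosen for it.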

Because routers are configurable, they lend themselves to complex uses, as in a network security sys-

tem. Routers can play an important role in a network’s firewall, for example. Another strength is that routers

can connect LANs that use different protocols – for example, connecting an Ethernet LAN with a Token

Ring LAN. The tradeoff for all of this intelligence and functionality is twofold. First, routers are typically

more expensive than other inter-network connection devices. Second, they are more complicated to set up.

Bridges

Bridges are simpler and less expensive than routers, but offer similar inter-network capability. Typi-

cally, bridges have ports connected to two or more separate LANs. Rather than complicated routing deci-

sions, however, bridges make straightforward yes/no decisions about forwarding the data packets they

receive. Bridges base their decisions on the packet’s destination address, which they compare to a stored table

of known network addresses. If the destination is located on the same LAN from which the packet origi-

nated, it will not be allowed across the bridge. If addressed to a device on another LAN, the packet is

bridged to a port through which it can reach its destination.
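The bridge's yes/no decision is simple enough to express directly. The Python sketch below is illustrative, with invented names and a hand-filled address table. It also makes one assumption the text does not cover: a packet for an address missing from the table is simply not forwarded, whereas real bridges typically send such packets out on every other port.

```python
class Bridge:
    """Forwards a packet only if its destination lives on a different LAN."""

    def __init__(self, address_table: dict[str, str]):
        # Stored table of known addresses: device address -> LAN it is on.
        self.address_table = dict(address_table)

    def forward(self, arrived_on: str, destination: str):
        """Return the LAN to forward onto, or None to keep the packet off the bridge."""
        target_lan = self.address_table.get(destination)
        if target_lan is None or target_lan == arrived_on:
            return None  # unknown (assumed: not forwarded) or already on the right LAN
        return target_lan
```

A packet from LAN 1 addressed to another LAN 1 device never crosses the bridge, while one addressed to a device on LAN 2 is passed to the port for LAN 2.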

Like routers, bridges inspect information in the packet’s header. But because they make more limited

use of packet information, they look at far less of the header information than routers do. This makes them

very fast. They are also protocol-independent, not because they “understand” protocols, as routers do, but

because they can ignore protocol issues altogether. This makes them extremely versatile on a homogeneous

network. While not as sophisticated as routers, bridges do possess some filtering capabilities.

Switches

Switches are the most recent, most sophisticated and most expensive of inter-networking devices. Like

routers and bridges, switches also link LANs. Their strength comes in their ability to link many LANs. They

are like bridges, but because they have multiple ports they can manage complex switching among multiple

LANs. This makes them useful tools for segmenting network traffic. Switches can also allocate bandwidth

in their segment connections. This allows network administrators to balance network traffic to reduce con-

gestion on workgroups while still providing high-bandwidth “pipelines” where they are needed (in connec-

tions to server pools, for example).

Switches provide the same kind of address-intelligence (filtering and forwarding) that routers provide.

But rather than working with the IP address, as routers do, a switch uses a special media access control

(MAC) address that works at a very low level, where the network devices interface with the medium (cable).

Network nodes, or devices, are mapped to unique MAC addresses, and the switch can then handle forwarding

and filtering tasks independent of protocols or other constraints.

Among the greatest strengths of a switch is its ability to support connections across multiple LANs as

well as simultaneous transmissions. For example, a switch might have a high-bandwidth connection

(100 Mbps) coming in from one LAN, which it switches to ten different 10-Mbps segments of a second

LAN. A switch is capable of servicing traffic to the ten smaller connections simultaneously. This offers

enormous efficiency and speed. Speed can be even further increased with a technique called duplexing,

which can effectively double the bandwidth of a connection.
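The bandwidth arithmetic in this example can be checked with a short Python model. It is a simplified sketch, not a description of real switch hardware. The `Switch` class, the placeholder names standing in for MAC addresses, and the treatment of duplexing as "each port may send and receive at its full speed at once" are all assumptions made for illustration.

```python
from collections import defaultdict


class Switch:
    """Maps MAC addresses to ports and checks whether a set of flows fits."""

    def __init__(self):
        self.mac_to_port = {}  # MAC address -> port number
        self.port_mbps = {}    # port number -> link speed in Mbps

    def attach(self, mac, port, mbps):
        self.mac_to_port[mac] = port
        self.port_mbps[port] = mbps

    def fits(self, flows, full_duplex=False):
        """flows: list of (source MAC, destination MAC, Mbps) running at once."""
        sent = defaultdict(float)
        received = defaultdict(float)
        for src_mac, dst_mac, mbps in flows:
            sent[self.mac_to_port[src_mac]] += mbps
            received[self.mac_to_port[dst_mac]] += mbps
        for port, speed in self.port_mbps.items():
            if full_duplex:
                # Assumed model of duplexing: full speed in each direction.
                ok = sent[port] <= speed and received[port] <= speed
            else:
                ok = sent[port] + received[port] <= speed
            if not ok:
                return False
        return True
```

With one 100 Mbps port and ten 10 Mbps ports, ten simultaneous 10 Mbps transfers from the fast port fit exactly. Adding ten return transfers overloads every port unless duplexing is enabled, in which case both directions fit.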

Our summary of these central connectors is necessarily brief. The technology is in many cases very

complex. It is also rapidly evolving. There are variations (like self-learning hubs) and powerful hybrid technologies

(switching hubs, for example), but the concepts remain relatively straightforward.

Summary of Internetworking

Networking technology has evolved rapidly in recent years, and the trend is almost certain to accelerate

in coming years. In their early days (the early 1980s), networks were essentially local – they were LANs. As

the role of the personal computer in business (and the university) mushroomed, LANs proliferated. The

power and utility they offered by enabling a shared computing environment was so compelling that the next

step in their evolution was inescapable – inter-networked LANs.

This class of network technology, the central connectors, is the essential feature in the evolution of networks.

These devices are the cornerstone of network scalability. As their technology evolves (expanding the power

and functionality of the central connections, and increasing their speed and bandwidth) the shape and char-

acteristics of networks themselves will also continue to evolve.

Computing Devices

On top of the network layer that we’ve described so far sit the computing devices themselves. These

include workstation computers of many types (including portable, or “laptop” computers), server computers,

and a wide assortment of peripheral devices like printers, data storage devices, and many others. In the

course of discussing network services throughout the remainder of this book, we’ll discuss computing

devices extensively.

Although the subject of computing devices is vast and far-reaching, from the point of view of the net-

work, computing devices are very straightforward. They are simply points of origin and destination for net-

work data. When a workstation sends a print job to a workgroup printer, for example, all the network layer

sees is the stream of data. It doesn’t know the data is a print job, and it doesn’t care. Database file, print job,

email message – these are all the same to the network layer. This is an important feature of networking – its

layers – and we’ll come back to it at several points throughout the book. Erecting network technology (and

computing technology in general) in discrete layers like this allows layers to evolve independently of one

another. For example, while to a certain extent applications need to be “network-aware,” in general a word

processor doesn’t care if it is on a network or not. These layers – network, computing devices, and software

– enjoy a reasonable degree of independence, as does their implementation in a network environment.

Bringing It All Together

In Chapter One we discussed networks in terms of their architecture – client/server, peer-to-peer, etc.

We outlined network functionality in terms of the services a network provides. The overview in Chapter

One, with its emphasis on architecture and services, lays the foundation for how we intend to proceed

throughout this book. At the same time, the technical viewpoint (at the level of the nuts and bolts, if you

will) is an equally legitimate window on network technology. While we’re electing not to pursue it at length

beyond our brief treatment here, it is nevertheless central.

These two windows into networking (the bottom-up and the top-down views, in a sense) interact very

closely. In fact, they are inseparable. A network’s architecture, and the extent of the services it provides,

depend on the state of networking technology. Where the network imposes constraints (as with bandwidth),

the constraint extends to every piece on the network. This point is central. Our transportation analogy carries

over to this point. Transportation technology (vehicles – their design and functionality) reflects the state of

transportation infrastructure. If trucks, for example, were twenty feet wide and a hundred feet long, apples

would be cheaper. While it would be easy to build trucks like this, it doesn’t happen because the network

medium (roads and freeways) can’t handle trucks like this. The network’s constraints become the technol-

ogy’s. And the point works in reverse. Supersonic flight, for example, is extremely expensive and environ-

mentally unsound (due to noise issues primarily). These are technological constraints. Consequently, our

commercial air-traffic network reflects these limits of aircraft technology.

Networking technology is evolving at a more rapid pace than any other aspect of business systems.

Advances over just the past decade have created communications and commercial platforms that only a few

could have imagined in the 1980s. There is no reason to doubt the trend will continue. The software, hard-

ware, and communications companies leading the technological vanguard are largely prosperous, and ven-

ture capital is abundant. The climate for technological innovation is optimistic, even frenetic. On the

marketing end, the industry is consolidating its resources, as software, communications, media, and enter-

tainment corporations merge into multi-media giants, poised to exploit new opportunities in the field –

opportunities that will be fostered largely by advances in technology. Enhancements to networking infrastructure

are occurring at an extraordinary pace and on a global scale. Traditionally separate media –

computing, broadcast television, telephone systems, cable systems, and even satellite technology – are rap-

idly crossing one another’s boundaries and integrating their respective technologies. The common denomi-

nator in all of this is networking.

PART TWO: Managing Technology

The common complaint is that the technology manages the business. This exaggerates the point, of

course, but the point merits attention nevertheless. Poorly managed, technology can hold a business hostage.

The day is long past when business managers could foist technology decision-making onto technical

management and concentrate instead on business. Among our central themes in this book is that technology

is business.

What things does a business manager need to know about technology? Does he or she need to know the

difference between Cat 5 and Cat 3 cable, or the relative merits of a bastion host versus a packet-filtering firewall?

Probably not. But a business manager does need to know the right questions to ask. When faced with

any technology decision, here are some of the fundamental business questions a manager should be asking:

• What does this technology do for the business? What does it not do? How will our business systems

benefit, and how will they be constrained?

• How much does this technology cost? What is the learning curve for users? What are its risks? How

does it compare with alternative technologies? Where does the new technology stand compared to

its predecessor – is it an incremental improvement or a significant innovation? How will its intro-

duction impact other pieces of the network?

• How will the technology affect our business partners? Our clients and customers? Will it increase

our ability to communicate with them? Will it enhance the business systems that support our interac-

tions with them, or will it degrade them, or have no effect?

• If this technology breaks, what happens to the rest of the network, and to the business systems it

supports? How easily and inexpensively can it be repaired? Can the technology be made fault-toler-

ant, and at what cost?

• How difficult is it to administer and maintain? Can existing technical staff manage it, or will they

require training? If so, how much? How great a leap in technical expertise will it require?

• Is the technology mainstream, or is it extreme? Will it be widespread in two years? Will it still be

supported then?

• What are the recurring costs of the technology? What are the exposures? What is the useful life of

the technology?

• How does it compare with network strategies employed by other companies that are our size? What

about other companies in our industry?

• How do you calculate the return on this investment? What is the return? Will benefits accrue to the

company immediately, or is this part of a long-term strategy that is tied to the network’s (or the busi-

ness system’s) long-term evolution?

There is no perfect technology, nor perfect mix of technologies. As with building anything else – a house,

for example – any number of sizes, shapes, and designs can suit a business’s needs. It is essential to understand

a technology’s goals and purposes before trying to understand its inner workings.

Network Cost Centers

In Chapter Three we discuss network management and administration by dividing networks into three

service zones – the network, the data center, and the desktop. (See Chapter Three for extended definitions of

the three service zones.) The goal there is to simplify a network’s vast array of technology by logically

dividing and classifying its parts in manageable segments. Management’s view is equally well served by

such a compartmentalized approach. The key issues in managing the network (as opposed to administering

it) center on asset management, cost management, and best practices. We cover administration best practices

and asset management in Chapter Three, but we’ll discuss higher-level business management practices, as

well as cost management, briefly here.

What are the key cost centers for a network? In fact, the list is long. Many of these are obvious (direct

budgeted costs), while many others are hidden or indirect costs that may not find a line on the IS budget.

Some are recurring (like maintenance costs and communication fees) while others are cyclic (like training

for rollout of new technology). Below we provide an outline of a network’s primary cost centers. Each

should be assessed for each of the network’s three zones.

Hardware

The category includes expenditures for new (and leased) equipment. In the data center, this includes

server computers, data storage equipment, central communication equipment and related peripherals, as well

as environment control devices (temperature and humidity) and power conditioning and management

devices. For the network zone, this includes core infrastructure items like cabling and switching equipment

(hubs, routers, etc.). The desktop is the most dynamic area for hardware costs in most companies, including

desktop and mobile computers, internal devices (hard disks, other read/write components, optional circuitry

like video cards), and peripherals like printers and scanners. The category includes secondary acquisition

costs (e.g., lease fees) as well as disposal costs. Some costs, like memory, storage capacity, etc., might apply

to hardware in more than one zone.

Software

Server software, operating system software, management tools and utilities, security software, develop-

ment software (e.g., programming languages, development environments, etc.), and general utilities form

the bulk of costs at the data center. At the network zone, typically software costs are minimal, although

switching and routing software may fit here. The desktop is, again, where costs can be most fluid and unpre-

dictable, and most difficult to manage. Included here is new software, upgrades to existing software and

annual site license fees. The applications that populate desktops range from client applications

working with core server systems to business applications and utilities.

Consumables

Network-related supplies, including storage media (disks and tapes), printer supplies, etc. This category

typically does not apply at the network zone.

Network Management & System Administration

This is a very large category which may span two or more zones depending on the network’s configura-

tion. Following is a summary.

• Asset inventory management. Costs for maintaining asset inventories, periodic inventory taking,

change management, and maintenance of inventory tools, like databases and other records. Costs are

primarily labor.

• Application management. Includes server and desktop applications, custom applications, operating

system, utilities, and other application services. In the case of custom applications, this includes con-

figuration and maintenance, not development costs. The category includes labor, primarily, for con-

figuration and access control. Includes managing upgrades and deployment of new applications.

Costs here can be significant. For example, an operating system upgrade generally requires that each

machine on the network receive on the order of 4 hours of personal attention.

• Hardware configuration. Costs to deploy and configure hardware on the network, change configura-

tions, upgrade existing equipment, modify network topology, etc., as well as disposal of retired

equipment.

• Storage management. Includes costs for capacity planning, developing backup and archival systems,

disaster planning, and data-access systems (for data recovery). Includes planning integration of

hardware devices, as well as disk and file management, routine storage-media maintenance (defrag-

mentation, testing, etc.), record keeping, and policy planning.

• System planning. Costs for ongoing monitoring and managing of network systems, periodically

reassessing performance, tracking growth, and planning evolution and expansion of network capac-

ity and performance.

• Procurement. Costs for evaluating and testing technology (both hardware and software), developing

options and contingency plans, and documenting purchase recommendations. Includes the issue of

lifecycle management.

• Security management. Developing, implementing, and monitoring network security systems, policy,

and procedure. Includes password protection and access control, network gateway security (i.e.,

firewall), and virus protection.

• Traffic management. Costs for monitoring and assessing network traffic, planning load balancing,

periodically reassessing traffic patterns, and optimizing network traffic flow.

• Performance management. Closely related to traffic management, but expands to include non-traf-

fic-related performance issues like software, operating system, and application services, as well as

network hardware, and fine-tuning configurations to optimize network performance.

• Troubleshooting & Repair. Unscheduled maintenance on network and data center hardware and soft-

ware to correct malfunctions. (Troubleshooting and repair at the desktop zone is covered in the Sup-

port Costs section, below.)

• Backup (spare) equipment and unscheduled replacements. Critical network components, whose failure

may negatively impact the network, may have replacement units on hand to allow rapid replacement

in emergencies. Furthermore, equipment that cannot be cost-effectively repaired may

require replacement. Warranties or service contracts may offset the cost of the latter.

• Recurring costs. Costs for service contracts and other incidental recurring expenses.

• Consulting & Outsource expenses. Costs for outside services contracted to augment in-house admin-

istration and management.

Communication

The cost of connectivity, which is typically applied at the network zone. These costs include fees for

things like ISP hosting, WAN connections, leased-lines, on-line access, remote access costs (long-distance

tolls to RAS server), etc.

Support & Training

Training and support costs, more than any other, typically carry hidden or incidental costs. Furthermore,

a great many unknowns (for example, predicting needed support for an operating system upgrade) typically

fall into this category of cost. For these reasons, budgeting, managing, and controlling costs in the area of

support and training are among the most challenging tasks for business managers. Following are some key types of

support and training costs. Note that training costs can involve end users, managers, and/or IS technicians.

• Administration and management of support and training services. These include labor costs for both

management and administrative support.

• Vendor management. Costs associated with contracting for outside training and/or support services.

• Course development. Costs (labor and materials) to develop training course curricula and materials.

• Training facilities, materials, and logistics. Costs for maintaining (or renting) training facilities,

printing or otherwise generating course materials, and logistics for providing equipment (e.g., com-

puters), transportation, or other needs for both trainers and trainees.

• Outside training. Costs for sending technicians, managers, and/or employees to a remote training

site – includes course costs, lost productivity, transportation, food and lodging, etc.

• Helpdesk support. Labor, training, and administration of help desk systems.

• Troubleshooting & Repair. User problems, either hardware or software related, which cannot be

solved through help desk intervention typically require a service call.

Development Costs

Includes the cost to design and develop, test, document, roll out, troubleshoot, and fine-tune custom-built

applications. In cases where application development is handled by a third party, this includes all associated

costs.

Downtime Costs

Frequently omitted from technology budgets are downtime costs – both scheduled (e.g., for maintenance

or for the rollout of new technology) and unscheduled (due to equipment failure).

Summary

The above sections summarize the categories of cost a company typically incurs to manage, support,

and maintain a network. Most lend themselves to subdividing along the network’s service zones – data

center, network, and desktop – which helps isolate costs at a more granular level. But there are two levels to

profiling costs for a business network. Only the first involves identifying network costs, as we’ve done

above. The second is managing costs.

Managing Network Costs

The total cost of ownership (TCO) is a concept frequently used to understand the cost of computing ser-

vices. Simply put, TCO is a method that aggregates the lifetime cost of computing equipment (including

such things as hardware, software, installation, service, support, training, upgrades, downtime, and anything

else that may apply under special circumstances) as a cost-analysis metric. The method gained currency in

the hands of manufacturers of “thin client” systems to help them make the case that such systems were better

business investments than “fat” clients – typical workstation computers. (Thin-client systems hark back

to the mainframe/dumb client model, where powerful servers do most of the processing on behalf of very

low-powered – and inexpensive – workstation computer terminals.) While the thin client/fat client debate

rages on, TCO as a valuable cost-analysis metric has acquired significant status. While typically used to ana-

lyze the cost of workstation computers (i.e., the desktop zone), TCO methods can be applied across the net-

work.
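
The aggregation described above can be sketched in a few lines of Python. This is only an illustration of the idea, not a standard TCO formula: the function names, the category list (taken from the parenthetical list in the text, with "other" standing in for special circumstances), and every dollar figure are assumptions made for the example.

```python
# Cost categories listed in the text; "other" covers special circumstances.
TCO_CATEGORIES = (
    "hardware", "software", "installation", "service",
    "support", "training", "upgrades", "downtime", "other",
)


def total_cost_of_ownership(lifetime_costs: dict[str, float]) -> float:
    """Sum the lifetime costs of one piece of equipment across all categories."""
    unknown = set(lifetime_costs) - set(TCO_CATEGORIES)
    if unknown:
        raise ValueError(f"unrecognized cost categories: {sorted(unknown)}")
    return float(sum(lifetime_costs.values()))


def annualized_tco(lifetime_costs: dict[str, float], useful_life_years: float) -> float:
    """Spread the lifetime total evenly over the equipment's useful life."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return total_cost_of_ownership(lifetime_costs) / useful_life_years


# Hypothetical lifetime figures for a single workstation, in dollars.
example_costs = {
    "hardware": 2500,
    "software": 800,
    "installation": 200,
    "service": 500,
    "support": 1500,
    "training": 600,
    "upgrades": 400,
    "downtime": 500,
}

print(total_cost_of_ownership(example_costs))  # 7000.0
print(annualized_tco(example_costs, 4))        # 1750.0
```

Rejecting unrecognized categories is a design choice for the sketch: it forces every cost onto a named line, which is the point of the exercise, since hidden or unlabeled costs are the ones a TCO analysis is meant to surface.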

Computer industry consulting groups like Forrester Research and the Gartner Group place the TCO

of workstation computers at between $8,000 and $10,000. These claims accompany their contention that

smart management, sound policies, and sensible procedures can bring TCO down significantly. This is a

widely held view.

Various TCO strategies slice the onion different ways, but common threads emerge. Following is a sum-

mary of generally accepted sound management practices for managing network costs.

Standard Desktop Configuration

The heart of cost containment is to be found in the desktop zone. This is where a network incurs the

greatest risk of spinning out of control, because this is where users touch the network. In a 2000-workstation

network, you have 2000 views of the network. While flexibility is among a computer network’s greatest

strengths, uncontrolled it can evolve into a costly weakness.

Where desktop configurations are idiosyncratic, the cost of reconfiguring for new or replacement work-

ers can make TCO skyrocket. Inconsistencies across desktop platforms can make collaboration difficult,

unreliable, or even impossible, impacting productivity severely. Indiscriminate software installations make a

nightmare of site-license management and make reliable asset management nearly impossible.

Clearly, no single desktop “image” suits all employees, particularly in a large company with diverse job

classifications. However, it is highly likely that a fixed number of job-related desktop images will suffice –

one for clerical staff, for example, another for engineers, another for managers, etc. There will always be

special-case needs, of course, but even these should be handled procedurally (with a request-and-approval

procedure), so that the unique installation can be recorded and tracked as part of your corporate memory.

An important part of administering the desktop zone is periodically auditing deployed desktops to

ensure that they remain in conformance with company policy. This is important, because only

with consistency at the desktop can a company enjoy TCO advantages through economies of scale – not just

in procurement, but also in training and support.

Enterprise-Wide Planning and Policy-based Management

Consistency at the desktop is only the beginning. The hallmark of network functionality, after all, is the

success with which its pieces integrate. Integration is the keyword that informed much of Chapter One. Net-

work planning must begin at the top, with a macroscopic view of the overall network architecture and the

business systems it supports. This applies to planning for future enhancements, upgrades, and scalability

as well. Data center, desktop, and network infrastructure must integrate fully and seamlessly, not just in the

present, but in the future as well.

Policy-based management is a common buzz-phrase in network management of late, but for sound reasons.

It offers an a priori approach to network management, in which needs are assessed and functionality is

assigned in advance of a system’s implementation. This dovetails closely with standardized desktop images,

and in fact, the desktop image is an outcome of just such a policy-based management decision. The goal is

to constrain users to just the right palette of applications and just the right amount of computing power to

allow them to complete their assigned tasks. This helps maintain consistency and scalability, reduces costs

per workstation, and lightens user support costs – in part because users are not confronted with applications

on which they are not trained.

Perhaps nowhere is policy-based management more an issue than in the arena of online communica-

tions – everything from internal messaging to online access to the Internet. We discuss the subject of mes-

saging policy at length in Chapter Four.

Stratified Central User Support

Astonishing statistics are reported about the costs of inefficient training, service and support. It has been

reported, for example, that it costs upwards of $80.00 for an IS technician to make a desktop service call. It

is also reported that, even on highly efficient networks (experiencing less than 5% downtime), downtime can

cost a 2000-workstation network over a half-million dollars per year. These numbers may not be relevant in

your company, but that is not the point. The point is, manageable and controllable factors in the arena of

training, service and support can potentially shave a significant fraction from your TCO – regardless of your

company’s size.
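
To see how figures of this kind arise, a rough downtime-cost model can be sketched as follows. The model (lost hours multiplied by a cost per lost hour) and all of the inputs are assumptions for illustration; they are not the method or the data behind the reported statistics.

```python
def annual_downtime_cost(workstations: int, work_hours_per_year: float,
                         downtime_percent: float, cost_per_lost_hour: float) -> float:
    """Estimate yearly downtime cost as lost workstation-hours times hourly cost."""
    if not 0 <= downtime_percent <= 100:
        raise ValueError("downtime_percent must be between 0 and 100")
    lost_hours = workstations * work_hours_per_year * downtime_percent / 100
    return lost_hours * cost_per_lost_hour


# Hypothetical inputs: 2000 workstations, 2000 working hours per year,
# 2% downtime, and $10 of lost productivity per workstation-hour.
cost = annual_downtime_cost(2000, 2000, 2, 10.0)
print(cost)  # 800000.0
```

Even with modest assumed values, the total grows quickly because it scales with the number of workstations, which is why small reductions in downtime or in per-incident cost can matter at the level of the whole network.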

User support should be centralized and stratified. Systems are best managed, and network problems and

issues best tracked, when support services are administered centrally. Efficiencies of scale are achieved,

service experience is better shared, and common problems are more easily identified.

User support and problem resolution should also be stratified. By this, we mean the

company’s systems and procedures should route all service calls through a common channel to a least-cost

support center – typically telephone based. If unable to solve the problem, the call center should be trained to

filter problems in a cost-efficient way. Reliance on an online knowledge base, FAQ (frequently asked ques-

tions) files, or online documentation library can help reduce the need for costly service calls. Only in cases

of true need should you dispatch a technician to a workstation (except, of course, in cases of equipment fail-

ure).

Scalable, Fault-tolerant Architecture

Few businesses, if any, plan not to grow. Fortunately, modern networks, when well designed and imple-

mented with an eye toward growth, scale easily. We’ll see in Chapters Seven and Four how network direc-

tory and communication services are highly compatible with Internet-based naming and configuration

schemes, making them highly suited to virtually unlimited growth. We saw in Chapter One how client/server

architecture can also add enormously to a network’s ability to scale up rapidly and easily. However, these

capabilities are useful only when built in to the framework of the original network. Re-designing and re-

engineering a network is costly – both in technological terms and in lost productivity due to downtime.

Fault-tolerance is another critical must. In Chapter Five we discuss the issue of fault-tolerance and

failover capability in connection with data storage (and again in Chapter Three in connection with system

administration). Fault-tolerance is a risk-reduction cost that must be weighed against lost productivity due to

downtime resulting from equipment or software system failure. Accepting the risk, and with it the cost of expensive

downtime, compares poorly with the cost of robust, fault-tolerant infrastructure and equipment.

Centralized Network Management and Administration

Increasingly, system administration and network management tools are allowing technicians and

administrators to service the network from a central point. Particularly in the area of deploying software

(new or upgrades), this can offer enormous cost savings. When the deployment is for operating system soft-

ware, or communication software, which may need precise configuring, the potential savings are even more

significant. The arithmetic is easy. Even at just fifteen minutes per workstation, deploying an upgrade across

2000 workstations is enormously expensive – costing perhaps as much as the software itself.
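
The arithmetic can be made explicit with a short sketch. The workstation count and the fifteen-minute figure come from the paragraph above, and the four-hour figure is the operating system upgrade estimate given earlier under application management; the hourly labor rate is a hypothetical value chosen only for illustration.

```python
def deployment_labor(workstations: int, minutes_per_workstation: float,
                     hourly_rate: float) -> tuple[float, float]:
    """Return (labor hours, labor cost) for visiting every workstation once."""
    hours = workstations * minutes_per_workstation / 60
    return hours, hours * hourly_rate


HOURLY_RATE = 50.0  # hypothetical labor rate in dollars per hour; not from the text

# Fifteen minutes per workstation across 2000 workstations.
quick_hours, quick_cost = deployment_labor(2000, 15, HOURLY_RATE)
print(quick_hours, quick_cost)  # 500.0 25000.0

# Four hours per workstation, as estimated for an operating system upgrade.
os_hours, os_cost = deployment_labor(2000, 4 * 60, HOURLY_RATE)
print(os_hours, os_cost)  # 8000.0 400000.0
```

Under these assumptions, even the fifteen-minute case consumes 500 hours of technician time, which is the labor that central deployment tools are meant to eliminate.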

Sophisticated network management tools also typically include powerful network monitoring tools to

allow for ongoing performance assessments and fine-tuning. These can also play a role in auditing user con-

formance to company policy – particularly in the area of online communication. With Internet connections

increasingly common in business, user desktops extend the user’s reach globally. While a powerful tool, this

may also merit attention from a management and policy-planning point of view.

Questions For Managers

• What is the population of our network? How many workstations, servers, hubs, routers, etc.? What

is the geographical scope of our network? Does our infrastructure meet the demands of the net-

work’s size and scope?

• How do we monitor network performance, bandwidth, throughput and so on? Is our bandwidth ade-

quate throughout the network to handle the load? How is network load measured? How is it

reported? Can I see a report?

• What is the average load on the network? What is our maximum capacity? How often do we max

out? What are the implications of this? Do we lose customers? Do we lose productivity? At what

rate is network traffic growing? When do we expect to overload our present capacity? Do we have

the means to scale up? Is there a plan for scaling up? Can I see the plan?

• What is our TCO? What was it last year? What is it expected to be next year (in two years, three

years)? How do we calculate TCO? What variables are included? What is excluded from the calculation?

How does our TCO stack up against others in our industry? How does it stack up against

other businesses our size?

• What new technologies are on the horizon that may impact our TCO in a significant way? What’s all

this about thin clients and network PCs? Are they really less expensive, or do they have hidden

costs? How will new networking technologies impact productivity? How will new networking tech-

nologies impact our network links with our customers and business partners? Can you show me dif-

ferent options with the projected total costs of each?

• How do we handle training and user support? How much do we pay for training and support? Do we

measure the effectiveness of our training and the efficiency of our support? How do we calculate the

cost of these things? What are the criteria and methods? What are the results? May I see these

results?

• How do we define downtime? How do we measure downtime? What is our annualized downtime?

What was it last year? Are these figures reasonable? What are the main causes of downtime? Do we

have a weak link? How do we fix this?

• Do we have standard desktop configurations? How many do we have? To what job classifications

are they tied? How much does each one cost (i.e., what is the TCO on each configuration)? Are

these configurations reasonable? How many exceptions are requested (and how many granted) on

average?

• Is our network fault-tolerant? To what degree? By what means do we ensure our current level of

fault-tolerance? How is this measured? What does it cost? Is our present level adequate? What level

of fault-tolerance is adequate? How much will it cost?
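
One of the questions above asks when growing traffic will overload present capacity. A first estimate can be sketched as below, assuming (and this is only an assumption) that load grows at a constant compound rate each year. The function name and the example figures are hypothetical.

```python
import math


def years_until_capacity(current_load: float, capacity: float,
                         annual_growth_rate: float) -> float:
    """Years until load reaches capacity under constant compound annual growth."""
    if current_load <= 0 or capacity <= 0:
        raise ValueError("current_load and capacity must be positive")
    if current_load >= capacity:
        return 0.0  # already at or over capacity
    if annual_growth_rate <= 0:
        return math.inf  # load is flat or shrinking, so capacity is never reached
    # Solve current_load * (1 + rate) ** years == capacity for years.
    return math.log(capacity / current_load) / math.log(1 + annual_growth_rate)


# Hypothetical figures: 40 Mbps average load, 100 Mbps capacity, 30% yearly growth.
years = years_until_capacity(40.0, 100.0, 0.30)
print(round(years, 1))  # 3.5
```

Real traffic rarely grows this smoothly, and peak load matters more than the average, so an estimate like this is best treated as a prompt for the scaling plan rather than a forecast.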

Copyright © 1998 by Interface Technologies, Inc. All rights reserved. Page 17

Potrebbero piacerti anche

- Introduction To Computer NetworkingDocumento44 pagineIntroduction To Computer Networkingjohn100% (1)

- IRC5 Compact IO Circuit DiagramDocumento3 pagineIRC5 Compact IO Circuit Diagramgapam_2Nessuna valutazione finora

- Computer Network and InternetDocumento21 pagineComputer Network and InternetRaj K Pandey, MBS, MA, MPANessuna valutazione finora

- 1.1 What Is A Network?Documento8 pagine1.1 What Is A Network?Phoenix D RajputGirlNessuna valutazione finora

- Lecture 5 - Computer NetworksDocumento37 pagineLecture 5 - Computer Networksamangeldinersultan200tNessuna valutazione finora

- Module 1: Introduction To NetworkingDocumento12 pagineModule 1: Introduction To NetworkingKihxan Poi Alonzo PaduaNessuna valutazione finora

- On Computer Networking and SecurityDocumento14 pagineOn Computer Networking and SecurityshitaltadasNessuna valutazione finora

- Network: Chapter 2: Types of NetworkDocumento8 pagineNetwork: Chapter 2: Types of NetworksweetashusNessuna valutazione finora

- ICT23Documento25 pagineICT23Rick RanteNessuna valutazione finora

- Networks+Safety ChapterDocumento57 pagineNetworks+Safety ChapterSouhib AzzouzNessuna valutazione finora

- Computer NetworkDocumento67 pagineComputer NetworkWaqas AliNessuna valutazione finora

- AY2021 Networking Concepts Notes 1Documento9 pagineAY2021 Networking Concepts Notes 1Priam Sujan KolurmathNessuna valutazione finora

- IP Based Networks BasicDocumento14 pagineIP Based Networks BasicAbi AnnunNessuna valutazione finora

- Networking and Internetworking DevicesDocumento21 pagineNetworking and Internetworking DevicesDip DasNessuna valutazione finora

- Data Communication and Etworks: Prepared by Senait KDocumento11 pagineData Communication and Etworks: Prepared by Senait KSenait Kiros100% (1)

- EEE555 Note 2Documento29 pagineEEE555 Note 2jonahNessuna valutazione finora

- NETWORKINGDocumento60 pagineNETWORKINGkurib38Nessuna valutazione finora

- Module 6 - Reading1 - NetworksandTelecommunicationsDocumento8 pagineModule 6 - Reading1 - NetworksandTelecommunicationsChristopher AdvinculaNessuna valutazione finora

- 1 Introduction To Computer NetworkingDocumento53 pagine1 Introduction To Computer Networkingmosesdivine661Nessuna valutazione finora

- Unit 10Documento2 pagineUnit 10api-285399009Nessuna valutazione finora

- What Is Computer Networks?Documento8 pagineWhat Is Computer Networks?Katrina Mae VillalunaNessuna valutazione finora

- 1 Introduction To Computer NetworkingDocumento50 pagine1 Introduction To Computer NetworkingSaurabh SharmaNessuna valutazione finora

- Networking in DMRCDocumento16 pagineNetworking in DMRCaanchalbahl62100% (1)

- InternetNotes PDFDocumento11 pagineInternetNotes PDFSungram SinghNessuna valutazione finora

- SPTVE CSS 10 Quarter 4 Week 1 2Documento10 pagineSPTVE CSS 10 Quarter 4 Week 1 2Sophia Colleen Lapu-osNessuna valutazione finora

- Cisco Internetworking ProsuctsDocumento8 pagineCisco Internetworking Prosuctsxavier25Nessuna valutazione finora

- Network Administration and Management: - Brian S. CunalDocumento30 pagineNetwork Administration and Management: - Brian S. CunalviadjbvdNessuna valutazione finora

- 2.0 NetworkDocumento24 pagine2.0 NetworkChinta QaishyNessuna valutazione finora

- Lecture 1 - Introduction To NetworkingDocumento37 pagineLecture 1 - Introduction To NetworkingAdam MuizNessuna valutazione finora

- Lecture 1Documento31 pagineLecture 1sohail75kotbNessuna valutazione finora
