Documenti di Didattica

Documenti di Professioni

Documenti di Cultura

EES - Abstract Book

Caricato da

ifelakojaofinDescrizione originale:

Copyright

Formati disponibili

Condividi questo documento

Condividi o incorpora il documento

Hai trovato utile questo documento?

Questo contenuto è inappropriato?

Segnala questo documentoCopyright:

Formati disponibili

EES - Abstract Book

Caricato da

ifelakojaofinCopyright:

Formati disponibili

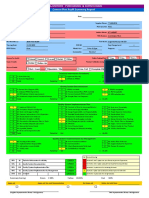

New concepts New challenges New solutions

ABSTRACT BOOK

WWW.EUROPEANEVALUATION.ORG

THE 10TH EES BIENNIAL CONFERENCE, 3-5 OCTOBER 2012, HELSINKI, FINLAND

Strand 1: Evaluation governance, networks and information
Strand 2: Evaluation research, methods and practices
Strand 3: Evaluation ethics, capabilities and professionalism
Strand 4: Evaluation of regional, social and development programs and policies
Strand 5: Evaluation in government and in organizations


Contents

Oral Presentations

S5-03 Paper session: The use of evaluation in public policy
S2-26 Paper session: New evaluation tools and technologies
S2-41 Panel: New steps with Contribution Analysis: strengthening the theoretical base and widening the practice
S5-11 Paper session: The role of evaluation in civil society I
S2-04 Paper session: Defining outcomes
S3-18 Panel: Assuring evaluation quality standards
S4-03 Paper session: Evaluating climate change and energy efficiency
S5-21 Panel: Does Performance Management Have a Future? Issues and Challenges
S3-01 Paper session: Gender and Evaluation: Approaches and Practices I
S4-17 Paper session: Evaluation of humanitarian aid
S3-33 Panel: Equity and Ethics
S3-23 Panel: Building evaluation capacity through university programmes: where are the evaluators of the future?
S5-22 Panel: Identifying and assessing capacity development outcomes: perspectives from the EU, UN, OSCE and the Council of Europe
S3-32 Panel: Equality and Equity: Improving the Evaluation of Social Programmes
S3-10 Paper session: Evaluation use and useability I
S5-02 Paper session: Auditing and evaluation
S3-19 Panel: Managing multiple perspectives in judging value in a networked evaluation world
S2-03 Paper session: Comparing and combining evaluative approaches
S1-13 Paper session: Network effects on evaluation and organization I
S4-15 Paper session: Evaluation of health systems and interventions I
S2-32 Panel: Complexity and systems thinking for evaluators
S1-06 Paper session: M&E systems and real time evaluation I
S2-39 Panel: Holding the state to account: using evaluation to challenge the theories, understandings and myths underpinning policies and programs
S1-04 Paper session: ICT systems for evaluation quality and use
S3-20 Panel: The impact of ethics: are codes of conduct in evaluation networks necessary?
S2-01 Paper session: Approaches to evaluating research
S5-04 Paper session: Evaluation and governance I
S1-26 Panel: International Organization for Collaborative Outcome Management (IOCOM): The value and contribution to evaluation in the networked society
S2-11 Paper session: Evaluation in government and organizations
S4-24 Paper session: Monitoring, ongoing and ex-post evaluation
S1-20 Panel: The international evaluation partnership initiative
S5-26 Panel: Payment by Results: What results? How should future evaluations of such approaches be undertaken?
S1-08 Paper session: Evaluation for improved governance and management I
S4-21 Paper session: Evaluation of local, regional and cross border programs I
S2-13 Paper session: Evaluation use
S3-24 Panel: Building the capacity of beneficiary countries in monitoring and evaluation: contrasting methods and experience
S2-40 Panel: Tools and methods for evaluating the efficiency of development interventions
S5-19 Panel: Evaluation Power, Power of Evaluation and Speaking Truth to Power
S2-35 Panel: Innovative Approaches to Impact Evaluation: Session 1
S4-01 Paper session: Evaluation and employment
S4-10 Paper session: Evaluability of public policies


S2-46 Panel: Agency and Evaluative Culture: Contributions of Feminist Evaluation
S1-11 Paper session: Evaluation of international partnerships and collaborative networks
S4-25 Paper session: Evaluation of income support, credit and insurance interventions I
S5-27 Panel: Joint Evaluation of Dutch Development NGOs
S1-14 Paper session: Network effects on evaluation and organization II
S5-14 Paper session: The interaction of evaluation, research and innovation I
S2-15 Paper session: Improving evaluation practice
S2-36 Panel: Innovative Approaches to Impact Evaluation: Session 2
S2-37 Panel: What is excellent? The challenge of evaluating research
S4-04 Paper session: The impact of values and dispositions on evaluation approaches
S2-18 Paper session: Integrating ethics in evaluation
S3-26 Panel: Credentialing in Canada: two years later
S2-31 Panel: Performance management and evaluation: love at first sight or marriage of (in)convenience?
S5-16 Paper session: The role of evaluation in civil society II
S2-16 Paper session: Innovative methodologies in development evaluation
S2-22 Paper session: New or improved evaluation approaches I
S2-20 Paper session: Meta evaluation
S3-02 Paper session: Gender and Evaluation: Approaches and Practices II
S1-07 Paper session: M&E systems and real time evaluation II
S2-21 Paper session: Multinational evaluation
S2-34 Panel: Theories in evaluation
S3-22 Panel: Evaluation for equitable development
S4-12 Paper session: Evaluation of innovation policies and innovative programmes
S2-12 Paper session: Evaluation of competencies
S2-43 Panel: Risk Assessment, Monitoring and Evaluation in Food Safety: the case of the Codex Alimentarius
S3-11 Paper session: Evaluation use and useability II
S2-06 Paper session: Improving EU evaluation practice
S5-23 Panel: Monitoring and evaluating organisational culture: searching for the right questions
S2-45 Panel: The Roles and Complementarity between Monitoring and Evaluation Functions
S2-07 Paper session: Evaluating innovation
S3-27 Panel: Evaluating Research Excellence for Evidence-based Policy: The Important Role of Organizational Context
S5-05 Paper session: Evaluation and governance II
S2-17 Paper session: Innovative methods in evaluation
S2-05 Paper session: Probing the logic of evaluation logics
S2-44 Panel: A European Evaluation Theory Tree
S1-17 Paper session: Evaluation networks and knowledge sharing I
S5-28 Panel: The Role of Philanthropic Foundations in Development Evaluation
S5-08 Paper session: Evaluation in a European context I
S1-22 Panel: Sharing information in the networked society: options and requirements for the publication of (evaluation) results
S3-05 Paper session: Equity, Empowerment and Ethics
S2-14 Paper session: Evidence based policy and programs
S4-27 Paper session: Evaluation of research programmes
S4-29 Panel: Using research to rethink programme implementation: The Health Systems Strengthening Experience in Nigeria
S3-17 Paper session: Evaluation and gender mainstreaming
S5-20 Panel: Valuing Monitoring and Evaluation (M&E) Readiness Evidence for Evaluation
S3-14 Paper session: Evaluation data and performance assessment I
S2-02 Paper session: Capturing the contribution of programs in complex environments
S4-28 Panel: Addressing the micro-macro disconnect in the evaluation of climate change resilience


S3-25 Panel: Evaluation Capacity Development for International Development: Lessons Learned across Multiple Initiatives
S2-47 Panel: Meet the Authors
S5-09 Paper session: Evaluation in a European context II
S2-28 Paper session: Performance monitoring and evaluation tools
S1-25 Panel: Using theories of change and evaluation to strengthen networks: the case of evaluation associations
S4-23 Paper session: Evaluation of Health Systems and Interventions II
S4-19 Paper session: Real time evaluation for decision-making
S2-24 Paper session: New methods for impact evaluation
S3-06 Paper session: Evaluation capacity building and regional development
S3-07 Paper session: Capacity Development: Learning from experience I
S2-09 Paper session: Evaluation in complex environments I
S3-28 Panel: The Future of Evaluation (and What We Should Do About It)
S1-16 Paper session: Social networking, network associations and evaluation I
S2-29 Paper session: Predicting outcomes
S4-31 Panel: Meta-Evaluations: aggregated analysis to enhance learning in development cooperation
S4-09 Paper session: Evaluating environmental and social impacts
S3-15 Paper session: Evaluation data and performance assessment II
S5-10 Paper session: Evaluation in an educational context
S3-03 Paper session: Gender-sensitive policies, human rights and development evaluation
S5-15 Paper session: The interaction of evaluation, research and innovation II
S3-13 Paper session: Evaluation credibility and learning II
S1-23 Panel: Information and communication technology for development
S1-21 Panel: Networking in development evaluation: Experiences from DAC, ECG, UNEG
S5-18 Panel: Jordan's Evaluation and Impact Assessment Unit: lessons in evaluation capacity building
S5-12 Paper session: Evaluation in the Health Care Sector
S4-30 Panel: Evaluating the Paris Declaration on aid effectiveness
S3-31 Panel: Evaluating empowerment: integrating theories of change, theoretical frameworks and M&E
S1-09 Paper session: Evaluation for improved governance and management II
S1-01 Paper session: Open source, data exchange and evaluation
S2-33 Panel: Joint evaluations: Advancing theory and practice
S2-10 Paper session: Evaluation in complex environments II
S3-08 Paper session: Capacity Development: Learning from experience II
S1-15 Paper session: Social networking, network associations and evaluation II
S4-14 Paper session: Food security and livelihood protection evaluation
S2-38 Panel: Evaluating conferences and events: new approaches and practice
S4-32 Panel: Comprehensive evaluation
S1-28 Panel: Evaluation in Turbulent Time
S5-13 Paper session: The influence of (New) Public Management Theory on Evaluation
S3-09 Paper session: Capacity Development: Learning from experience III
S3-21 Panel: Reframing the debate: what is ethical practice in international development evaluation?
S2-30 Paper session: The use (and abuse) of evaluation
S5-24 Panel: Environmental evaluation in the EU: a simple idea and a hard practice in a complex context
S4-22 Paper session: Evaluation of local, regional and cross border programs II
S2-23 Paper session: New or improved evaluation approaches II
S4-02 Paper session: Ex-ante evaluation through cost benefit and systems analysis
S4-26 Paper session: Evaluation of income support, credit and insurance interventions II
S1-18 Paper session: Evaluation networks and knowledge sharing II

Poster session
List of Speakers
List of Keywords


Oral Presentations


S5-03 Strand 5 Paper session

The use of evaluation in public policy

Wednesday, 3 October, 2012

9:30-11:00

O 001

The use of evaluation in public policy: An analysis of evaluations in the Norwegian government 2005-2011

J. Askim 1, E. Doeving 2, A. Johnsen 3

1 University of Oslo, Department of Political Science, Oslo, Norway
2 Oslo and Akershus University College of Applied Sciences, School of Business, Oslo, Norway
3 Oslo and Akershus University College of Applied Sciences, Department of Public Management, Oslo, Norway

The ideal of using evaluation as a policy tool is strong in public management. The policy of using evaluation has gained strength over the last thirty years, and much public money is spent on evaluations in many countries. However, according to Murray Saunders, evaluation practice as an object of research is completely underdeveloped. Earlier research indicates that the quality of evaluations affects the propensity of the evaluations to be used in political decision-making processes. This paper aims to address this gap in evaluation theory by empirically analysing evaluation practice in the Norwegian government. The government's public management regulations require all ministries and agencies to evaluate their activities, for example by means of cost-benefit analyses, process evaluations and impact evaluations. The Norwegian Government Agency for Financial Management has recently developed a database documenting all evaluations conducted for ministries and agencies in Norway since the mid-2000s. In this paper we analyse these data, with an emphasis on describing the traits of the evaluations and how the government's use of evaluations as a policy tool has developed over time. Preliminary analyses show that more than 700 evaluations have been conducted since 2005. This paper categorises these evaluations by, for example, policy area, type of evaluation, methods employed, evaluation provider and size. The paper then analyses the data and explores hypotheses regarding how different governmental bodies design evaluations as policy tools and how different political and administrative factors affect the use of evaluation in government.

Keywords: Evaluation database; Design of evaluations; Use of evaluations

O 002

Ex ante evaluation in the Netherlands

D. Hanemaayer 1

1 Beleidsevaluatie.info, Oegstgeest, Netherlands

Ex ante evaluation can be an important instrument in the preparation of policies and programmes. Ex ante evaluation is about delivering information to politicians: information about the problems to be tackled by policy, about policy goals, about policy instruments and about the costs and benefits of policies or programmes. With a good ex ante evaluation on the desk, politicians are able to take good decisions, which means effective and efficient policies and programmes. Since the beginning of the 21st century we have seen a broad rise of ex ante evaluation (impact assessment) in international organisations (for example the EU, OECD and UN). But we hardly see a comparable development at the level of national states and below. We will present an overview of the Dutch ex ante evaluation landscape in the period since 2000. Stable applications of types of ex ante evaluation include, for example, the mandatory environmental impact assessment for physical investments; the assessment of draft laws by the Council of State; the assessment of administrative burdens; the application of cost-benefit analysis (a specific form of ex ante evaluation) in decision-making on infrastructural investments; the stream of cost-benefit analyses by the CPB (the Netherlands Bureau for Economic Policy Analysis); and a recently introduced assessment instrument. We describe the slowly growing interest in social cost-benefit analysis and the very limited use of ex ante evaluation of goal attainment, but we will show some Dutch examples of this kind of ex ante evaluation. In discussing the theoretical underpinnings of cost-benefit analysis, we will plead for the application of ex ante evaluation of policy goals, and not only of costs and benefits. We will discuss the relationship between ex ante and ex post evaluation. And we will discuss in general the limited drivers for the application of ex ante evaluation in a period in which fact-free policies seem to be growing in popularity.
Although ex ante evaluation is surely not the ultimate panacea for all shortcomings in policy-making, we plead for using ex ante evaluation of policy goals in the preparation of complex policy issues, because it can surely contribute to effective and efficient policy.

Keywords: Ex ante evaluation; Goal attainment


O 003

Theory-based evaluation: insights from public management theory for the assessment of the ESF assistance to administrative capacity building in Lithuania


V. Nakrosis 1

1 Public Policy and Management Institute, Vilnius, Lithuania

Theory-based impact evaluation was identified as one of the main evaluation approaches for assessing the effective delivery of the European Cohesion policy. The underlying assumption of theory-based evaluation is that every intervention constitutes a theory whose causal chain from inputs to impacts could be tested by evaluators. While the theory-based approach is usually associated with impact evaluation, it could also be beneficial for the interim evaluation of policy interventions. There is little evidence about the potential benefits of theory-based approach in the new intervention area of administrative capacity building, where the ESF support was provided for the first time in the 20072013 programming period. Although the previous evaluations of administrative capacity building considered such governance issues as the structure of governance and the decentralisation process in the selected EU Member States, there have been no critical explanations of the ESF support to administrative capacity building based on public management theories. A good ground for testing the main assumptions and characteristics of theory-based evaluation in this policy area is Lithuania, where the largest share of ESF assistance (18%) among all EU10 countries was allocated for administrative capacity building. The proposed presentation will share the main insights from the application of public management theory in the assessment of the European Social Fund assistance to administrative capacity building in Lithuania. It will be based on the evaluation of Priority 4 Strengthening administrative capacities and increasing efficiency of public administration of the ESF-supported Human Resources Development Operational Programme commissioned by the Lithuanian Ministry of Finance and carried out by the Public Policy and Management Institute (Vilnius, Lithuania) in 2011. 
This evaluation drew upon the general models of public management (traditional public administration, New Public Management and governance) and on more specific propositions from the academic literature on policy implementation, project management and change management, which were synthesised into a single theoretical framework. The evaluation employed a mixed (quantitative-qualitative) methodological approach to data gathering and analysis, involving desk research, analysis of monitoring data, statistical analysis, interviews, surveys and case studies. Among other things, the evaluation found that certain assumptions of organisational change behind some measures of Priority 4 failed to materialise during programme implementation, in the context of the financial-economic crisis, the overloaded agenda of state/municipal institutions and staff lacking motivation. A combination of the limited organisational maturity of state/municipal institutions and the insufficient supply of capacity building services in the domestic market constrained the effective delivery of various capacity building interventions. Furthermore, the NPM-based implementation approach to strengthening administrative capacities did not enable a results-based orientation of ESF assistance. From a theoretical point of view, although no impact assessment of capacity building interventions was possible midway through programme implementation, the evaluation successfully tested several hypotheses of organisational change and identified a number of factors critical to the design and implementation of these interventions. The lessons of this evaluation could be useful for the future management and evaluation of ESF-supported interventions in the area of administrative capacity building. Keywords: Theory-based evaluation; Public management; European Social Fund; Administrative capacity building;

Wednesday, 3 October, 2012

9:30-11:00

O 004

Evaluating legislation: the experience of the EU VAT evaluation

G. Ebling 1, J. Berlinska 1

1 European Commission, DG TAXUD, Brussels, Belgium

The European Commission has long been an active player in the development of evaluation, its standards and good practices, particularly for expenditure measures. Only fairly recently, however, has emphasis been put on the evaluation of legislation and other non-budgetary measures, and expertise and know-how in this area are growing. In this context, DG Taxation and Customs Union has conducted a retrospective evaluation of the most pertinent elements of the EU VAT system.

The triggers of the evaluation: VAT complexity results in administrative burdens for businesses; dealing with VAT accounts for almost 60% of the total burden measured for the 13 priority areas identified in the context of the Better Regulation Agenda. At the same time, the growing dependence of EU Member States on VAT as a source of revenue for financing their policies also highlighted the need to tackle its vulnerability to fraud. Technological progress and changes in the economic environment created as many opportunities as threats and challenges, revealing the potential vulnerability of the current EU VAT structure.

The scope of the evaluation: The evaluation looked into the design and implementation of certain VAT arrangements identified as most pertinent, assessing their effectiveness and efficiency in terms of the effects they had created. It also examined their relevance and their coherence with the smooth functioning of the single market. The emphasis was put on the economic aspects of cross-border VAT phenomena and their consequences for the single market.

Methodology: Given its economic nature, the study reconciled, to the maximum possible extent, the classical evaluation methodology with economic modelling.


Results: VAT exemptions are the most obvious and probably the most economically damaging feature, creating significant distortion and deflection of trade in goods and services, reducing productivity and output, and generally impeding the successful completion of the single market; the extensive use of reduced rates produces few desirable effects at a high fiscal cost and adds to the complexity of the system; there are far too many differences in VAT procedures across EU Member States, and it is estimated that a 10% reduction in these differences could boost intra-EU trade by as much as 3.7% and GDP by up to 0.4%; VAT requirements generate high administrative and compliance costs for businesses and administrations; and the level of VAT evasion and avoidance is still worrisome. Keywords: Policy; Legislation; Economic; EU;


S2-26 Strand 2

Paper session

New evaluation tools and technologies


O 005

Capturing Technology for Development

A. E. Flanagan 1, S. Wegner 1, K. Ruiz 1

1 World Bank Group, Independent Evaluation Group, Washington DC, USA

Wednesday, 3 October, 2012

9:30-11:00

The proposed paper, "Capturing Technology for Development", is well aligned with the theme of the EES conference, "Evaluation in a networked society: New concepts, New challenges, New solutions". The unprecedented increase in access to telephony and data services in developing countries has opened up opportunities to harness the potential of Information and Communication Technology (ICT) for development. In addition, the existence of a global network presents opportunities and challenges for the way in which development institutions deliver (and monitor and evaluate) their services to clients. In this context, the paper will capture both the effectiveness of World Bank Group support to ICT infrastructure and its enabling environment as a tool for development, and the Group's experience of integrating ICT components into projects to enhance the delivery of services to the public and to make governments more transparent and effective. The paper will present the results of a mix of approaches used to assess the effectiveness of public and private projects in the ICT sector and in ICT-using sectors. One approach is an econometric analysis investigating the role of World Bank Group interventions (World Bank policy reforms and IFC investments) in influencing the speed of mobile diffusion in developing countries, using a unique variable that measures the presence or absence of World Bank Group involvement in a country's telecommunications sector. Establishing a correlation between public money and higher mobile telephony rates supports the argument that the World Bank Group has an important role to play in increasing access to information. The paper will also address the potential of using ICT to support other development issues and objectives, and outline areas where the government and the private sector can play a role in supporting the roll-out of networks, fostering the adoption of technology and, importantly, supporting the necessary complementary factors for success.
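The econometric approach above relates the speed of mobile diffusion to a binary indicator of World Bank Group involvement. As a minimal sketch, assume one outcome per country (for example, a hypothetical annual growth rate of mobile subscriptions) and a single 0/1 regressor with no controls; the actual analysis very likely includes control variables and a time dimension. With one binary regressor, the ordinary least squares slope reduces to the difference between the two group means:

```python
from statistics import mean


def ols_dummy(outcome, involved):
    """Fit y = a + b * d by ordinary least squares, where d is a 0/1 indicator.

    Returns (a, b). With a single binary regressor, a equals the mean outcome
    of the d == 0 group and b equals the difference in group means.
    """
    if len(outcome) != len(involved):
        raise ValueError("outcome and indicator must have the same length")
    y_bar = mean(outcome)
    d_bar = mean(involved)
    s_dy = sum((d - d_bar) * (y - y_bar) for d, y in zip(involved, outcome))
    s_dd = sum((d - d_bar) ** 2 for d in involved)
    if s_dd == 0:
        raise ValueError("the indicator must take both values 0 and 1")
    b = s_dy / s_dd
    a = y_bar - b * d_bar
    return a, b


# Illustrative toy data only: growth rates for six hypothetical countries.
growth = [4.0, 6.0, 5.0, 9.0, 11.0, 10.0]
wbg_involved = [0, 0, 0, 1, 1, 1]
intercept, effect = ols_dummy(growth, wbg_involved)
print(intercept, effect)  # 5.0 5.0
```

As the abstract itself puts it, such an estimate establishes a correlation; on its own it does not separate the effect of involvement from the reasons some countries came to receive it.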

O 006

New Developments in Using Administrative Data for Formative and Summative Impact Evaluation with Examples

G. Henry 1

1 Education Policy at Carolina, Public Policy, University of North Carolina at Chapel Hill, Chapel Hill, USA

In the past decade, many administrative databases for education, social services, health and employment have been developed that can be fruitfully combined and used for evaluation. In this paper, we explore some of the most promising methods for combining and analyzing these data, including longitudinal datasets, and show how they are beginning to be applied to issues of effectiveness and equity in resource distribution. Specifically, we show how multiple administrative datasets have been combined to evaluate programs, personnel and policy. Our examples demonstrate the use of these data to evaluate teachers, school reforms, and training and professional development programs, and include both formative and summative impact evaluations. The examples illustrate not only the data and analytical techniques but also findings that could stimulate improvement, as well as novel insights into the equitable distribution of important resources, such as more effective teachers, that can come only from combining multiple datasets. The examples are based on the U.S., but the approaches could be applied in both the developed and the developing world. Keywords: Administrative databases; Methods for formative impact evaluation; Methods for summative impact evaluation; Effectiveness; Equitable distribution of resources;
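The core operation behind combining administrative datasets is record linkage on a shared identifier. A minimal sketch, assuming two hypothetical extracts (yearly test scores and teacher assignments) that share a student identifier and a year; real linkage often has to cope with missing or inconsistent identifiers, which this exact-match join ignores:

```python
def link_panel(scores, assignments):
    """Inner-join yearly test scores to teacher assignments on (student_id, year).

    Both inputs are lists of dicts with hypothetical field names. Assumes at most
    one assignment per student-year (a later duplicate would overwrite an earlier
    one). Returns merged rows sorted by student and year; a score row without a
    matching assignment is dropped.
    """
    by_key = {(a["student_id"], a["year"]): a for a in assignments}
    panel = []
    for s in scores:
        match = by_key.get((s["student_id"], s["year"]))
        if match is not None:
            panel.append({**s, **match})
    panel.sort(key=lambda row: (row["student_id"], row["year"]))
    return panel


scores = [
    {"student_id": 2, "year": 2011, "score": 455},
    {"student_id": 1, "year": 2011, "score": 430},
    {"student_id": 1, "year": 2010, "score": 410},
    {"student_id": 3, "year": 2010, "score": 400},  # no assignment record
]
assignments = [
    {"student_id": 1, "year": 2010, "teacher_id": "T1"},
    {"student_id": 1, "year": 2011, "teacher_id": "T2"},
    {"student_id": 2, "year": 2011, "teacher_id": "T1"},
]
for row in link_panel(scores, assignments):
    print(row)
```

Keeping one row per student-year yields a longitudinal panel in which a student's successive scores can be set against the teachers they had, the kind of structure that teacher evaluations of the sort mentioned above would rely on.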


O 007

The usefulness of game theory as a method for policy evaluation

L. Hermans 1, S. Cunningham 1, J. Slinger 1

1 Delft University of Technology, Faculty of Technology, Policy and Management, Delft, Netherlands


Most of today's public policies are formulated and implemented in multi-actor systems and networks. Interdependent actors need to agree jointly on shared policy objectives and associated measures, and multiple actors influence subsequent policy implementation and its impacts. Evaluating policies formulated and implemented in such multi-actor systems requires insight into the interactions among actors and how these influenced the outcomes. Planning theory has long accepted that policies and programmes are not implemented as planned and that important deviations occur during implementation. Such deviations and emergent strategies are even more likely when several (semi-)autonomous actors are involved in policy implementation. Thus, if evaluators seek to understand how policy impacts come about, they need to look into the black box of implementation. Game theory has long been available as a method that supports rigorous analysis of the interaction processes among actors; so far, however, it has not been widely applied in the evaluation field. Although there are known limitations to using game-theoretic models in real-world settings, there are also several examples of past applications where game theory has been helpful as part of policy analysis and institutional analysis. Hence, when searching for a new methodological toolbox to help analyze implementation processes in a networked society, questions about the usefulness of game theory as an evaluation method remain pertinent. This paper reports an application of game theory to evaluate the implementation of coastal policy decisions in the Netherlands. In particular, it evaluates the implementation of a national policy first formulated in 1990, followed by regional implementation processes driven by local actors. Based on this case, the usefulness of game theory as a method for evaluation is explored.
This is done by addressing methodological requirements such as analytical rigor, practical feasibility, and the usefulness of resulting insights. Keywords: Game theory; Multi-actor systems; Coastal policy; Policy implementation;
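The abstract does not specify which game-theoretic models the authors used. As a minimal illustration of the basic analytical step, the sketch below finds the pure-strategy Nash equilibria of a two-actor game in normal form; the actors (say, a national agency and a regional actor), their strategies and the payoffs are all hypothetical:

```python
from itertools import product


def pure_nash_equilibria(payoffs_a, payoffs_b):
    """Return all pure-strategy Nash equilibria of a two-player normal-form game.

    payoffs_a[i][j] and payoffs_b[i][j] are the payoffs to players A and B when
    A plays strategy i and B plays strategy j. A cell (i, j) is an equilibrium
    when neither player can gain by deviating on their own.
    """
    rows, cols = len(payoffs_a), len(payoffs_a[0])
    equilibria = []
    for i, j in product(range(rows), range(cols)):
        # A cannot do better by switching row while B keeps column j
        a_best = all(payoffs_a[i][j] >= payoffs_a[k][j] for k in range(rows))
        # B cannot do better by switching column while A keeps row i
        b_best = all(payoffs_b[i][j] >= payoffs_b[i][k] for k in range(cols))
        if a_best and b_best:
            equilibria.append((i, j))
    return equilibria


# Hypothetical payoffs; strategy 0 = "cooperate", 1 = "act alone".
national = [[3, 0], [5, 1]]
regional = [[3, 5], [0, 1]]
print(pure_nash_equilibria(national, regional))  # [(1, 1)]
```

In this prisoner's-dilemma-shaped example both actors end up acting alone even though mutual cooperation pays each of them more, which is one way game theory can account for a gap between a jointly agreed policy and what is actually implemented.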


O 242

Finding a Comparison Group: Is Crowdsourcing a Viable Option?

T. Azzam 1, J. Miriam 1

1 Claremont Graduate University, School of Behavioral and Organizational Sciences, Claremont, USA

This paper presents a research study exploring the methodological viability of crowdsourcing as a way to create matched comparison groups. The study compares the survey results of a truly randomized control group with those of a matched comparison group created using Amazon.com's Mturk crowdsourcing service. Both the control group and the matched comparison group were compared with the truly randomized treatment group to determine the methodological reliability of crowdsourcing. Initial results indicate that this approach is a viable option for evaluation designs that lack ready access to a comparison group, large budgets or time. This methodological approach can help transform many real-world evaluations in a quick and cost-effective way. The paper will highlight the strengths and limitations of the crowdsourcing approach and describe the process for using crowdsourcing in evaluation practice. Increasingly, evaluations are being used to understand how much programs affect those who participate. To obtain the most accurate measure of participant outcomes, it is often recommended to compare the outcomes of program participants with those of a randomized control group or of a non-randomized comparison group whose characteristics are very similar to those of the participants. Statistical techniques used to identify a good comparison group, such as propensity score matching, often require the evaluator to collect outcome data from an unfeasibly large number of people beyond those already enrolled in the program. Amazon's Mturk is an online crowdsourcing website owned by Amazon through which people can be recruited to complete tasks such as filling out a survey or testing a website. This system could allow evaluators to create a high-quality comparison group at low cost.
The current study aimed to demonstrate how Amazon's Mturk and propensity score matching can be used to create a comparison group for the evaluation of a program for college students. Initial findings suggest that crowdsourcing is a viable approach for creating comparison groups; however, it has certain limitations. For example, crowdsourcing websites such as Mturk require participants to be 18 years or older, which rules out this method for evaluating many education programs serving children under 18. Even with this limitation in mind, many program evaluations can benefit from adding a crowdsourced matched group, because it is very cost-effective and data can be collected in a matter of days. This could transform many evaluation designs and improve the internal validity of their results. Keywords: Crowdsourcing; Comparison group; Quasi-experimental design; Impact evaluation; Matched group design;
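The matching step described above can be sketched in a few lines. This is a minimal illustration, not the authors' procedure: it assumes a logistic-regression propensity model fitted by plain gradient ascent (the learning rate and number of epochs are arbitrary choices) and pairs each program participant with the crowdsourced respondent whose estimated propensity is closest, with replacement and no caliper. A real analysis would also check covariate balance after matching.

```python
import math


def _sigmoid(z):
    # numerically stable logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def estimate_propensity(X, treated, lr=0.1, epochs=2000):
    """Fit a logistic regression of treatment status on covariates by batch
    gradient ascent on the log-likelihood; return each unit's propensity."""
    n, p = len(X), len(X[0])
    w = [0.0] * (p + 1)  # w[0] is the intercept
    for _ in range(epochs):
        grad = [0.0] * (p + 1)
        for x, t in zip(X, treated):
            err = t - _sigmoid(w[0] + sum(wk * xk for wk, xk in zip(w[1:], x)))
            grad[0] += err
            for k in range(p):
                grad[k + 1] += err * x[k]
        w = [wk + lr * g / n for wk, g in zip(w, grad)]
    return [_sigmoid(w[0] + sum(wk * xk for wk, xk in zip(w[1:], x))) for x in X]


def nearest_neighbour_match(scores, treated):
    """Pair each treated unit with the comparison unit whose propensity score
    is closest (1:1 matching with replacement, no caliper)."""
    pool = [j for j, t in enumerate(treated) if t == 0]
    return [
        (i, min(pool, key=lambda j: abs(scores[i] - scores[j])))
        for i, t in enumerate(treated)
        if t == 1
    ]


# One hypothetical covariate; 1 = program participant, 0 = crowdsourced respondent.
X = [[1.0], [1.2], [1.1], [1.05], [5.0], [6.0], [7.0]]
treated = [1, 1, 1, 0, 0, 0, 0]
scores = estimate_propensity(X, treated)
print(nearest_neighbour_match(scores, treated))
```

Matching with replacement lets one close respondent serve several participants, which suits a small but cheap crowdsourced pool; matching without replacement or adding a caliper are common alternatives.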


S2-41 Strand 2

Panel

New steps with Contribution Analysis: strengthening the theoretical base and widening the practice

O 008

New steps with Contribution Analysis: strengthening the theoretical base and widening the practice

J. Toulemonde 1, F. Leeuw 2, S. Lemire 3

1 EUREVAL and Lyon University, Lyon, France
2 Dutch Ministry of Justice, Den Haag, Netherlands
3 Ramboll Management, Copenhagen, Denmark

Wednesday, 3 October, 2012

9:30-11:00

Contribution Analysis is a pragmatic approach to applying the principles of theory-based evaluation. It follows the causal chains along their whole length, reports on whether or not the intended changes occurred, and identifies the main contributions to such changes, hopefully including the programme under evaluation. Over the last ten years, Contribution Analysis has been repeatedly recommended in a number of evaluation guidelines and has attracted visible interest at international events, including the EES Conference in Prague in 2010. However, instances of rigorous implementation have been surprisingly scarce, and its theoretical foundations are still fragile. A special issue of Evaluation on Contribution Analysis, edited by John Mayne, will be published by mid-2012. The session will gather several of the authors involved in this special issue. It intends to highlight the latest steps taken and the challenges ahead in terms of theoretical foundations, practicalities and quality standards. The speakers will be Frans Leeuw (Dutch Ministry of Justice), Sebastien Lemire (Ramboll Management Consulting), Jacques Toulemonde (Eureval and Lyon University), Rob D. van den Berg (Global Environment Facility) and Erica Wimbush (National Health Service Scotland). The topics covered will be: (i) formulating valid contribution claims, (ii) investigating external factors systematically, (iii) analysing catalytic effects, (iv) assessing the quality of a contribution analysis, and (v) satisfying the needs of evaluation users with a contribution analysis. Keywords: Causality; Impact evaluation; Theory based evaluation;
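To make "following the causal chain along its whole length" concrete, here is a minimal bookkeeping sketch of how an evaluator might record a results chain and flag, link by link, whether the intended change occurred, how strong the evidence is, and which external factors still need investigating. The `Link` class, its field names and the three-level evidence scale are hypothetical; the sketch does not capture the substantive judgements Contribution Analysis requires.

```python
from dataclasses import dataclass, field


@dataclass
class Link:
    """One hypothetical cause -> effect step in a programme's results chain."""
    cause: str
    effect: str
    change_observed: bool  # did the intended change actually occur?
    evidence: str  # illustrative scale: "strong", "weak" or "none"
    external_factors: list = field(default_factory=list)


def review_chain(chain):
    """Walk the chain from inputs to impacts and classify every link.

    Returns a list of (step, link label, status, external factors to investigate).
    """
    findings = []
    for step, link in enumerate(chain, start=1):
        if not link.change_observed:
            status = "intended change not observed"
        elif link.evidence == "strong":
            status = "supported"
        else:
            status = "change observed, evidence weak"
        label = f"{link.cause} -> {link.effect}"
        findings.append((step, label, status, tuple(link.external_factors)))
    return findings


chain = [
    Link("training delivered", "staff skills improved", True, "strong"),
    Link("staff skills improved", "procedures changed", True, "weak", ["new regulation"]),
    Link("procedures changed", "service quality improved", False, "none"),
]
for finding in review_chain(chain):
    print(finding)
```

Listing external factors against each link keeps rival explanations visible next to the programme's own claimed contribution, in line with the session's topic (ii).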


S5-11 Strand 5

Paper session

The role of evaluation in the civil society I


O 010

PADev as a method for assessing agencies

W. Rijneveld 1, F. Zaal 2

1 Resultante, Gorinchem, Netherlands
2 Royal Tropical Institute, Amsterdam, Netherlands

Wednesday, 3 October, 2012

9:30-11:00

PADev is an evaluation methodology that has been developed in Ghana and Burkina Faso since 2008. It adopts the perspective of the local community: in participatory workshops, men and women, older and younger people, officials and ordinary people investigate the changes that have occurred in their livelihood domains over the past thirty years. An inventory of all interventions, projects and initiatives in the area during that period allows them to track the relationships between the changes and the interventions, and the effects of interventions on the various categories of people they represent and describe. The effects on different wealth classes are a prominent issue. In one of the exercises, people list the five to ten major agencies that intervene in their area and rate them on a number of criteria, determined during previous exercises, that relate to the process and outcomes of interventions. These include relevance, long-term commitment, realistic expectations, honesty and transparency, and level of participation. Combining the results from different subgroups provides a broader picture of how agencies are rated by the population, and reveals interesting differences between men and women, young and old, or subgroups from different geographical locations. This exercise indicates that people's perceptions, when captured systematically, can provide an interesting alternative or addition to the usual approaches to organizational assessment. The fact that this is done through participatory workshops with different subgroups, and with a scope that includes all local intervening actors rather than a single organization, helps to eliminate bias towards a specific organization. The presentation will elaborate on the method used and the outcomes of the assessment, with an analysis of the different criteria, differences between types of actors (e.g. government and non-government), and differences between genders and between locations.
A number of examples will detail the sort of information obtained and how it could encourage intervening agencies to strive to be rated the best agency. The presentation will also discuss when and where this method may be a useful addition to the evaluator's toolbox. Keywords: Participation; Organizational Assessment; Downward accountability;
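Combining subgroup ratings, as described above, amounts to averaging scores per agency overall and per subgroup, then comparing subgroups. A minimal sketch, assuming each rating is recorded as a hypothetical (subgroup, agency, criterion, score) tuple on a numeric scale and that every score counts equally; PADev's own scoring and weighting conventions may differ:

```python
from collections import defaultdict
from statistics import mean


def aggregate_ratings(ratings):
    """Average agency scores overall and per subgroup.

    ratings: iterable of (subgroup, agency, criterion, score) tuples.
    Returns (overall, by_group): overall[agency] is the mean of all its scores,
    by_group[(subgroup, agency)] the mean of its scores within one subgroup.
    """
    overall = defaultdict(list)
    by_group = defaultdict(list)
    for subgroup, agency, _criterion, score in ratings:
        overall[agency].append(score)
        by_group[(subgroup, agency)].append(score)
    return (
        {agency: mean(s) for agency, s in overall.items()},
        {key: mean(s) for key, s in by_group.items()},
    )


def group_gap(by_group, agency, group_a, group_b):
    """Difference in an agency's mean score between two subgroups."""
    return by_group[(group_a, agency)] - by_group[(group_b, agency)]


# Hypothetical ratings on a 1-5 scale.
ratings = [
    ("women", "Agency A", "relevance", 4),
    ("women", "Agency A", "honesty and transparency", 5),
    ("men", "Agency A", "relevance", 3),
    ("men", "Agency A", "honesty and transparency", 3),
    ("women", "Agency B", "relevance", 2),
    ("men", "Agency B", "relevance", 4),
]
overall, by_group = aggregate_ratings(ratings)
print(overall)
print(group_gap(by_group, "Agency A", "women", "men"))
```

Keeping the subgroup means alongside the overall mean is what makes differences such as those between men and women, or between locations, visible rather than averaged away.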

O 012

Building capacities of Czech development CSOs based on the FoRS (Czech Forum for Development Cooperation) Code on Effectiveness

I. Pibilova 1, D. Svoboda 2, J. Bohm 3

1 Independent Consultant, Prague 10, Czech Republic
2 Development Worldwide, Prague 2, Czech Republic
3 SIRIRI, Prague 2, Czech Republic

The Czech Republic is an emerging donor; nevertheless, over the last 10 years more than 60 Czech CSOs have been involved in international development. Most of these CSOs formed the Czech Forum for Development Cooperation (FoRS) for joint policy, advocacy, capacity building and international networking. FoRS members have been engaged in the global debate on CSO development effectiveness since 2007, which led to the approval of the FoRS Code on Effectiveness and the first self-evaluation in 2011. The Code provides indicators in the following areas: 1. Grassroots knowledge, 2. Transparency and accountability, 3. Partnership, 4. Respect for human rights and gender equality, and 5. Accountability for impacts and their sustainability. It is the first of its kind in the EU-12 and one of the few among EU development platforms. The Code and the results of the self-evaluation have been widely shared, and workshops have been organized in response to the needs identified. However, it became clear that innovative solutions were needed to ignite a wider debate on development effectiveness and to foster capacity building among diverse actors, including less developed, often volunteer-based CSOs, individual experts and students. Two new concepts were therefore launched:

A. www.DevelopmentCoffee.org is an online platform based on crowdsourcing, so anybody can propose themes and vote on them. Monthly evening coffees on the most popular themes are hosted by different CSOs. After an introduction by experts, who explain the relevant links to the Code, an informal debate follows among diverse (non-)state actors.

B. Peer Reviews allow a structured, in-depth debate on each principle of the Code and mutual learning among self-selected peer CSOs. The process is facilitated through the FoRS online forum. Once piloted, this approach will be shared with (inter)national platforms within the global post-Busan process on CSO development effectiveness.
The advantages of both concepts lie in their quick, easy set-up at zero cost and in capacity building driven fully by the target groups. The EES presentation outlines the underlying assumptions, methodology and key lessons learnt with respect to these concepts, and thus provides an impulse for other actors to create similar initiatives or to use some of their elements elsewhere. The EES criteria are reflected as follows:

1. Relevance: The concepts can be used by evaluation practitioners, their networks and any professional platform/network interested in free experience sharing and mutual learning.

2. Quality: The concepts will be introduced in a structured way, including a demonstration and a short manual.


3. Theme of the Conference: The theme is directly addressed, as the concepts use the networked society to enhance development effectiveness. Both concepts are fully participatory and easy to access and share, and both use social media for promotion, idea generation and engagement.

4. Evaluation knowledge and skills: Both concepts provide an open platform for promoting, evaluating and sharing experiences on effectiveness principles.


5. Creativity/innovation: DevelopmentCoffee.org is, to the authors' knowledge, a unique crowdsourcing initiative in the EU development sector. Peer reviews are also newly employed to enhance CSO development effectiveness at the national level.

6. Public interest: The concepts were generated in an emerging donor country and bring the perspective of new actors. Keywords: Social media; Crowdsourcing; Self-evaluation; Peer review; CSO development effectiveness;

O 134


The contribution of CSOs in development: the challenges of tracking and documentation of results in CSOs in Tanzania


D. Biria 1

1 Tanzania Evaluation Association, Administration, Dar es Salaam, Tanzania

Introduction: In recent years many stakeholders have gradually started to realize the importance and contribution of the civil society sector to the country's development. However, one major challenge remains: CSOs fail to account for their contribution quantitatively and qualitatively.

Background of the study: Since the early 1990s there has been a sharp increase in the number of civil society organizations in the country. This is partly due to the democratization of governance processes and the economic liberalization policy, which forced the government to pull out of business and of the provision of some services. The gap created by the withdrawal of government was filled by CSOs and the private sector. Dr. L. Ndumbaro (2007), the Foundation for Civil Society (FCS) (2006) and others have shown that the CSOs' contribution is enormous but needs to be substantiated by facts and figures. If CSOs are to prove their relevance, they have to invest in monitoring and evaluation so that they are able to track and document results.

Problem statement: Findings of the CSO capacity assessments commissioned by UNDP (UNDP, 2006) and FCS (2009) showed that CSOs fail to show the results of their interventions effectively. Although CSOs claim to contribute immensely to development, there remains no written evidence. What is really missing is evidence of what comes out of those interventions, not only of activity targets.

Management questions: (a) Why do CSO leaders fail to track the results of their work? (b) What can be done to overcome this situation?

Research questions: (a) What factors lead to little or no tracking and documentation of the results of CSOs' work? (b) What actions should be taken to establish and support M&E functions in CSOs' work?
Objectives: (a) Examine the factors responsible for the low level of tracking and documentation of CSOs' work. (b) Identify specific actions required to change the mindset of CSO leaders so that they realize the importance of tracking beyond the activity and output level. (c) Establish how M&E systems can be put in place where they are non-existent and strengthened where they exist within the sector.

Significance of the study: To improve the tracking, documentation and dissemination of CSO work in the country.

Methodology:
Research design: Personal semi-structured interviews will be conducted, followed up by visits to interview CSO members.
Population: There is a variety of CSOs in the country; according to Dr. Ndumbaro (2006), there are over 8,000.
Sampling and sampling technique: The study will adopt a stratified random sampling method to select CSOs, covering 20% of the total population.
Data collection: The study will collect both qualitative and quantitative data. Secondary data will provide background information and determine the scope of primary data collection.
Data collection instrument: Both structured closed-ended and open-ended questions will be used to obtain quantitative and qualitative information respectively. Keywords: Tracking; Outcome; Evaluation; Documentation; Dissemination;
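The sampling design above, stratified random sampling of 20% of the population, can be sketched as follows. With over 8,000 CSOs, a 20% sample would mean more than 1,600 organisations. The stratification variable is not stated in the abstract, so the `stratum_of` function here is a hypothetical placeholder (for instance CSO type or region):

```python
import random
from collections import defaultdict


def stratified_sample(units, stratum_of, fraction=0.2, seed=None):
    """Draw a proportional stratified random sample without replacement.

    units: list of sampling units (e.g. CSO identifiers).
    stratum_of: function mapping a unit to its stratum (hypothetical here).
    fraction: share of each stratum to sample (0.2 mirrors the 20% in the text).
    Returns a dict mapping each stratum to its list of sampled units.
    """
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)
    rng = random.Random(seed)
    sample = {}
    for stratum, members in strata.items():
        # assumption: keep at least one unit so small strata are represented
        k = max(1, round(fraction * len(members)))
        sample[stratum] = rng.sample(members, k)
    return sample


# 100 hypothetical CSOs: the first 60 urban, the remaining 40 rural.
csos = [f"cso{i}" for i in range(100)]
area = lambda unit: "urban" if int(unit[3:]) < 60 else "rural"
drawn = stratified_sample(csos, area, fraction=0.2, seed=1)
print({stratum: len(units) for stratum, units in drawn.items()})
```

Sampling the same fraction from every stratum keeps each stratum's share of the sample equal to its share of the population; the `max(1, ...)` guard, an added assumption, slightly over-samples very small strata so that none is left out.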


S2-04 Strand 2

Paper session

Defining outcomes


O 013

Seeking a Valid Attribution Model for Conflict Interventions

E. Brusset 1

1 Channel Research, Lasne, Belgium

Wednesday, 3 October, 2012

9:30-11:00

Brief bio: Mr Emery Brusset is an evaluation consultant specialised in conflict situations. Over 17 years of continuous experience, he has been experimenting with different methods that allow evidence-based assessments in settings such as Afghanistan and Melanesian societies. He is about to submit a PhD at LSE on complexity theory applied to evaluation, on which this presentation draws.

The main challenge for evaluating interventions that occur in the context of conflict (particularly peace interventions) is the causal link between specific outcomes and broad improvements in the situation. Conflict situations closely match what a growing literature on complexity theory defines as complex interconnected systems, including highly networked actors and societies bound together by strong tensions. This leads to low-quality predictions and poor correlations between causes and effects. If impact is defined as the actual positive or negative, intended or unintended consequence of an outcome, peace impact assessment will be particularly challenging in networked conflicts (note the link to the conference theme). The main bodies of thinking in this area today rely on variants of Theories of Change and contribution analysis, which essentially track cascades of effects. The presentation will outline why these are not optimal solutions for conflict interventions. The level of attribution remains very low, as shown in recent evaluations such as the Joint Donor Evaluation of Peace-Building in Sudan and the Joint Donor Evaluation in Congo, both carried out under the aegis of the OECD, in which the author played a central role. Simply put, the quality of evaluations of peace-building today is chronically poor, and this is not due to the quality of the teams or to a lack of time or information. The reasons are to be found in methods.
The challenge for contribution analysis and Theories of Change mainly concerns the difficulty of assessing the strength of links in a causal chain, and the phenomenon of evaporation of objectives, a chronic condition given the fast-moving change that prevails in the object of evaluation. To overcome this, we know of three main attribution models:

1. Baselines capturing initial conditions, to provide a before/after reference.
2. Quasi-experimental design, using control groups that do not benefit from the intervention.
3. Interaction models, identifying outcomes and their interaction with the key catalysts in a conflict dynamic.

The proposed paper will outline the analytical steps in the latter attribution model, draw the parallel with impact assessment as used in mining and petroleum projects, and show how it could be a better attribution model for complex systems. The paper will draw on specific academic thinking that could inform evaluation, and on concrete examples (Afghanistan, Indonesia, New Caledonia, Colombia, Congo and Sudan) of what has and has not worked in the implementation of conflict evaluations in recent years.

Audience: This paper will be written primarily for evaluation commissioners and managers, as well as a broader public interested in the evaluation of politics. It will present options for the proper use of evaluation as an analytical tool and also as a tool for enhanced participation. Keywords: Conflict evaluation; Complexity theory; Theory of change; Contribution analysis; Impact assessment;
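The first two attribution models listed above can be expressed as simple estimators. The sketch below uses a hypothetical indicator (say, the number of violent incidents in an area) with invented figures, and computes a before/after change against a baseline (model 1) and a difference-in-differences that nets out the change in a comparison area not reached by the intervention (model 2 combined with baselines). It does not implement the interaction model (model 3), which the abstract does not specify in enough detail to code.

```python
def before_after(treated_before, treated_after):
    """Model 1: change in the intervention area relative to its own baseline."""
    return treated_after - treated_before


def difference_in_differences(treated_before, treated_after,
                              control_before, control_after):
    """Model 2 with baselines: the change in the intervention area minus the
    change in a comparison area that did not benefit from the intervention."""
    return (treated_after - treated_before) - (control_after - control_before)


# Invented incident counts for one intervention area and one comparison area.
print(before_after(40, 25))                       # -15
print(difference_in_differences(40, 25, 42, 35))  # -8
```

The difference-in-differences estimate is only credible if both areas would have followed parallel trends without the intervention, an assumption that the fast-moving dynamics described above can easily violate.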

O 014

Developing an Index to Evaluate Effectiveness of Sanitation Program

R. S. Goyal 1, M. Chaudhary 1

1 Institute of Health Management Research, Jaipur, India

The effectiveness of investments in household sanitation programs can best be assessed by going beyond physical outputs (number of toilets built, percentage of population covered) and looking at components such as the extent to which toilets are maintained and used by the community. The assessment should also incorporate the program's impact in terms of reduced morbidity associated with lack of sanitation and improved quality of life, particularly for women (who bear the brunt of the lack of sanitation facilities within the house). This paper discusses a theoretical framework for developing an index to assess the effectiveness of sanitation programs in urban and rural areas of developing countries. The Sanitation Effectiveness Index should ideally reflect:
- effectiveness of the interventions/program (policy, investment, reach, coverage, etc.);
- appropriateness and affordability of technology;
- socio-cultural and physical acceptability;
- outcomes and impact in terms of lowered morbidity (associated with lack of sanitation), improved quality of life, particularly for women (convenience, time saved, safety/security), and improved general hygiene conditions.


Because the standalone and cumulative contributions of these factors will manifest differently across societies and countries, the challenge is to identify the contributors/variables that are most widely applicable and acceptable across nations. Similarly, we will have to decide on the relative contribution (weight) of each factor in the index. On the basis of our literature search, we are considering the following variables for the construction of the index:
- Effectiveness of the interventions/program: policy and political commitment (e.g., subsidies for toilet construction), strategy and programs, budget allocation, mainstreaming, program implementation mechanism and human resources, geographical reach, population covered, public and private expenditure, proportion of toilets maintained and regularly used, monitoring and oversight.
- Appropriateness and affordability of technology: cost-effective and user-friendly, widely available, appropriate to local contexts (e.g., water scarcity, recycling of excreta).
- Socio-cultural and physical acceptability: knowledge, understanding and appreciation of usage and benefits, priority, social relevance (gender concerns, equal access for all), cultural acceptability (e.g., of building a toilet inside the house).

S2-04

Wednesday, 3 October, 2012

9:30–11:00

- Outcomes and impact: prevalence of diseases associated with lack of sanitation (e.g., diarrhoea, malaria, schistosomiasis, trachoma, intestinal helminths such as ascariasis, trichuriasis and hookworm, Japanese encephalitis, hepatitis A, arsenic poisoning, fluorosis), family expenditure on medical care for these diseases, reach of the toilet program to all sections of society, quality of life (particularly of women) in terms of convenience, time saved and safety/security, benefits accrued from recycling of excreta, and improvement in general hygiene conditions.

The paper will review the merits of including these variables in the index. To determine the relative contribution (weight) of each variable, a principal component factor analysis will be attempted: the loadings of the variables on the principal component will be used to set their relative weights in the index. We will attempt to validate this index on data from India. Keywords: Sanitation; Evaluation; Effectiveness; Index; Outcomes and impact;
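The weighting step described in this abstract (loadings on the principal component determine each variable's weight) can be sketched as follows. This is a minimal sketch under stated assumptions, not the authors' procedure: the indicator columns and district values are hypothetical; every indicator is assumed to be oriented so that higher means better (morbidity would be entered as a reduction); indicators are min-max scaled to [0, 1]; and weights are taken as squared loadings on the first component normalized to sum to 1, which is one common convention for composite indicators.

```python
"""Composite sanitation-effectiveness index with PCA-derived weights.

Sketch only: indicator columns and values are hypothetical, and every
indicator is assumed to be oriented so that higher = better.
"""

import numpy as np


def pca_weights(X: np.ndarray) -> np.ndarray:
    """Weights from the first principal component of the correlation matrix.

    Each weight is an indicator's squared loading divided by the sum of
    squared loadings; squaring removes the arbitrary sign of eigenvectors.
    """
    corr = np.corrcoef(X, rowvar=False)      # indicators are columns
    eigvals, eigvecs = np.linalg.eigh(corr)  # eigenvalues in ascending order
    first = eigvecs[:, -1]                   # component with largest variance
    loadings = first * np.sqrt(eigvals[-1])
    squared = loadings ** 2
    return squared / squared.sum()


def sanitation_index(X: np.ndarray, weights: np.ndarray) -> np.ndarray:
    """Min-max scale each indicator to [0, 1], then take the weighted sum."""
    lo, hi = X.min(axis=0), X.max(axis=0)
    scaled = (X - lo) / (hi - lo)            # assumes no constant column
    return scaled @ weights


if __name__ == "__main__":
    # Rows: hypothetical districts. Columns (hypothetical): share of toilets
    # in regular use, population coverage, reduction in diarrhoea incidence.
    X = np.array([
        [0.2, 0.30, 0.10],
        [0.5, 0.55, 0.40],
        [0.9, 0.80, 0.70],
        [0.1, 0.20, 0.05],
        [0.6, 0.50, 0.65],
    ])
    w = pca_weights(X)
    print("weights:", np.round(w, 3))
    print("index:  ", np.round(sanitation_index(X, w), 3))
```

Because the weights sum to 1 and each indicator is scaled to [0, 1], the index also stays in [0, 1]: a district at the maximum on every indicator scores 1 and one at the minimum on every indicator scores 0. If the first component explains only a small share of the variance, a single-component weighting may be a poor summary, which is one of the points a validation on Indian data would need to check.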

O 015

Measurement in evaluation: what do the numbers actually tell us?

A. Doucette 1

1 The George Washington University, The Evaluators Institute, Washington D.C., USA

The relevance of evaluation is often thought of in terms of assessing the need for intervention, profiling trajectories of change resulting from program exposure (outcome), estimating program impact, determining costs and benefits, and so forth. While there is general agreement on the importance of evaluation, there is much debate about how to build an adequate evidence base for investigating what works for whom, under what conditions, and why. In a world where social program costs are rising exponentially, measuring program outcomes and impact is becoming a significant tool for informing program and policy decisions. Program outcome research is designed to address several questions. Is the program effective? How much exposure is necessary to achieve a good outcome? Is one approach better than another? What accounts for variance in outcomes? What is the relationship of outcome to cost? These are just some of the questions asked by evaluators, policy decision-makers, governments and funders. What the evaluation of all these issues has in common is its dependence on measurement. Although we applaud the use of sophisticated analytic models that allow us to partition the variance attributable to program and participant characteristics and the contribution of specific approaches and circumstances, we rarely question the soundness of the measures used to support evaluation decisions about how a program/intervention works, how participants change, and how effective a program is in terms of participant/stakeholder outcomes. More often than not, we assume measurement precision rather than scrutinizing the quality of the measurement we rely on to support the theories we develop and the decisions we make about a program or intervention.
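As one illustration of checking measurement precision rather than assuming it (a standard statistic, not a method the abstract itself names), here is a minimal sketch of Cronbach's alpha, a widely used internal-consistency reliability coefficient, assuming a respondents-by-items score matrix. The function name and the example responses are hypothetical.

```python
"""Cronbach's alpha for a respondents-by-items score matrix (illustrative)."""

import numpy as np


def cronbach_alpha(scores) -> float:
    """alpha = k/(k-1) * (1 - sum of item variances / variance of total score)."""
    scores = np.asarray(scores, dtype=float)
    k = scores.shape[1]                                # number of items
    item_variances = scores.var(axis=0, ddof=1).sum()  # sample variance per item
    total_variance = scores.sum(axis=1).var(ddof=1)    # variance of summed score
    return k / (k - 1) * (1.0 - item_variances / total_variance)


if __name__ == "__main__":
    # Hypothetical: 4 respondents answering 3 items on a 1-5 scale.
    responses = [[4, 5, 4], [2, 3, 2], [5, 5, 4], [1, 2, 2]]
    print(round(cronbach_alpha(responses), 3))
```

Alpha close to 1 only says that the items vary together; it says nothing about whether they measure the construct the evaluation cares about, which is the goodness-of-fit question the presentation raises.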
The presentation focuses on the role of measurement, the assumptions we make in selecting measurement approaches, and the goodness of fit between the selected measurement metrics and the objectives of the evaluation. While measurement is only one step in the evaluation process, it is nevertheless the foundation. The complexity of the evaluation environment presents new challenges. For example, while educational reform efforts in developing countries target 21st-century skills (communication, collaboration, self-managed learning, etc.), evaluations of those efforts continue to rely on standardized tests. In particular, the presentation will examine the measurement approach taken in educational reform initiatives in developing countries, highlighting the incorporation of sophisticated measurement models (Item Response Theory); what we can learn from model fit and, more importantly, from model misfit; and whether measurement data adequately address the evaluation objectives. The challenges of applying measures to diverse populations will also be addressed, with specific emphasis on how measures and participant response options may function differently than expected. Data from several evaluation studies will be used to illustrate often-ignored measurement challenges in evaluation practice. Keywords: Measurement; Methods;
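For the point about learning from model fit and misfit under Item Response Theory, here is a minimal sketch of the dichotomous Rasch (one-parameter logistic) model together with the outfit mean-square, a standard item-misfit statistic. Assumptions: person abilities `theta` and item difficulties `b` have already been estimated elsewhere (estimation is not shown), responses are scored 0/1, and all names and data are hypothetical.

```python
"""Rasch-model item fit check (outfit mean-square). Illustrative sketch."""

import numpy as np


def rasch_prob(theta: np.ndarray, b: np.ndarray) -> np.ndarray:
    """P(correct) for each person (rows) and item (columns):
    1 / (1 + exp(-(theta_n - b_i)))."""
    return 1.0 / (1.0 + np.exp(-(theta[:, None] - b[None, :])))


def outfit_mnsq(responses: np.ndarray, theta: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Mean of squared standardized residuals per item.

    Values near 1 match the model; values well above 1 flag unexpected
    responses (e.g. able respondents failing an item that should be easy).
    """
    p = rasch_prob(theta, b)
    z_squared = (responses - p) ** 2 / (p * (1.0 - p))
    return z_squared.mean(axis=0)


if __name__ == "__main__":
    theta = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])  # hypothetical abilities
    b = np.array([0.0, 0.0])                       # two items, equal difficulty
    responses = np.array([
        [0, 1],
        [0, 1],
        [1, 0],
        [1, 0],
        [1, 0],
    ])  # item 0 behaves as expected; item 1 is answered "backwards"
    print(np.round(outfit_mnsq(responses, theta, b), 2))
```

Here item 0 gets an outfit of about 0.40 and item 1 about 4.24. A pattern like item 1's, where higher-ability respondents do worse, is the kind of misfit that can reveal an item or response option functioning differently than expected in a particular population.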

W W W. E U R O P E A N E VA L U AT I O N . O R G

ABSTRACT BOOK

14

TH E 10 T H EES BIENN IAL CON F EREN CE 3 5 OCTOBER, 2012, HEL SINK I, FIN LAN D

S3-18 Strand 3

Panel

Assuring evaluation quality standards

S3-18

O 016

Assuring Evaluation Quality Standards in a Networked society

F. Etta 1, E. Kaabunga 2, A. Sibanda 3

1 African Evaluation Association, Lagos, Nigeria
2 African Gender & Development Evaluators Network, Nairobi, Kenya
3 African Gender & Development Evaluators Network, Harare, Zimbabwe

Wednesday, 3 October, 2012

9:30–11:00

Evaluation, especially of international development, continues to attract global attention, and many efforts are underway to improve evaluation quality. Most professional evaluation networks and international development organisations have developed evaluation guidelines, norms and/or standards with the purpose of enhancing the quality of the evaluation process and its products. Three reasons inform this panel discussion. First, the quality of evaluations and of evaluation practice, especially in Africa, is receiving increasing attention as the global debate rages about the development outcomes and impacts of aid. This debate has been framed primarily as one about methods, following the powerful emergence of the Impact Evaluation movement in the past 6–7 years, with the resurgence of Randomized Controlled Trials (RCTs) and experimental designs as the gold standard for impact evaluation. Advocates of other evaluation methods insist that evaluating impact is a legitimate evaluation endeavour and is not the preserve of any one method. Among other things, the debate has spawned new attention to quality assurance and control in evaluation. The second reason for this panel stems from the proposer's role in professional evaluation associations. As a member of four African evaluation associations, two national and two continental, I am curious to understand how other evaluators, managers and commissioners of evaluations use evaluation standards and guidelines to improve evaluation quality. The third and final reason concerns the technological nature of contemporary society: as a practitioner, I am seeking ways in which this learning can be massified, maximised and/or accelerated by information and communication technologies, which have changed the way contemporary society works.
We believe that reflecting on practice is something we rarely do, yet it is critical for learning. The Spring 2011 issue of New Directions for Evaluation (No. 129), published by the American Evaluation Association and Jossey-Bass, is devoted to lessons and reflections from evaluations. Michael Morris (2011), while acknowledging that major developments have taken place in the domain of evaluation ethics, observes a serious shortage of rigorous, systematic evidence that can guide evaluation or that evaluators can use for self-reflection or for improving their next evaluation (Morris, American Journal of Evaluation, 32(1), 134–151). The point is made that whereas research on ethics is scanty (Henry & Mark, 2003), guidelines, principles and standards are often generously heaped on evaluators. The American Journal of Evaluation carries the Guiding Principles for Evaluators in each volume. In 2007 the African Evaluation Association (AfrEA) approved the African Evaluation Guidelines, adapted from the AEA's Programme Evaluation Standards. The United Nations Evaluation Group (UNEG) approved Norms and Standards for evaluations within the UN system, and the OECD DAC published the Quality Standards for Development Evaluation (2010). Each panel member will consider these questions: How was quality assured/maintained in your last evaluation? What effect did using standards and guidelines have on quality assurance? Was your capacity improved for the next evaluation, and how? Keywords: Quality; Standards; Guidelines; Ethics; Practice;


S4-03 Strand 4

Paper session

Evaluating climate change and energy efficiency

S4-03

O 017

A Recipe for Success? Randomized Free Distribution of Improved Cooking Stoves in Senegal

J. Peters 1, G. Bensch 1

1 RWI, Essen, Germany

Wednesday, 3 October, 2012

9:30–11:00